Author: Denis Avetisyan

A new framework uses the inherent inconsistencies in AI-generated images to pinpoint even subtle manipulations with unprecedented accuracy.

Researchers introduce IFA-Net, leveraging masked autoencoders to amplify deviations from authentic image characteristics for robust forgery localization.

The increasing realism of AI-generated imagery presents a significant challenge to digital forensics, as current methods struggle to generalize beyond known forgery patterns. To address this, the paper ‘Detecting AI-Generated Forgeries via Iterative Manifold Deviation Amplification’ introduces IFA-Net, a framework that shifts the focus from identifying “what is fake” to modeling image authenticity with a frozen Masked Autoencoder (MAE). By iteratively amplifying reconstruction failures in potentially manipulated regions, IFA-Net achieves state-of-the-art performance in localizing forgeries and generalizes well across diverse manipulation types. Could this approach, grounded in the principle that natural images lie on a manifold, pave the way for more robust and adaptable forensic tools in an era of increasingly sophisticated synthetic media?

The Erosion of Visual Truth: A Mathematical Imperative

The emergence of diffusion models marks an inflection point for digital media, rapidly eroding the reliability of conventional methods for verifying image authenticity. These generative models, which can produce highly realistic images from text prompts alone, are outpacing the forensic tools meant to separate genuine content from synthetic creations. The problem is not merely that “fake” images exist: it undermines trust in visual information and creates serious challenges for legal, journalistic, and security work that depends on verifying the provenance of digital content.

Historically, digital forgery detection centered on statistical anomalies, the telltale traces of manipulation such as inconsistent lighting or aberrant noise patterns. Diffusion models, however, learn the distribution of real images with remarkable fidelity, so their outputs lack the obvious fingerprints of traditional forgeries. Because generated images statistically resemble authentic ones, detectors built around anomalies struggle to tell them apart, which calls for a fundamental rethinking of how image authenticity is assessed.

A promising alternative inverts this logic: instead of asking what is wrong with an image, model what should be right. By learning the structure and statistical distribution of natural images, in effect a profile or ‘plausibility space’ of authentic visuals, an algorithm can assess an image not by hunting for specific errors but by measuring how far it deviates from that learned model of authenticity. Manipulations too subtle for anomaly-based detectors can then still be flagged as departures from the expected norm, offering a more resilient defense against increasingly realistic synthetic media.

IFA-Net: Amplifying the Signal of Imperfection

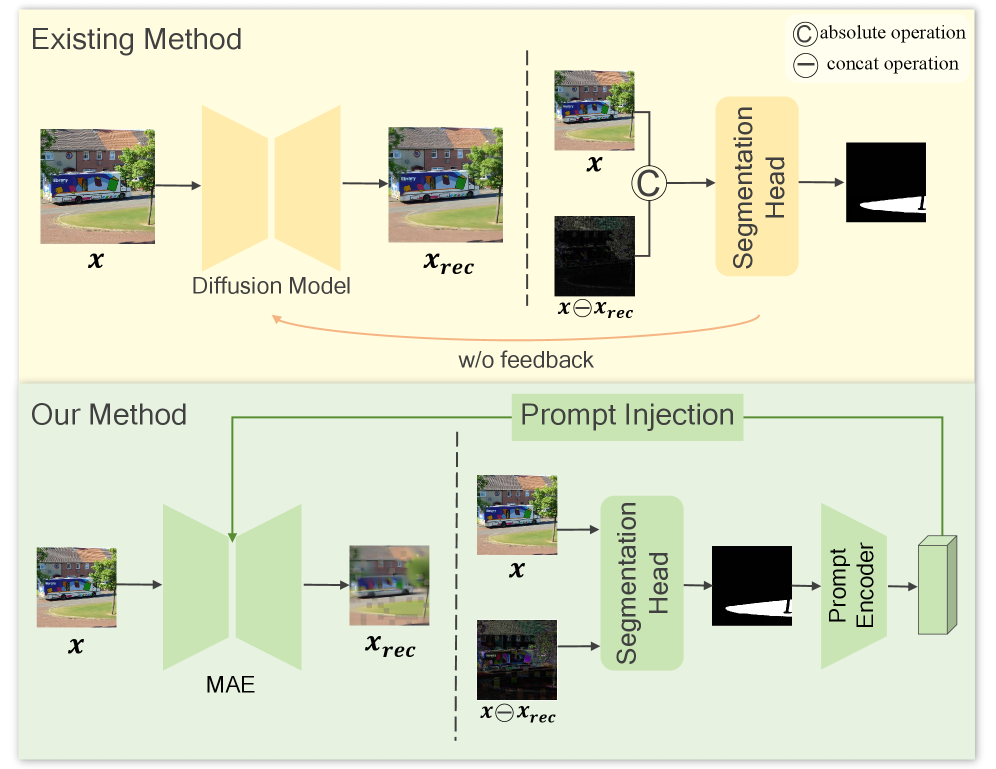

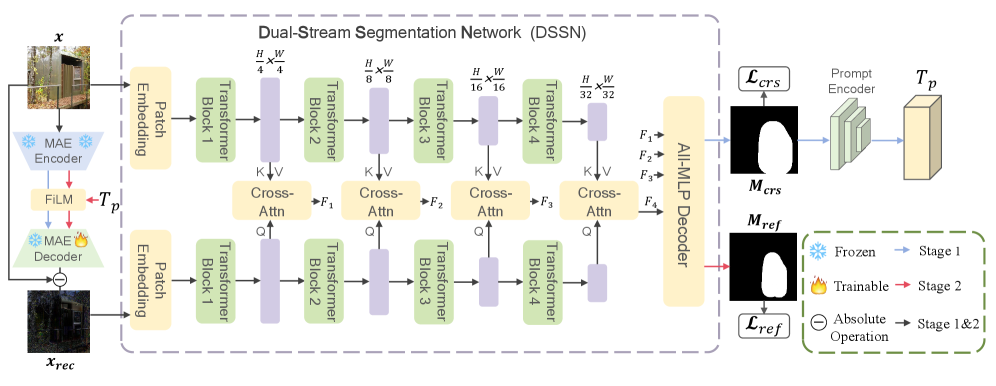

IFA-Net starts from the observation that no autoencoder reconstructs an image perfectly, whether the image is authentic or forged, because information is lost when patches are masked and the rest is encoded. The network exploits an asymmetry in how that failure plays out: the frozen MAE, having learned what natural images look like, reconstructs authentic regions with small, predictable errors, whereas manipulated regions produce larger and less predictable discrepancies. Authenticity is therefore modeled not as perfect reconstruction but as a characteristic kind of imperfect reconstruction, which lets IFA-Net separate ordinary reconstruction error from error introduced by tampering. The task shifts from detecting forgeries directly to detecting deviations from the expected reconstruction behavior of authentic content.

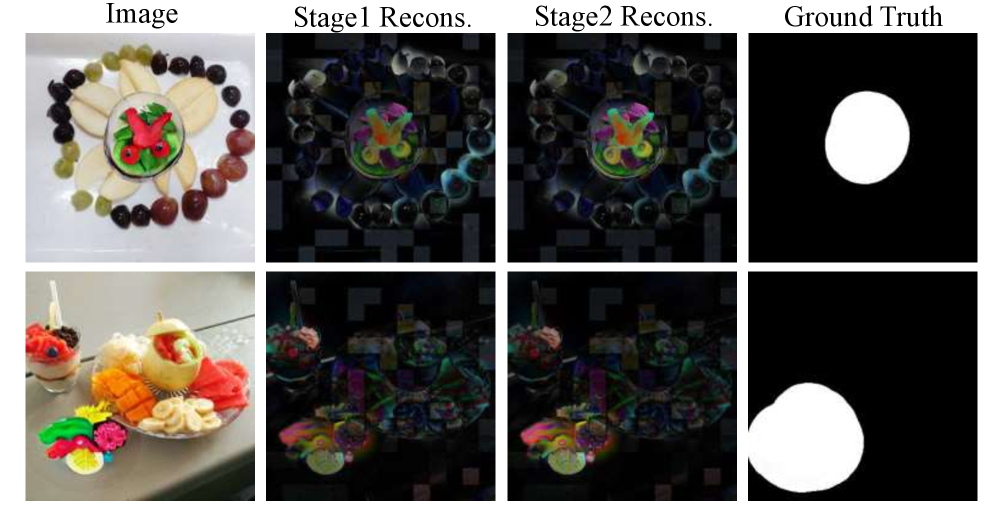

IFA-Net utilizes a two-stage architecture to enhance forgery detection. The initial stage performs a coarse prediction, generating a low-resolution assessment of the image to identify regions likely containing manipulations. This coarse prediction then guides the subsequent targeted reconstruction stage, focusing computational resources on these potentially forged areas. By prioritizing reconstruction efforts on identified anomalies, the network avoids unnecessary processing of authentic regions and increases sensitivity to subtle inconsistencies introduced by forgeries. This staged approach improves efficiency and accuracy compared to reconstructing the entire image at a high resolution.
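A minimal sketch of the coarse-to-fine hand-off, under loud assumptions: here the coarse assessment is obtained by average-pooling a per-pixel error map into non-overlapping tiles and thresholding the tile scores, whereas IFA-Net's actual coarse stage may well be a learned predictor. The function names `coarse_scores` and `guidance_mask`, the tile size, and the threshold are all hypothetical. The resulting pixel-resolution mask marks where a targeted reconstruction stage would spend its effort.

```python
import numpy as np


def coarse_scores(err_map: np.ndarray, block: int) -> np.ndarray:
    """Average a per-pixel error map over non-overlapping block x block tiles."""
    h, w = err_map.shape
    if h % block or w % block:
        raise ValueError("this sketch assumes image dimensions divisible by block")
    # (h, w) -> (tile rows, block, tile cols, block), then average within each tile
    tiles = err_map.reshape(h // block, block, w // block, block)
    return tiles.mean(axis=(1, 3))


def guidance_mask(err_map: np.ndarray, block: int, thresh: float) -> np.ndarray:
    """Flag tiles whose mean error exceeds `thresh` and upsample them to pixel resolution."""
    coarse = coarse_scores(err_map, block) > thresh  # low-resolution suspicion map
    # Nearest-neighbour upsampling: repeat each tile decision `block` times on both axes
    return np.repeat(np.repeat(coarse, block, axis=0), block, axis=1)


if __name__ == "__main__":
    err = np.zeros((8, 8))
    err[1:3, 5:7] = 1.0  # a small high-error patch inside the top-right tile
    mask = guidance_mask(err, block=4, thresh=0.1)
    print(int(mask.sum()))  # 16: the whole 4x4 top-right tile is flagged
```

The coarse mask is deliberately generous: the entire tile is flagged even though only four pixels are anomalous, leaving it to the refinement stage to narrow the region down.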

IFA-Net then refines its output iteratively to make forgery-induced discrepancies more visible. Each iteration reconstructs the input image, and later iterations concentrate on the regions flagged as potentially manipulated. The goal of this targeted reconstruction is not faithful replication but to exacerbate the inconsistencies that forged areas already carry. Repeating the reconstruction on suspected regions amplifies these discrepancies until they stand out, which is what allows the network to localize manipulations whose initial reconstruction error would be too small to distinguish from that of authentic content.
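The iterative loop can be sketched as follows. This is a toy illustration under stated assumptions, not the paper's algorithm: `global_context_reconstruct` is a hypothetical stand-in for the frozen MAE that fills masked pixels with the mean of the visible ones, `init_mask` plays the role of the coarse prediction, and the accumulation and refocusing rules (summing squared error over rounds, keeping pixels whose error exceeds `tau`) are illustrative choices. It shows the shape of the idea: pixels that an authentic-image prior keeps failing to predict gather evidence round after round, while the mask narrows from the coarse region to the forged one.

```python
import numpy as np
from typing import Callable, Tuple

# Hypothetical interface: reconstruct(image, mask) -> image with masked pixels re-predicted
Reconstructor = Callable[[np.ndarray, np.ndarray], np.ndarray]


def global_context_reconstruct(image: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Toy stand-in for a frozen MAE: predict every masked pixel as the mean of the visible ones."""
    out = image.astype(float).copy()
    visible = image[~mask]
    out[mask] = visible.mean() if visible.size else 0.0
    return out


def iterative_amplification(
    image: np.ndarray,
    reconstruct: Reconstructor,
    init_mask: np.ndarray,
    steps: int = 3,
    tau: float = 0.25,
) -> Tuple[np.ndarray, np.ndarray]:
    """Accumulate squared reconstruction error on suspected pixels, refocusing the mask each round."""
    x = image.astype(float)
    mask = init_mask.astype(bool).copy()
    evidence = np.zeros_like(x)
    for _ in range(steps):
        recon = reconstruct(x, mask)
        err = (x - recon) ** 2
        evidence += err * mask  # only currently suspected pixels gain evidence
        mask = err > tau  # keep the pixels the prior still fails to explain
        if not mask.any():  # nothing left to amplify
            break
    return evidence, mask


if __name__ == "__main__":
    img = np.zeros((8, 8))
    img[2:4, 2:4] = 1.0  # "forged" patch on an otherwise uniform background
    coarse = np.zeros((8, 8), dtype=bool)
    coarse[1:5, 1:5] = True  # generous coarse mask around the patch
    evidence, final_mask = iterative_amplification(img, global_context_reconstruct, coarse)
    print(evidence[2:4, 2:4])  # 3.0 everywhere: one unit of error per round
    print(int(final_mask.sum()))  # 4: the mask has shrunk to the forged patch
```

In this toy the per-round error is constant, so evidence grows linearly; with a real MAE the error would change from round to round because the model predicts each masked pixel from different visible context as the mask shrinks.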

Amplification does not create anomalies; it reveals ones already present. Every reconstruction leaves some residual error, and in a high-quality forgery the extra error caused by manipulation can be buried in that residual noise. By concentrating repeated reconstruction on potentially altered regions, IFA-Net raises the signal-to-noise ratio of these inconsistencies so that subsequent analysis can detect them reliably.

Quantifying Authenticity: A Reconstruction Error Metric

IFA-Net uses reconstruction error as its primary indicator of image authenticity. The metric quantifies the difference between an input image and the version reconstructed by the frozen MAE, computed as the mean squared error between the original pixel values $x_i$ and the reconstructed pixel values $\hat{x}_i$ over all $n$ pixels:

$$\mathrm{MSE} = \frac{1}{n} \sum_{i=1}^{n} \left(x_i - \hat{x}_i\right)^2$$

A low error means the reconstruction closely matches the original, which suggests authenticity; a high error signals discrepancies that may stem from manipulation. For localization, the individual terms $(x_i - \hat{x}_i)^2$ matter as much as their average, since they show where in the image the error is concentrated.
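The formula translates directly into a few lines of NumPy. This sketch assumes the original and the reconstruction are arrays of the same shape (grayscale or per-channel values both work, with $n$ then counting every entry) and returns the per-pixel squared-error map alongside the global MSE, since localization needs the map while the scalar only summarizes the whole image. The function name is illustrative.

```python
import numpy as np
from typing import Tuple


def reconstruction_error(x: np.ndarray, x_hat: np.ndarray) -> Tuple[np.ndarray, float]:
    """Return the per-pixel squared error (x_i - x_hat_i)^2 and its mean (the MSE)."""
    if x.shape != x_hat.shape:
        raise ValueError("original and reconstruction must have the same shape")
    err_map = (x.astype(float) - x_hat.astype(float)) ** 2
    mse = float(err_map.mean())  # (1/n) * sum over all n entries
    return err_map, mse


if __name__ == "__main__":
    x = np.array([[0.0, 1.0], [2.0, 3.0]])
    x_hat = np.array([[0.0, 1.0], [2.0, 1.0]])  # only the bottom-right pixel is off
    err_map, mse = reconstruction_error(x, x_hat)
    print(err_map[1, 1], mse)  # 4.0 1.0
```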

Detecting manipulation with reconstruction error rests on an expectation: authentic regions are represented accurately by the reconstruction process and yield low error, while regions altered by splicing, cloning, or other techniques show a larger discrepancy between original and reconstructed pixels. The reason is that the reconstruction model is trained on authentic image data, so content that deviates from the distribution it learned is reconstructed less accurately. Localized areas of high reconstruction error therefore point to possible tampering, whereas consistently low error across the image suggests the image is more likely authentic.
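One simple way to turn a per-pixel error map into a tamper mask is to flag pixels whose error is unusually high relative to the rest of the same image. The sketch below assumes a mean-plus-`k`-standard-deviations rule; the rule, the default `k`, and the function name are hypothetical, and IFA-Net's own decision stage need not work this way.

```python
import numpy as np


def localize_by_error(err_map: np.ndarray, k: float = 2.0) -> np.ndarray:
    """Flag pixels whose reconstruction error exceeds mean + k * std of this image's error map."""
    threshold = err_map.mean() + k * err_map.std()
    return err_map > threshold


if __name__ == "__main__":
    err = np.zeros((10, 10))
    err[4:6, 4:6] = 1.0  # localized high error, e.g. a spliced region
    print(int(localize_by_error(err).sum()))  # 4
    print(int(localize_by_error(np.zeros((10, 10))).sum()))  # 0: uniform low error flags nothing
```

Because the threshold adapts to each image, uniformly low error yields an empty mask, matching the intuition that consistently low error suggests authenticity. The same adaptivity is a weakness: if a forged region covers half the image, it inflates the mean and standard deviation enough to hide itself, one reason fixed statistics like this are only a starting point.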

Real-world images routinely undergo processing that introduces distortions. JPEG compression, a lossy technique, reduces file size by discarding image data, producing block artifacts and an overall loss of fidelity. Gaussian blur, applied during editing or used to approximate defocus, smooths away fine detail. Both distortions increase the discrepancy between an image and its reconstruction, so a reconstruction-error-based detector can misread them as evidence of manipulation even in authentic images. This inflated error makes it necessary to distinguish distortion-induced error from error caused by actual tampering.

IFA-Net gains robustness to distortions such as JPEG compression and Gaussian blur through its targeted reconstruction. Instead of aiming for a pixel-perfect reconstruction of the whole image, the network concentrates on the regions where reconstruction error is most likely to indicate a genuine forgery. Benign distortions of this kind tend to affect the entire image, whereas manipulations are localized, so this selectivity limits their influence on the final authenticity assessment and makes forgery detection more reliable.
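IFA-Net's defense is its targeted reconstruction; the snippet below swaps in a much simpler technique, median subtraction, only to illustrate why error spread evenly across an image should not count as evidence of tampering. It assumes a distortion raises reconstruction error by a roughly constant amount everywhere, which real JPEG compression or blur only approximates (JPEG error, for example, grows in textured regions). The function name and both thresholds are hypothetical.

```python
import numpy as np


def excess_error(err_map: np.ndarray) -> np.ndarray:
    """Subtract the image-wide median error so a uniform, distortion-induced offset cancels."""
    return err_map - np.median(err_map)


if __name__ == "__main__":
    clean = np.full((10, 10), 0.01)  # small residual error on authentic content
    clean[:2, :2] = 0.05  # localized extra error from a manipulation
    distorted = clean + 0.03  # assumed: compression/blur adds a roughly constant offset

    tau = 0.035
    print(int((clean > tau).sum()))  # 4: a raw threshold works on the clean image
    print(int((distorted > tau).sum()))  # 100: but flags the whole distorted image
    print(int((excess_error(distorted) > 0.02).sum()))  # 4: only the manipulated block
```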

Validation and Future Trajectories: Towards Provable Authenticity

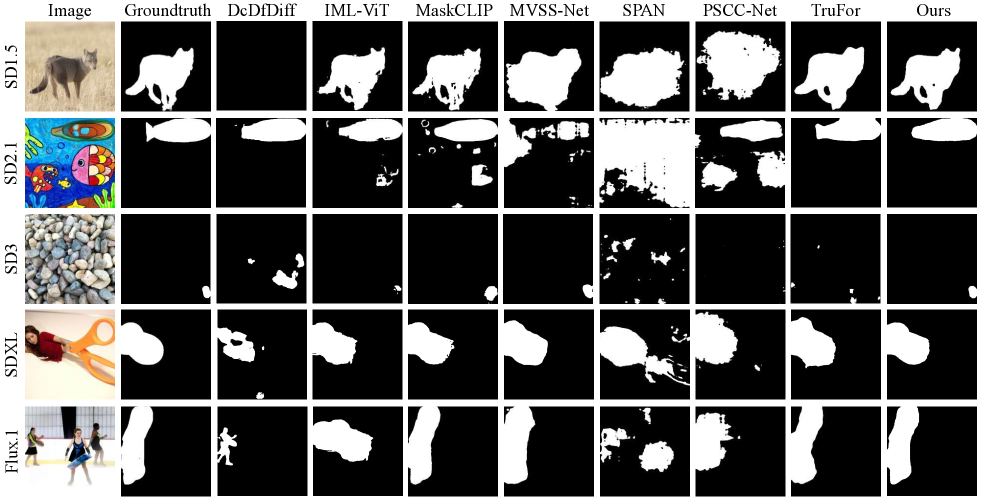

IFA-Net was evaluated on the OpenSDID dataset and on established generative image tampering benchmarks, where it localized forgeries more accurately than the established methods it was compared against.

On established generative image tampering benchmarks, IFA-Net achieves state-of-the-art results, with an average Intersection over Union (IoU) of 0.778 and an F1-score of 0.855. IoU measures the overlap between the predicted forgery regions and the actually manipulated areas, while the F1-score balances precision and recall in identifying those areas. High values on both indicate that the network locates forged regions precisely, a solid foundation for future work in media authentication and forensic analysis.
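Both metrics are straightforward to compute from binary masks. This sketch scores one predicted mask against its ground truth at the pixel level; it assumes nothing about how the paper averages scores across images or datasets, and conventions for empty masks (handled here with a small `eps`) vary between benchmarks. The function name is illustrative.

```python
import numpy as np
from typing import Tuple


def mask_iou_f1(pred: np.ndarray, gt: np.ndarray, eps: float = 1e-12) -> Tuple[float, float]:
    """Pixel-level IoU and F1 between a predicted and a ground-truth forgery mask."""
    pred = pred.astype(bool)
    gt = gt.astype(bool)
    tp = np.logical_and(pred, gt).sum()  # forged pixels correctly flagged
    fp = np.logical_and(pred, ~gt).sum()  # authentic pixels wrongly flagged
    fn = np.logical_and(~pred, gt).sum()  # forged pixels missed
    iou = tp / (tp + fp + fn + eps)  # intersection over union
    f1 = 2 * tp / (2 * tp + fp + fn + eps)  # harmonic mean of precision and recall
    return float(iou), float(f1)


if __name__ == "__main__":
    gt = np.zeros((4, 4), dtype=bool)
    gt[:2, :2] = True  # 4 forged pixels
    pred = np.zeros((4, 4), dtype=bool)
    pred[:2, :3] = True  # 6 flagged pixels: all 4 forged ones plus 2 false positives
    iou, f1 = mask_iou_f1(pred, gt)
    print(round(iou, 3), round(f1, 3))  # 0.667 0.8
```

For a single mask pair, F1 = 2·IoU / (1 + IoU), so the two metrics move together; once scores are averaged over many images, that identity no longer holds exactly.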

On GIT-AVG, IFA-Net raises IoU by 10.8% and F1-score by 8.5%. The IoU gain means predicted forgery regions overlap the ground truth more closely; because F1 balances precision and recall, its gain reflects a better overall trade-off between finding forged pixels and avoiding false positives. Together, these results suggest that the architecture captures subtle artifacts introduced during manipulation, yielding more dependable tampering localization.

On the OpenSDID dataset, IFA-Net reaches an IoU of 0.487 and an F1-score of 0.620. These scores are markedly lower than on the generative tampering benchmarks, which suggests that OpenSDID's forgeries are considerably harder to localize, and they leave clear room for improvement, particularly in discerning subtle manipulations and reducing false positives across diverse datasets. They also serve as a benchmark for further development of IFA-Net and related forensic tools.

Investigations are now directed toward broadening IFA-Net’s capabilities to encompass more sophisticated image manipulations, moving beyond current benchmarks to address techniques like semantic editing and advanced compositing. Simultaneously, research is underway to adapt the network’s architecture for the significantly more challenging domain of video forensics, where temporal inconsistencies and subtle artifacts require robust spatiotemporal analysis. This expansion into video will necessitate addressing issues of computational cost and developing strategies to effectively process sequential data, ultimately aiming to create a unified framework for detecting forgeries across both image and video mediums and bolstering defenses against increasingly realistic synthetic media.

IFA-Net is a significant step toward addressing the escalating challenge of synthetic media detection. As forgery techniques grow more sophisticated, existing detectors struggle to keep pace, leaving digital content open to manipulation and misuse. By discerning the subtle inconsistencies that forged images carry, the approach points toward systems that are both more accurate and more resilient to evolving tampering methods. Its state-of-the-art benchmark results give future research a foundation for tackling complex manipulations and video forensics, and ultimately for a more trustworthy digital environment.

The pursuit of identifying AI-generated forgeries, as detailed in this work with IFA-Net, echoes a fundamental tenet of computational rigor: the aim is not merely detection, but progress toward a provable distinction between authentic and manipulated imagery. David Marr framed vision as the construction of a representation of the world rather than a passive perception of it. In the same spirit, by amplifying subtle inconsistencies through the frozen Masked Autoencoder, this framework does not simply recognize what is forged; it exposes how the forgery deviates from a consistent internal representation of visual authenticity. The efficacy of IFA-Net stems from this focus on internal consistency, a kind of mathematical purity, rather than superficial pattern matching, in keeping with the principle that a robust solution should be fundamentally correct, not merely empirically successful.

Beyond the Amplification

The presented framework, while demonstrably effective in localizing forgeries via iterative manifold deviation, rests upon a curiously pragmatic foundation. The reliance on a frozen Masked Autoencoder, though computationally efficient, implicitly concedes a point: perfect reconstruction of natural images is not the objective, merely the amplification of existing discrepancies. A more rigorous approach would demand a formalization of image authenticity as an invariant – a property demonstrably preserved under a defined set of transformations, and violated by manipulation. This necessitates investigation into information-theoretic bounds on reconstruction error, establishing a principled threshold for anomaly detection rather than empirical tuning.

Furthermore, the current methodology addresses forgery localization, but not necessarily source attribution. While identifying the presence of manipulation is crucial, discerning the generative model employed – be it a specific Diffusion Model variant or a GAN architecture – presents a significant challenge. Future work should explore the integration of forensic watermarking techniques, not as explicit signals, but as latent constraints within the autoencoder’s reconstruction loss. This would impose a structural rigidity, rendering forgeries subtly distinguishable from authentic samples, even those crafted with adversarial intent.

Ultimately, the pursuit of robust forgery detection is not merely an engineering problem, but a mathematical one. The quest for an algorithm that ‘works well’ is insufficient; the objective must be a provably correct system, grounded in the fundamental principles of signal representation and information theory. The current work represents a step in that direction, but the path to a truly elegant solution remains asymptotically distant.

Original article: https://arxiv.org/pdf/2602.18842.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-24 13:34