Nvidia, a name now uttered with the hushed reverence once reserved for botanical rarities, continues its reign over the silicon orchards of artificial intelligence. The Hopper bloomed, Blackwell bore fruit, and now, like a particularly ambitious hybrid, comes Rubin. One might ask, with a cynical lift of the eyebrow, if the market’s current infatuation with all things AI isn’t a touch…precipitous? The billions poured into data centers, a veritable deluge, are beginning to raise questions, and the technology itself, alas, is a fickle mistress, perpetually shifting its requirements. A moving target, indeed, for Nvidia and its competitors, a rather exhausting game of chase, don’t you think?

Rubin, presently in the throes of production, promises shipment later this year. But is this the moment, the precise juncture, to acquire a stake in this particular empire? Or is the crown, so dazzlingly displayed, about to slip from Nvidia’s grasp? The question hangs, a delicate, shimmering thing, like a dewdrop on a spider’s web.

Rubin: A Refinement of Purpose

Nvidia, you see, specializes in GPUs, those tireless engines of high-performance computation, adept at the laborious task of training AI models. A commendable skill, certainly, as OpenAI and its brethren conjure ever more elaborate digital constructs. However, the landscape is altering. The demand is no longer solely for brute force, for the sheer, unrefined power of a drag racer. The focus is shifting, subtly but irrevocably, towards inference: the art of applying learned knowledge, of drawing conclusions, of, dare one say, thinking. Deloitte, with its penchant for pronouncements, predicts that inference compute will soon eclipse training. A curious inversion, wouldn’t you agree?

Consider, if you will, the analogy of an automobile. A GPU’s raw power is akin to a straight-line sprint, a fleeting burst of velocity. But inference is a different breed of computation, a different type of race altogether. It demands a more refined engine, one capable of navigating the winding, treacherous roads of sustained reasoning. Think of AI agents, those digital mimics of sentience, requiring not mere speed, but a delicate balance of efficiency and responsiveness. In this realm, raw power is often less valuable than elegant execution.

Rubin is not merely a single GPU, but a constellation of components (CPUs, GPUs, networking switches, interfaces), all harmoniously integrated into a silicon supercomputer. Nvidia, with a certain cunning, designed Rubin with inference firmly in mind. The Vera Rubin NVL72, a rack-scale system with a rather grand title, promises inference at a mere 10% of the cost per million tokens of the current flagship, the Blackwell GB200 NVL72. A reduction in cost, you see, is always a pleasing thing, especially for those of us who appreciate a well-managed portfolio.

A Momentum Worth Maintaining?

The hyperscalers, those digital behemoths, are feeling the pressure to demonstrate a return on their extravagant AI investments. The improvements in inference efficiency, a rather understated triumph of engineering, will undoubtedly serve as a potent selling point for Rubin. Nvidia announced, with a characteristic lack of fanfare, a $500 billion order backlog through 2026. A considerable sum, wouldn’t you say? And likely to grow, of course, once the latest earnings are revealed on February 25th. Every hyperscaler, it seems, intends to increase its AI spending in 2026. A rather predictable outcome, perhaps, but reassuring nonetheless.

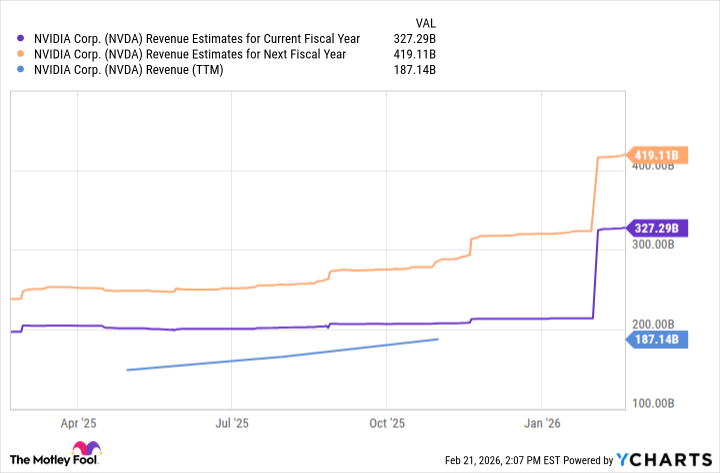

Wall Street analysts, those keen observers of the financial landscape, anticipate substantial growth for Nvidia. Estimates project the company’s trailing-12-month sales of $187 billion to swell to approximately $327 billion this fiscal year, and to roughly $419 billion the following year. Moreover, Nvidia has consistently exceeded Wall Street’s quarterly revenue estimates for the past three years. A rather impressive record, wouldn’t you agree? One wouldn’t be surprised if the actual numbers were even higher. A delightful prospect, for those of us who appreciate a healthy bottom line.
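For the reader who prefers to check the arithmetic, the growth implied by those estimates can be sketched in a few lines of Python. This is a rough illustration only: the three figures are the ones quoted above, while the function name `growth_pct` is an invention for the example, and comparing a trailing-12-month figure with a fiscal-year estimate is an approximation, since the two periods need not line up.

```python
# Figures quoted in the article, in billions of US dollars.
TRAILING_SALES = 187.0       # trailing-12-month sales
ESTIMATE_THIS_YEAR = 327.0   # analyst estimate, this fiscal year
ESTIMATE_NEXT_YEAR = 419.0   # analyst estimate, following fiscal year


def growth_pct(start: float, end: float) -> float:
    """Percentage change from start to end (hypothetical helper)."""
    return (end - start) / start * 100.0


# Assumption: trailing sales are treated as the base for this year's estimate.
growth_this_year = growth_pct(TRAILING_SALES, ESTIMATE_THIS_YEAR)
growth_next_year = growth_pct(ESTIMATE_THIS_YEAR, ESTIMATE_NEXT_YEAR)

print(f"Implied growth, this fiscal year: {growth_this_year:.1f}%")
print(f"Implied growth, following year:   {growth_next_year:.1f}%")
```

On these inputs the first leap works out to roughly three-quarters, the second to a little over a quarter: brisk, but visibly decelerating.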

A Nibble, Perhaps?

Nvidia stock currently trades at nearly 25 times trailing-12-month sales. If the company performs as expected, the valuation becomes considerably more palatable: measured against the following fiscal year’s revenue estimate, shares trade at a mere 11 times sales. It’s worth noting, however, that Nvidia relies on a rather concentrated customer base. Should one or more hyperscalers decide to curtail their AI spending, those estimates could swiftly unravel. A cautionary tale, perhaps, about the perils of over-reliance.
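The relationship between those two multiples is simple division, and a short Python sketch makes it explicit. It assumes the sales figures quoted earlier ($187 billion trailing, $327 billion and $419 billion estimated) and treats "nearly 25 times" as exactly 25, so the implied market value is an upper-bound approximation, not a quoted price. The helper `forward_multiple` is hypothetical, named only for this illustration.

```python
# Figures quoted in the article, in billions of US dollars.
TRAILING_SALES = 187.0
ESTIMATE_THIS_YEAR = 327.0
ESTIMATE_NEXT_YEAR = 419.0

# Assumption: "nearly 25 times sales" is rounded up to exactly 25.
PRICE_TO_TRAILING_SALES = 25.0


def forward_multiple(market_value: float, revenue_estimate: float) -> float:
    """Price-to-sales multiple against a revenue estimate (hypothetical helper)."""
    return market_value / revenue_estimate


# Market value implied by the trailing multiple, not an actual quote.
implied_market_value = PRICE_TO_TRAILING_SALES * TRAILING_SALES

multiple_this_year = forward_multiple(implied_market_value, ESTIMATE_THIS_YEAR)
multiple_next_year = forward_multiple(implied_market_value, ESTIMATE_NEXT_YEAR)

print(f"Implied market value: ${implied_market_value:,.0f} billion")
print(f"Multiple on this year's estimate: {multiple_this_year:.1f}x")
print(f"Multiple on next year's estimate: {multiple_next_year:.1f}x")
```

The sketch also shows how fragile the comfort is: the multiple shrinks only because the denominator grows, so a cut to either revenue estimate pushes it straight back up.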

Even though Nvidia still commands the lion’s share of the installed base of AI data center chips, it must continue to fend off competition, especially if customers place greater emphasis on cost savings. Broadcom, with its strategic partnership with Alphabet on Tensor Processing Units, has already demonstrated a degree of success. A reminder, perhaps, that complacency is a luxury no company can afford.

Nvidia will likely remain a long-term AI winner, due to its entrenched leadership and the growth opportunities that lie ahead. However, a cautious approach is advisable. A full-scale investment might be premature. A nibble, perhaps, a small, considered addition to the portfolio. Or, at the very least, a patient wait until Nvidia unveils its latest outlook following the earnings report. A touch of prudence, you see, is always a virtue.

2026-02-24 00:32