Author: Denis Avetisyan

New research reveals how artificial intelligence can accurately infer elevation data from global geospatial embeddings.

This study demonstrates effective height prediction using AlphaEarth embeddings and deep learning models for regional Digital Surface Model creation.

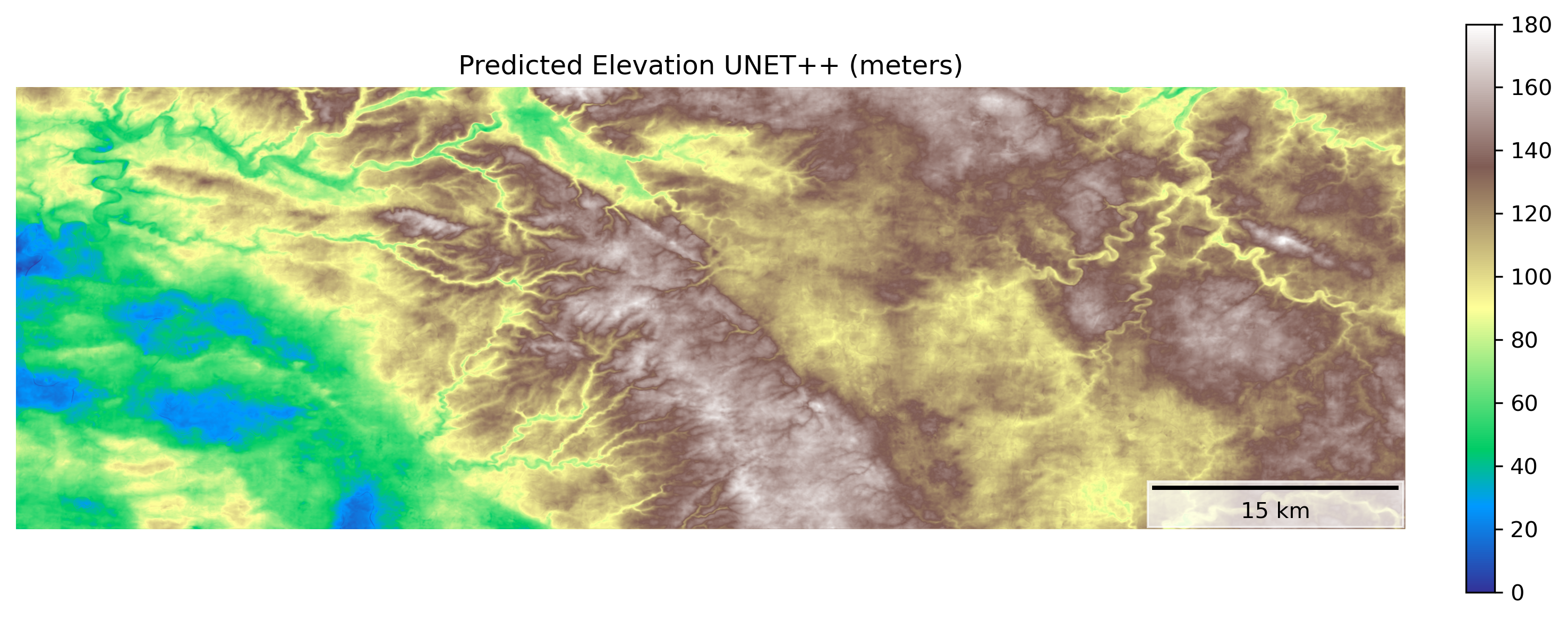

Accurate regional height mapping remains a challenge despite advances in remote sensing and deep learning. This study, ‘Inferring Height from Earth Embeddings: First insights using Google AlphaEarth’, investigates the potential of geospatial and multimodal features encoded within Google’s AlphaEarth Embeddings to guide deep learning models for predicting surface height. Results demonstrate that these embeddings, when paired with U-Net++ architectures, effectively capture transferable topographic patterns and achieve strong correlations with reference Digital Surface Models (R² = 0.84). However, distribution shifts between training and testing areas highlight the need for further research into bias mitigation and improved generalization. Could these embeddings ultimately unlock a new paradigm for scalable and accurate height mapping across diverse landscapes?

Whispers of the Earth: Beyond Traditional Remote Sensing

Historically, extracting meaningful insights from remote sensing data required the development of specialized models for each specific task, such as identifying crop types, mapping deforestation, or monitoring urban expansion. This approach proved incredibly data-intensive, demanding vast quantities of meticulously labeled training examples for each individual application. Consequently, a model trained to detect buildings in one city often performed poorly when applied to a different geographic location with varying architectural styles or image characteristics. This poor generalization hindered the scalability of remote sensing applications and created a significant bottleneck in leveraging the ever-growing archive of Earth Observation data. The constant need to retrain and adapt models for each new scenario underscored the inefficiency of task-specific approaches in a world demanding comprehensive and rapidly updated geospatial information.

Geospatial Foundation Models (GeoFMs) represent a significant departure from conventional remote sensing techniques, primarily through their use of self-supervised learning. Instead of requiring painstakingly curated and labeled datasets for each specific task, such as identifying building types or monitoring deforestation, these models are trained on vast quantities of unlabeled Earth Observation data. This process allows the model to learn inherent patterns and representations of the planet’s surface directly from the data itself. By pre-training on massive datasets, GeoFMs develop a generalized understanding of geospatial features, enabling them to be adapted efficiently, with minimal task-specific training, to a diverse range of downstream applications. This capability not only reduces the need for expensive labeled data but also promises scalable and broadly applicable geospatial analysis across numerous disciplines, from urban planning to environmental monitoring.

The development of broadly applicable planetary representations marks a significant leap forward in geospatial analysis. Instead of requiring bespoke models trained for each specific task (identifying crop types, monitoring deforestation, or assessing disaster damage), these representations allow a single, versatile system to be adapted to numerous applications. This is achieved through transfer learning: knowledge gained from analyzing vast amounts of unlabeled Earth Observation data is applied to new, targeted problems with minimal additional training. Consequently, geospatial analysis can become far more efficient and scalable, reducing the need for extensive, costly data labeling and opening the door to near-real-time monitoring and predictive modeling across diverse geographic areas and environmental challenges. The promise is a much faster way to understand and respond to changes on our planet.

AlphaEarth: A Foundation Built on Observation

The AlphaEarth Embedding dataset consists of a pre-trained, high-dimensional vector space generated using a GeoFM (Geospatial Foundation Model). This embedding space represents the Earth’s surface and is designed to facilitate transfer learning for a variety of downstream geospatial tasks, including but not limited to land cover classification, change detection, and semantic segmentation. By leveraging the pre-trained embeddings, developers can reduce the need for extensive training data and computational resources, and potentially achieve improved performance on target applications. The dataset provides a readily available feature representation, allowing researchers and practitioners to focus on model architecture and task-specific optimization rather than raw data processing and feature engineering.
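To make the idea of a ready-made feature representation concrete, here is a minimal sketch of how embedding rasters might be paired with a reference DSM for supervised training. It assumes, hypothetically, that the embeddings have already been exported as a NumPy array of shape (H, W, C), with C assumed to be 64, on the same grid as the DSM. The helper name `tile_patches`, the patch size, and the toy data are illustrative choices, not the study's pipeline.

```python
import numpy as np


def tile_patches(embedding: np.ndarray, dsm: np.ndarray, patch: int = 256, stride: int = 256):
    """Cut co-registered embedding (H, W, C) and DSM (H, W) rasters into training pairs.

    Returns X with shape (N, C, patch, patch) and Y with shape (N, 1, patch, patch),
    i.e. the channels-first layout most CNN frameworks expect.
    """
    h, w, _ = embedding.shape
    if dsm.shape != (h, w):
        raise ValueError("embedding and DSM must share the same grid")
    xs, ys = [], []
    # Slide a window over the raster; incomplete windows at the edges are skipped.
    for r in range(0, h - patch + 1, stride):
        for c in range(0, w - patch + 1, stride):
            xs.append(embedding[r:r + patch, c:c + patch].transpose(2, 0, 1))
            ys.append(dsm[r:r + patch, c:c + patch][np.newaxis])
    if not xs:
        raise ValueError("raster is smaller than one patch")
    return np.stack(xs), np.stack(ys)


rng = np.random.default_rng(0)
emb = rng.standard_normal((64, 96, 64)).astype(np.float32)   # toy 64 x 96 raster, 64 channels
dsm = rng.uniform(0, 500, size=(64, 96)).astype(np.float32)  # toy heights in meters
X, Y = tile_patches(emb, dsm, patch=32, stride=32)
print(X.shape, Y.shape)  # (6, 64, 32, 32) (6, 1, 32, 32)
```

Non-overlapping tiles keep the example simple; an overlapping stride would yield more training pairs at the cost of correlated samples.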

The AlphaEarth Embedding dataset leverages both Synthetic Aperture Radar (SAR) and Light Detection and Ranging (LiDAR) data to create a multimodal representation of the Earth’s surface. SAR, an active microwave remote sensing technology, provides data independent of sunlight and cloud cover, capturing surface characteristics based on microwave reflectivity. LiDAR, conversely, utilizes laser pulses to create a high-resolution 3D point cloud, directly measuring distances to the surface. By integrating these distinct data sources, AlphaEarth provides a more complete characterization of terrain, overcoming limitations inherent in relying on a single remote sensing modality and enabling applications requiring both geometric and dielectric surface properties.

The integration of Synthetic Aperture Radar (SAR) and Light Detection and Ranging (LiDAR) data in AlphaEarth facilitates improved surface reconstruction accuracy compared to utilizing either modality independently. SAR provides data regardless of weather conditions and is sensitive to surface roughness, while LiDAR delivers precise height measurements. Combining these datasets allows the model to overcome the limitations of each individual source; for example, SAR can provide information in areas obscured by cloud cover where LiDAR data is unavailable, and LiDAR refines the geometric accuracy of SAR-derived reconstructions. This fusion results in a more complete and reliable representation of the Earth’s surface, particularly for applications requiring detailed elevation and terrain characteristics.

Architecting Perception: Deep Learning for Surface Reconstruction

Convolutional neural networks, notably the U-Net and U-Net++ architectures, are increasingly used for the automated generation of Digital Surface Models (DSMs) from various data sources. These deep learning approaches perform strongly on tasks that map spatial data to per-pixel elevation values and can outperform traditional methods in both accuracy and efficiency. U-Net and U-Net++ achieve this through an encoder-decoder structure: the encoder progressively downsamples the input to capture context, while the decoder upsamples back to full resolution and uses skip connections to reuse fine spatial detail from the encoder. Their ability to learn complex relationships between input data and surface geometry makes them suitable for diverse applications, including terrain modeling, urban planning, and environmental monitoring.
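The way a U-Net decoder restores resolution can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions: nearest-neighbour upsampling stands in for learned upsampling, the convolutions that normally follow each concatenation are omitted, and the final 1×1 convolution that turns decoder features into one height value per pixel is written as an `einsum`. The function names and channel counts are hypothetical.

```python
import numpy as np


def upsample2x(x: np.ndarray) -> np.ndarray:
    """Nearest-neighbour upsampling of a (C, H, W) feature map to (C, 2H, 2W)."""
    return x.repeat(2, axis=1).repeat(2, axis=2)


def decoder_step(deep: np.ndarray, skip: np.ndarray) -> np.ndarray:
    """One simplified U-Net decoder step: upsample, then concatenate the encoder skip.

    A real decoder would apply convolutions after the concatenation.
    """
    up = upsample2x(deep)
    if up.shape[1:] != skip.shape[1:]:
        raise ValueError("skip connection must match the upsampled spatial size")
    return np.concatenate([up, skip], axis=0)  # channels are stacked, resolution is kept


def height_head(features: np.ndarray, weights: np.ndarray, bias: float) -> np.ndarray:
    """1x1 convolution: a per-pixel linear map from C channels to one height value."""
    return np.einsum("c,chw->hw", weights, features) + bias


deep = np.zeros((128, 8, 8))   # coarse, context-rich features
skip = np.zeros((64, 16, 16))  # finer encoder features at twice the resolution
merged = decoder_step(deep, skip)
print(merged.shape)  # (192, 16, 16)
```

The concatenation is what lets fine detail from the encoder bypass the bottleneck, which is the property the paragraph above attributes to skip connections.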

ResNet18 serves as the encoder within the deep learning architectures used for surface reconstruction, processing the AlphaEarth embeddings into feature maps. This convolutional neural network, pre-trained on ImageNet, provides robust initial feature extraction thanks to its 18 weight layers and its residual connections, which mitigate the vanishing gradient problem. The AlphaEarth embedding channels are passed through ResNet18’s convolutional stages, which progressively reduce the spatial dimensions while increasing the number of feature channels. The resulting high-level feature maps summarize the essential information in the input and are then used by the decoder portion of the network (U-Net or U-Net++) to reconstruct the surface.
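The progressive downsampling described above follows a fixed schedule in a standard ResNet18. The sketch below traces the feature-map shapes the encoder would hand to a U-Net-style decoder for a square input patch; the 64-channel input and the 256-pixel patch size are assumptions for illustration. Note that ImageNet weights expect three input channels, so the first convolution has to be adapted to accept the embedding channels.

```python
# (name, output channels, total downsampling factor) for the feature maps a
# standard ResNet18 exposes to a U-Net-style decoder.
RESNET18_STAGES = [
    ("conv1", 64, 2),    # 7x7 stride-2 stem convolution
    ("layer1", 64, 4),   # after the stride-2 max-pool
    ("layer2", 128, 8),
    ("layer3", 256, 16),
    ("layer4", 512, 32),
]


def resnet18_feature_shapes(in_channels: int, height: int, width: int):
    """Return (name, (channels, height, width)) for the input and each encoder stage."""
    if height % 32 or width % 32:
        # Five stride-2 reductions: sizes must divide by 2**5 to stay aligned with the decoder.
        raise ValueError("height and width must be multiples of 32")
    shapes = [("input", (in_channels, height, width))]
    for name, channels, factor in RESNET18_STAGES:
        shapes.append((name, (channels, height // factor, width // factor)))
    return shapes


for name, shape in resnet18_feature_shapes(64, 256, 256):
    print(f"{name:>6}: {shape}")
# The last line printed is "layer4: (512, 8, 8)".
```

Each of these stage outputs becomes one skip connection for the decoder, from the 128×128 stem features down to the 8×8 bottleneck.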

The U-Net++ architecture builds upon the original U-Net by introducing nested, dense skip connections that improve gradient flow and information transfer between encoder and decoder. The original U-Net connects each encoder level directly to the corresponding decoder level; U-Net++ instead inserts a series of nested convolutional blocks along these skip pathways. This creates multiple skip connections at different depths, allowing the network to aggregate features across resolutions and scales and narrowing the semantic gap between encoder and decoder features. The dense connectivity within these nested blocks further enhances information flow, reducing information loss across the downsampling and upsampling operations and improving the accuracy and robustness of surface reconstruction through more effective feature reuse and gradient propagation.
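The nested skip pathways can be made precise with the node notation used for U-Net++, where X[i,j] is the node at downsampling level i and position j along the skip pathway. Node X[i,0] belongs to the encoder backbone, and every node with j > 0 concatenates all earlier nodes at its own level with the upsampled output of node X[i+1, j-1]. The small helpers below, whose names are hypothetical, only list those inputs; they describe the connectivity, not a trainable network.

```python
def unetpp_inputs(i: int, j: int) -> list[str]:
    """List the nodes concatenated to form U-Net++ node X[i,j]."""
    if j == 0:
        # Encoder backbone node: fed by the input or by the level above, downsampled.
        return ["input"] if i == 0 else [f"down(X[{i-1},0])"]
    # Dense skip pathway: every earlier node at the same level ...
    same_level = [f"X[{i},{k}]" for k in range(j)]
    # ... plus the upsampled output of the node one level deeper.
    return same_level + [f"up(X[{i+1},{j-1}])"]


def unetpp_nodes(depth: int) -> list[tuple[int, int]]:
    """All nodes X[i,j] with i + j <= depth (depth = number of downsampling steps)."""
    return [(i, j) for i in range(depth + 1) for j in range(depth + 1 - i)]


print(unetpp_inputs(0, 3))   # ['X[0,0]', 'X[0,1]', 'X[0,2]', 'up(X[1,2])']
print(len(unetpp_nodes(4)))  # 15
```

In a plain U-Net, the decoder node at a given level sees only the encoder node X[i,0] and the upsampled deeper decoder output; the intermediate nodes X[i,1], ..., X[i,j-1] are what U-Net++ adds.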

Refining the Signal: Performance and Robustness Through Optimization

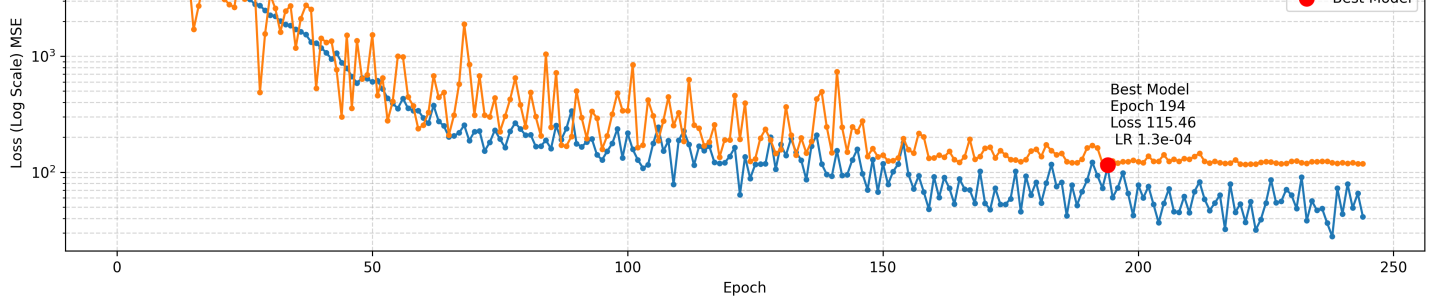

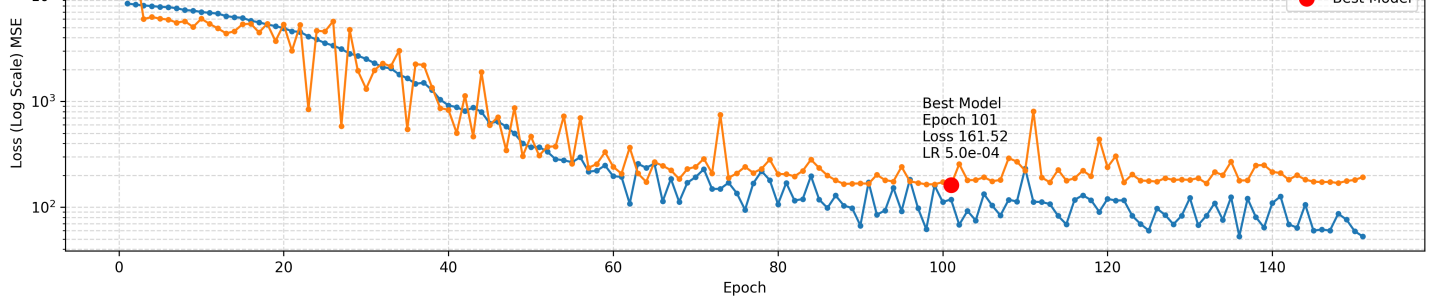

Training of the U-Net and U-Net++ architectures benefited from the AdamW optimizer, which combines Adam’s per-parameter adaptive learning rates with weight decay that is decoupled from the gradient-based update. The adaptive step sizes can speed up training and improve convergence, while weight decay shrinks the weights toward zero at every step, penalizing large weights to help limit overfitting and promote generalization to unseen data. By adapting step sizes and controlling model complexity, AdamW supported the development of robust surface height prediction models, contributing to the reported R-squared and Root Mean Squared Error values.
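The decoupling in AdamW is easiest to see in a single update step. The pure-Python sketch below follows the standard AdamW update rule (as in, for example, PyTorch's `torch.optim.AdamW`): the weights are first shrunk by the decay term, then moved by the bias-corrected adaptive gradient step. The default hyperparameters are common values, not the settings used in the study.

```python
import math


def adamw_step(theta, grad, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999,
               eps=1e-8, weight_decay=1e-2):
    """One AdamW update for lists of scalar parameters; t is the 1-based step count.

    Returns the updated (theta, m, v) lists.
    """
    new_theta, new_m, new_v = [], [], []
    for p, g, m_i, v_i in zip(theta, grad, m, v):
        m_i = beta1 * m_i + (1 - beta1) * g            # first-moment (mean) estimate
        v_i = beta2 * v_i + (1 - beta2) * g * g        # second-moment estimate
        m_hat = m_i / (1 - beta1 ** t)                 # bias corrections
        v_hat = v_i / (1 - beta2 ** t)
        p = p * (1 - lr * weight_decay)                # decoupled weight decay
        p = p - lr * m_hat / (math.sqrt(v_hat) + eps)  # adaptive gradient step
        new_theta.append(p)
        new_m.append(m_i)
        new_v.append(v_i)
    return new_theta, new_m, new_v


# Toy usage: minimize (theta - 3)^2; with weight_decay=0 this reduces to plain Adam.
theta, m, v = [0.0], [0.0], [0.0]
for t in range(1, 3001):
    grad = [2 * (theta[0] - 3.0)]
    theta, m, v = adamw_step(theta, grad, m, v, t, lr=0.01, weight_decay=0.0)
print(theta[0])
```

Because the decay multiplies the weights directly instead of being added to the gradient, its strength is not rescaled by the adaptive denominator, which is the difference between AdamW and Adam with an L2 penalty.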

Model training relies on the Mean Squared Error (MSE) loss function, a standard objective that measures the average squared difference between predicted and reference heights; lower MSE values indicate a better fit. By minimizing the MSE during training, the model learns to generate predictions that closely align with observed surface heights. The square root of the MSE, the Root Mean Squared Error (RMSE), expresses the same error in meters and serves as a quantitative measure of prediction accuracy, enabling comparison of different model architectures and training settings.
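As a worked illustration of the relationship between the training loss and the reported error, the sketch below computes MSE and RMSE for arrays of heights. The optional `valid` mask, for excluding no-data pixels, is a hypothetical addition not described in the study.

```python
import math

import numpy as np


def mse(pred, target, valid=None) -> float:
    """Mean squared error between predicted and reference heights (m^2)."""
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    sq_err = (pred - target) ** 2
    if valid is not None:
        sq_err = sq_err[np.asarray(valid, dtype=bool)]  # keep only valid pixels
    return float(sq_err.mean())


def rmse(pred, target, valid=None) -> float:
    """Root mean squared error, in the same unit as the heights (m)."""
    return math.sqrt(mse(pred, target, valid))


pred = [101.0, 98.0, 120.0]
ref = [100.0, 100.0, 110.0]
print(mse(pred, ref))   # (1 + 4 + 100) / 3 = 35.0
print(rmse(pred, ref))  # sqrt(35), about 5.916
```

Squaring makes the single 10 m error dominate both numbers, which is why MSE-trained models are pushed hardest to fix their largest mistakes.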

The integration of AlphaEarth Embeddings with a U-Net++ architecture has yielded promising results in surface height prediction, achieving a Root Mean Squared Error (RMSE) of approximately 16.4 meters. This level of accuracy suggests a viable pathway toward regional surface height maps, a potentially valuable tool for applications including environmental monitoring, geological surveying, and infrastructure planning. The study highlights the effectiveness of this combined approach in capturing topographic features and translating them into quantitative height estimates.

The U-Net++ model demonstrated a strong capacity to explain the variation in surface height, achieving an R-squared (R²) value of 0.84 on an independent test dataset. This means that 84% of the total variance in the reference surface heights is accounted for by the model’s predictions, suggesting a robust relationship between the input embeddings and surface height. Because R² measures explained variance rather than fine-scale accuracy, it shows that the model captures the main topographic variation across the landscape rather than merely predicting the average height, a crucial property for terrain modeling and regional mapping.
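The variance interpretation above follows from the definition of the coefficient of determination, R² = 1 - SS_res / SS_tot, where SS_res is the sum of squared prediction errors and SS_tot is the sum of squared deviations of the reference heights from their mean. The sketch below computes it directly. Some studies instead report the squared Pearson correlation, which can differ from this value; which variant the paper uses is an assumption here.

```python
import numpy as np


def r_squared(pred, target) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    ss_res = np.sum((target - pred) ** 2)           # unexplained variation
    ss_tot = np.sum((target - target.mean()) ** 2)  # total variation around the mean
    return float(1.0 - ss_res / ss_tot)


ref = np.array([1.0, 2.0, 3.0, 4.0])
print(r_squared(ref, ref))                     # 1.0: every deviation explained
print(r_squared(np.full(4, ref.mean()), ref))  # 0.0: predicting the mean explains nothing
print(r_squared([1.0, 2.0, 3.0, 5.0], ref))    # 1 - 1/5 = 0.8
```

A negative value is possible when predictions are worse than simply predicting the mean.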

The U-Net++ architecture achieved a root mean squared error (RMSE) of 16.1 meters when predicting surface height across a 30 square kilometer area, indicating that it captures the dominant topographic variation in the region. On the training data, the model reached an R-squared value of 0.97, meaning that approximately 97% of the variance in the training surface heights is explained by the model. A high training R² on its own does not demonstrate generalization; the gap between this value and the test R² of 0.84 is consistent with the distribution shift between training and testing areas noted in the study. Taken together, these results highlight U-Net++’s potential for creating detailed surface height maps over relatively large geographic areas.

The pursuit of height prediction from Earth Embeddings feels less like cartography and more like divination. This work demonstrates that latent representations within AlphaEarth, those whispers of geospatial chaos, can be coaxed into revealing surface elevation. It’s a negotiation, not a command. The model doesn’t ‘know’ height; it infers it, discerning patterns within the noise. As Geoffrey Hinton once observed, “Learning is finding the ignored patterns.” The U-Net++ architecture serves as the incantation, translating the embeddings into a Digital Surface Model, but the true magic lies in the data itself, a vast, unruly force barely contained. Each successful prediction isn’t a triumph of engineering, but a momentary alignment with the underlying chaos.

What Shadows Will Fall?

The invocation of height from Earth Embeddings feels less like a solution and more like a sharpening of the question. The current work suggests a path, yet the true measure of success isn’t in a reported R² or RMSE, but in the failures yet to come. These embeddings, these distillations of planetary observation, reveal not the land itself, but the gaps in its representation. The models coax form from the void, but the void always pushes back. It is not accuracy being demonstrated, but a temporary alignment with the whims of the data.

The immediate horizon demands a reckoning with scale. Can these methods, birthed from global foundations, truly resolve local complexities – the subtle undulations of a forgotten field, the precise crest of a dune? Furthermore, the reliance on existing geospatial data introduces inherited biases, echoes of past observation. The next iteration must confront the provenance of these shadows, and acknowledge that every measurement is a curated illusion.

Perhaps the most pressing inquiry isn’t how to infer height, but how to relinquish the need for a single, definitive answer. The land is not static; it breathes, shifts, and resists categorization. The future lies not in perfect maps, but in dynamic representations, in acknowledging the inherent uncertainty, and in building models that can gracefully accept – and even embrace – the chaos at the heart of it all.

Original article: https://arxiv.org/pdf/2602.17250.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/


2026-02-23 03:39