Author: Denis Avetisyan

A new machine learning framework dramatically speeds up simulations of how magnetism evolves in metals, opening doors to modeling complex magnetic phenomena.

This work introduces a scalable machine learning force-field approach for simulating the dynamical behavior of itinerant magnets, demonstrating improved efficiency for modeling spin textures and phase separation.

Modeling the complex, many-body interactions governing spin dynamics in itinerant magnets remains computationally challenging, hindering investigations of emergent phenomena. This work, ‘Machine-learning force-field models for dynamical simulations of metallic magnets’, introduces a scalable machine learning framework to efficiently and accurately simulate these systems, leveraging symmetry-preserving descriptors within a deep neural network potential. Demonstrations using the double-exchange model reveal novel nonequilibrium behavior, including anomalous coarsening and frozen phase separation, unattainable with conventional methods. Could this approach unlock a deeper understanding of magnetic materials and accelerate the design of advanced spintronic devices?

Unraveling the Complexities of Localized Spin Interactions

The advancement of spintronic devices, which exploit electron spin rather than charge, hinges on a nuanced understanding of how introducing “holes” (missing electrons) affects the behavior of localized spins within a material. These holes, acting as positive charge carriers, fundamentally alter the magnetic interactions between neighboring spins, potentially inducing novel magnetic phases and functionalities. Precisely controlling this interplay is paramount: with too few holes the spins remain largely unaffected, while excessive doping can lead to magnetic disorder and diminished performance. Researchers are actively investigating how different doping levels influence the stability of specific magnetic textures, such as skyrmions, which hold promise for high-density data storage and low-power computing. Ultimately, mastering the connection between hole doping and localized spin dynamics represents a critical pathway toward realizing the full potential of spintronics and creating the next generation of magnetic devices.

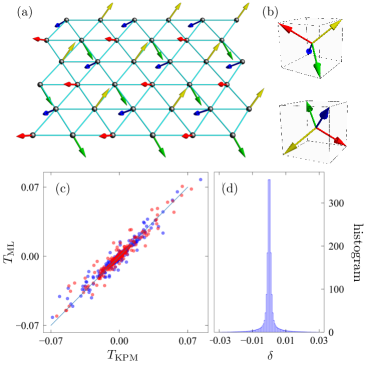

Conventional computational approaches to magnetism often falter when confronted with materials exhibiting strong electron correlations, specifically those hosting noncoplanar magnetic orders. These methods, frequently reliant on perturbative techniques or simplified exchange interactions, struggle to capture the intricate interplay between electron spin, charge, and orbital degrees of freedom that define these systems. The resulting inaccuracies hinder reliable predictions of magnetic ground states and dynamical properties. Noncoplanar orders, in which magnetic moments align in complex, three-dimensional arrangements, pose a particular challenge, as their description requires accounting for competing interactions and frustrated geometries that lie beyond the reach of standard approximations. Consequently, a need exists for more sophisticated theoretical frameworks, such as dynamical mean-field theory or quantum Monte Carlo simulations, capable of accurately modeling these highly correlated electron systems and unlocking their potential for advanced spintronic devices.

The ability to accurately predict and control correlation-induced freezing represents a significant advancement in materials science. This phenomenon, arising from the intricate interplay of electron interactions within a material, transitions the system into a state where magnetic moments become locked in a disordered, yet stable, configuration. Capturing these electronic correlations (how electrons influence each other’s behavior) is not merely an academic exercise; it’s the key to engineering materials with tailored magnetic properties. Researchers find that conventional theoretical models often fall short in describing these complex interactions, leading to inaccurate predictions of freezing temperatures and magnetic configurations. Successfully modeling these correlations allows for the design of novel spintronic devices, potentially unlocking breakthroughs in data storage and processing, and providing a pathway towards materials exhibiting entirely new magnetic phases and functionalities.

A Pragmatic Machine Learning Framework for Itinerant Magnets

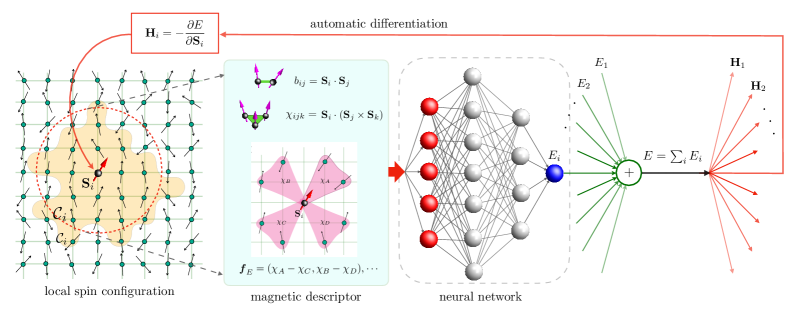

Conventional modeling of itinerant magnets relies on computationally intensive methods like Density Functional Theory (DFT) and exact diagonalization, limiting the accessible system sizes and timescales for simulation. To address these limitations, we have developed a Machine Learning (ML) force-field framework. This approach utilizes Neural Networks (NN) to directly predict local exchange fields – the interactions governing magnetic behavior – based on the electronic structure. By training the NN on data generated from accurate, but costly, first-principles calculations, the framework achieves a substantial reduction in computational expense while maintaining a high degree of accuracy, enabling simulations of larger systems and longer time scales than previously feasible.

Training data for the Neural Networks consist of exchange fields pre-calculated with accurate but expensive first-principles methods, typically Density Functional Theory (DFT), applied to a representative set of atomic configurations. Once trained, the Neural Network acts as a surrogate model that predicts local exchange fields at a small fraction of that cost, so magnetic interactions can be evaluated for larger systems and longer timescales than direct first-principles methods allow.

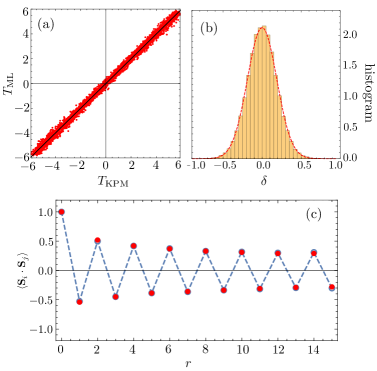

The developed machine learning framework facilitates large-scale simulations of the One-Band s-d Exchange Model by predicting local exchange interactions with significantly reduced computational cost. This efficiency enables investigations into complex magnetic states inaccessible to traditional methods like exact diagonalization. Benchmarking demonstrates a nearly 1000x speedup compared to exact diagonalization for equivalent system sizes, allowing for simulations of larger lattices and longer timescales, ultimately providing increased statistical accuracy and facilitating the study of finite-temperature effects and dynamic magnetic phenomena.
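To make the idea of a reference calculation concrete, here is a minimal sketch of how exact diagonalization could produce the local exchange fields that such a network is trained on. Everything specific in it is an assumption for illustration, not the paper's setup: a periodic 1D chain, the parameter values `t` and `J`, the sign convention of the coupling, zero temperature, and the function names. The field on each spin is taken as the negative derivative of the electronic energy with respect to that spin, which by the Hellmann-Feynman theorem equals `J` times the local electron spin expectation value.

```python
import numpy as np

# Pauli matrices, indexed as SIGMA[c] for c = x, y, z
SIGMA = np.array([
    [[0, 1], [1, 0]],
    [[0, -1j], [1j, 0]],
    [[1, 0], [0, -1]],
], dtype=complex)


def sd_hamiltonian(spins, t=1.0, J=3.0):
    """Build the 2N x 2N one-band s-d Hamiltonian on a periodic chain (toy geometry)."""
    n = len(spins)
    H = np.zeros((2 * n, 2 * n), dtype=complex)
    for i in range(n):
        j = (i + 1) % n
        a, b = slice(2 * i, 2 * i + 2), slice(2 * j, 2 * j + 2)
        # Spin-conserving nearest-neighbor hopping and its Hermitian conjugate
        H[a, b] += -t * np.eye(2)
        H[b, a] += -t * np.eye(2)
        # Local coupling of the electron spin to the classical spin S_i
        H[a, a] += -J * np.einsum("c,cxy->xy", spins[i], SIGMA)
    return H


def energy_and_fields(spins, n_electrons, t=1.0, J=3.0):
    """Zero-temperature energy and local fields H_i = -dE/dS_i."""
    evals, evecs = np.linalg.eigh(sd_hamiltonian(spins, t, J))
    occupied = evecs[:, :n_electrons]          # fill the lowest levels
    energy = evals[:n_electrons].sum()
    rho = occupied @ occupied.conj().T         # single-particle density matrix
    fields = np.empty_like(spins)
    for i in range(len(spins)):
        block = rho[2 * i:2 * i + 2, 2 * i:2 * i + 2]
        # Hellmann-Feynman: H_i = J * <sigma>_i = J * Tr(rho_ii sigma)
        fields[i] = J * np.real(np.einsum("xy,cyx->c", block, SIGMA))
    return energy, fields


rng = np.random.default_rng(0)
spins = rng.normal(size=(6, 3))
spins /= np.linalg.norm(spins, axis=1, keepdims=True)
# One electron per site fills the lower band, so the spectrum is gapped here
energy, fields = energy_and_fields(spins, n_electrons=6)
```

The cost of this reference step is dominated by the diagonalization, which grows cubically with the number of sites. That scaling is the motivation for replacing it with a learned model that only looks at a finite neighborhood of each spin.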

Symmetry-Preserving Descriptors: A Foundation for Accurate Predictions

The foundation of our predictive framework lies in Symmetry-Preserving Magnetic Descriptors generated through the application of Group Theory and Irreducible Representations (IRs). These descriptors are not simply numerical values, but rather mathematical constructs designed to represent the magnetic environment of a material in a manner that respects its underlying symmetries. Specifically, Group Theory provides the mathematical tools to identify and characterize these symmetries, while IRs offer a means to decompose the complex magnetic interactions into invariant components. This approach ensures that the descriptors transform predictably under symmetry operations, leading to more robust and physically meaningful predictions; the use of IRs effectively reduces the dimensionality of the problem by focusing on the essential, symmetry-adapted features of the magnetic environment.

The descriptors utilized in this framework are designed to remain invariant under both lattice point-group and spin-rotation operations, and they are built from bond variables and scalar chirality. A bond variable is the inner product of a pair of neighboring spins, while the scalar chirality is the triple product of three spins and measures the handedness of their arrangement. Both quantities are unchanged when all spins are rotated together, and combining them into symmetry-adapted form removes the dependence on how the lattice is oriented. This construction eliminates spurious variations due to symmetry. Consequently, the model focuses on physically relevant features, improving the accuracy and generalizability of predictions concerning local magnetic environments and providing a more realistic representation of the system’s behavior.
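The spin-rotation invariance can be checked numerically. The sketch below assumes the standard definitions, a bond variable as the dot product of two spins and a scalar chirality as the triple product of three spins, and uses hypothetical function names; the choice of which neighbor pairs enter the chirality is also an assumption made for brevity.

```python
import numpy as np


def random_unit_vectors(n, rng):
    """Draw n random classical spins of unit length."""
    v = rng.normal(size=(n, 3))
    return v / np.linalg.norm(v, axis=1, keepdims=True)


def random_rotation(rng):
    """Random proper rotation (det = +1) from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))
    if np.linalg.det(q) < 0:
        q[:, 0] = -q[:, 0]
    return q


def bond_variables(center, neighbors):
    """b_j = S_i . S_j for the central spin i and each neighbor j."""
    return neighbors @ center


def scalar_chiralities(center, neighbors):
    """chi_jk = S_i . (S_j x S_k) for consecutive neighbor pairs (j, k)."""
    following = np.roll(neighbors, -1, axis=0)
    return np.cross(neighbors, following) @ center


rng = np.random.default_rng(1)
center = random_unit_vectors(1, rng)[0]
neighbors = random_unit_vectors(4, rng)

# Rotate every spin by the same rotation matrix R
R = random_rotation(rng)
center_rot = R @ center
neighbors_rot = neighbors @ R.T

same_bonds = np.allclose(bond_variables(center, neighbors),
                         bond_variables(center_rot, neighbors_rot))
same_chirality = np.allclose(scalar_chiralities(center, neighbors),
                             scalar_chiralities(center_rot, neighbors_rot))
```

Note that the scalar chirality changes sign under an improper rotation (a reflection of spin space), which is exactly what makes it sensitive to handedness, while the bond variables do not.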

Incorporating the E(3) Euclidean Group and Power Spectrum into the magnetic descriptors provides increased refinement for machine learning model predictions of local magnetic environments. The E(3) group accounts for translations and rotations, ensuring invariance to spatial transformations, while the Power Spectrum captures long-range correlations within the magnetic structure. This combination demonstrably improves prediction accuracy, resulting in a mean squared error of 1.64e-5 per spin – a metric calculated by comparing predicted magnetic moments to those obtained from reference data. This level of precision is crucial for applications requiring detailed understanding of magnetic interactions at the atomic scale.
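A toy version of the power-spectrum idea, under simplifying assumptions that are not from the original article: only the cyclic group C4 of a square lattice is considered (no reflections), and the input is a hypothetical list of four bond variables ordered counterclockwise around a site. For a cyclic group, projecting onto irreducible representations is a discrete Fourier transform, and the squared magnitudes of the coefficients do not change when the neighborhood is rotated by 90 degrees.

```python
import numpy as np


def c4_power_spectrum(bond_values):
    """Squared magnitudes of the C4 irreducible-representation coefficients.

    bond_values: four numbers attached to the neighbors of a site, listed in
    counterclockwise order, so that a 90-degree rotation is a cyclic shift.
    """
    values = np.asarray(bond_values, dtype=float)
    coeffs = np.fft.fft(values) / len(values)
    return np.abs(coeffs) ** 2


bonds = np.array([0.9, -0.2, 0.4, 0.1])
rotated = np.roll(bonds, 1)  # the same environment after a 90-degree lattice rotation

spectrum = c4_power_spectrum(bonds)
spectrum_rotated = c4_power_spectrum(rotated)
is_invariant = np.allclose(spectrum, spectrum_rotated)
```

The power spectrum discards the relative phases between different representations, so on its own it cannot distinguish every pair of inequivalent environments. Descriptors of this kind are therefore usually supplemented with additional invariants that retain some phase information.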

Unveiling Dynamic Phenomena Through Simulation

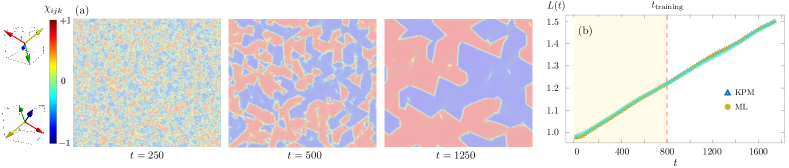

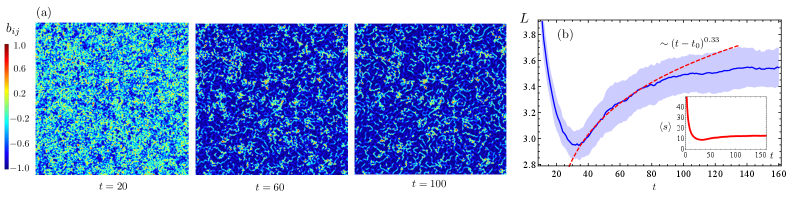

Dynamic simulations, leveraging a novel machine learning force-field framework, were conducted to investigate the temporal evolution of magnetic states within the material. Employing a Thermal Quench method – rapidly cooling the system from a high-temperature disordered state – researchers were able to observe how magnetic order emerges and propagates over time. This computational approach allows for detailed tracking of individual magnetic moments and their interactions, providing insights into the underlying mechanisms governing the system’s magnetic behavior. The simulations accurately model the energy landscape, enabling the study of complex phenomena inaccessible through traditional experimental techniques, and ultimately offering a pathway to predict and control magnetic properties at the nanoscale.
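A quench of this kind can be sketched as damped Landau-Lifshitz dynamics started from random spins. The code below is an illustration under stated assumptions, not the authors' implementation: the local field comes from a nearest-neighbor ferromagnetic chain that stands in for the trained network, thermal noise is omitted (a quench to zero temperature), and the Heun integrator, step size, and damping value are arbitrary choices.

```python
import numpy as np


def local_fields(spins, coupling=1.0):
    """Stand-in for the ML model: H_i = J (S_{i-1} + S_{i+1}) on a periodic chain."""
    return coupling * (np.roll(spins, 1, axis=0) + np.roll(spins, -1, axis=0))


def energy(spins, coupling=1.0):
    """Energy of the stand-in model, E = -J sum_i S_i . S_{i+1}."""
    return -coupling * np.sum(spins * np.roll(spins, -1, axis=0))


def ll_rhs(spins, fields, damping):
    """Landau-Lifshitz right-hand side: precession plus damping toward the local field."""
    precession = -np.cross(spins, fields)
    relaxation = -damping * np.cross(spins, np.cross(spins, fields))
    return precession + relaxation


def heun_step(spins, dt, damping, field_fn):
    """One predictor-corrector step, renormalizing spins to unit length."""
    k1 = ll_rhs(spins, field_fn(spins), damping)
    predictor = spins + dt * k1
    predictor /= np.linalg.norm(predictor, axis=1, keepdims=True)
    k2 = ll_rhs(predictor, field_fn(predictor), damping)
    updated = spins + 0.5 * dt * (k1 + k2)
    return updated / np.linalg.norm(updated, axis=1, keepdims=True)


def quench(n_sites=32, n_steps=1000, dt=0.02, damping=0.1, seed=0):
    """Start from random spins (infinite temperature) and relax without noise."""
    rng = np.random.default_rng(seed)
    spins = rng.normal(size=(n_sites, 3))
    spins /= np.linalg.norm(spins, axis=1, keepdims=True)
    energies = [energy(spins)]
    for _ in range(n_steps):
        spins = heun_step(spins, dt, damping, local_fields)
        energies.append(energy(spins))
    return spins, np.array(energies)


final_spins, energies = quench()
```

In a force-field workflow, `local_fields` is the only function that would change: the trained model would be called there at every step, which is why its evaluation cost sets the reachable system size and simulation time.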

Dynamic simulations demonstrate that scalar chirality, a measure of the three-dimensional arrangement of spins, plays a critical role in governing the evolution of magnetic states and ultimately leads to correlated freezing. This phenomenon isn’t simply a slowing down of individual spins, but rather a cooperative transition where spins become locked in specific orientations due to their chiral interactions with neighbors. The simulations reveal that regions of high scalar chirality act as nucleation points for this freezing, propagating outward and establishing long-range magnetic order. This correlated behavior suggests that the system’s magnetic properties aren’t determined by individual spin characteristics, but emerge from the collective interplay dictated by this fundamental geometric property, offering a pathway to understand and potentially control complex magnetic phenomena.

The developed machine learning force-field framework demonstrates a crucial ability to model the Double-Exchange Mechanism, a fundamental interaction governing magnetic properties in materials. This capability establishes a direct connection between hole doping – the introduction of missing electrons – and the resulting magnetic behavior observed in simulations. Beyond enhanced physical accuracy, the framework offers a significant computational advantage, achieving a five-fold increase in speed compared to traditional simulations utilizing the Kernel Polynomial Method. This acceleration allows for more extensive explorations of complex magnetic systems and facilitates the study of dynamic phenomena at previously inaccessible timescales, ultimately promising advancements in materials design and discovery.
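For reference, the double-exchange mechanism is usually discussed starting from a one-band s-d Hamiltonian of the standard textbook form below. The symbols and the sign convention are assumptions for illustration and may differ from those in the original paper: $t$ is the nearest-neighbor hopping, $J$ the coupling between electron spin and the classical local spin $\mathbf{S}_i$, and $\theta_{ij}$ the angle between neighboring spins.

```latex
\begin{aligned}
H &= -t \sum_{\langle ij \rangle, \alpha}
      \left( c^{\dagger}_{i\alpha} c_{j\alpha} + \mathrm{h.c.} \right)
     - J \sum_{i, \alpha\beta} \mathbf{S}_i \cdot
       c^{\dagger}_{i\alpha} \boldsymbol{\sigma}_{\alpha\beta} c_{i\beta}, \\
\mathbf{H}_i &= -\frac{\partial E}{\partial \mathbf{S}_i}, \\
\left| t^{\mathrm{eff}}_{ij} \right| &= t \cos\frac{\theta_{ij}}{2}
      \qquad (J \gg t).
\end{aligned}
```

The last line is the strong-coupling limit: carriers hop most easily between parallel spins, so doped holes lower their kinetic energy by aligning the spins around them. This is the link between hole doping and magnetic behavior that the paragraph above refers to, and $\mathbf{H}_i$ is the local exchange field that a force-field model would be trained to predict.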

Towards a Rational Design Paradigm for Spintronic Materials

A novel machine learning force-field framework offers a powerful new avenue for investigating itinerant magnet materials, a class of compounds where magnetism arises from the behavior of conducting electrons. Traditional computational methods, such as density functional theory, are often computationally prohibitive when applied to the extensive compositional and structural search space required for materials discovery. This framework bypasses these limitations by learning the complex relationships between atomic structure and energy from a relatively small set of accurate calculations, enabling rapid and precise predictions of material properties. The resulting speed-up allows researchers to efficiently explore a vast landscape of potential materials, systematically varying parameters like chemical composition and crystal structure to identify candidates with desired magnetic characteristics. Such screening is crucial for advancing spintronics and magnetic data storage technologies.

The ability to computationally screen and predict magnetic properties in materials hinges on a nuanced understanding of how subtle structural and compositional changes impact their behavior. Researchers are leveraging this principle by systematically altering key parameters – such as the concentration of ‘holes’ (missing electrons) and the underlying crystal lattice arrangement – within materials models. This controlled variation allows for the prediction of how these changes influence magnetic characteristics like magnetization strength, Curie temperature, and magnetic anisotropy. Consequently, it becomes possible to identify promising materials before physical synthesis, effectively tailoring magnetic properties to meet the specific demands of advanced technologies. This predictive capability accelerates the discovery of novel spintronic materials with enhanced performance and opens avenues for designing devices with customized functionalities.

The predictive power of this machine learning framework extends beyond material discovery, offering a pathway towards the deliberate engineering of next-generation spintronic devices. By accurately correlating material parameters with magnetic behavior, researchers can move past trial-and-error methods and instead design materials optimized for specific applications, such as high-density data storage, low-power computing, and advanced sensors. This rational design process promises to yield devices with demonstrably enhanced performance characteristics – increased speed, reduced energy consumption, and improved stability – ultimately accelerating innovation in fields reliant on manipulating electron spin. The ability to tailor material properties at a fundamental level represents a significant leap forward, potentially unlocking functionalities previously considered unattainable in spintronic technologies.

The pursuit of accurate material modeling, as demonstrated in this work concerning itinerant magnets, often reveals the limitations of any single predictive framework. The researchers successfully integrated machine learning with established physical models, acknowledging that computational efficiency gains aren’t achieved through perfect prediction, but through iterative refinement. This echoes Jean-Jacques Rousseau’s sentiment: “The more we are acquainted with ourselves, the more we are ashamed.” Data, in this case, isn’t the goal; it’s a mirror of human error. The model’s ability to capture complex spin textures and phase separation isn’t about arriving at a final truth, but about meticulously charting the boundaries of what remains uncertain. Even what we can’t measure still matters; it’s just harder to model.

Where Do We Go From Here?

The demonstrated acceleration in simulating itinerant magnetism is, predictably, not the destination. It is merely a faster path around the same fundamental obstacles. The true limitation isn’t computational speed, but the fidelity of the underlying representation. These machine-learned force fields, however elegant, remain approximations – statistically smoothed interpolations between data points. The real test lies not in reproducing known behavior, but in predicting emergent phenomena outside the training manifold. The framework’s scalability is, therefore, a double-edged sword; it allows for exploration of larger systems, but also amplifies the consequences of systematic error.

Future work must prioritize rigorous uncertainty quantification. It is insufficient to report accuracy metrics on the training set; the relevant figure is the probability that the model’s predictions diverge catastrophically from reality in unexplored parameter space. Symmetry-preserving descriptors are a promising step, but even these are ultimately imperfect proxies for the true physics. A more fundamental challenge lies in developing descriptors that are informative about potential errors, not just predictive of ground state properties.

Perhaps the most pressing need is a shift in evaluation criteria. Success should not be measured by how closely a simulation resembles existing experiments, but by its ability to guide new ones. A model that predicts what isn’t there is, after all, more valuable than one that simply confirms what is already known. The goal is not to build a perfect mirror of nature, but a reliable compass for navigating its uncertainties.

Original article: https://arxiv.org/pdf/2602.18213.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/


2026-02-24 03:20