Author: Denis Avetisyan

A new study demonstrates the potential of deep learning to dramatically improve the detection of gravitational waves from merging black holes and neutron stars.

Convolutional neural networks applied to time-frequency maps generated via matched filtering offer a promising path to faster and more efficient gravitational wave data analysis.

The increasing complexity of gravitational wave data analysis, coupled with the limitations of traditional matched filtering, presents a substantial computational challenge for next-generation detectors. This is addressed in ‘Deep Learning Search for Gravitational Waves from Compact Binary Coalescence’, which investigates a hybrid approach leveraging Convolutional Neural Networks to efficiently detect signals from time-frequency maps generated by matched filtering. The study demonstrates comparable detection efficiency to standard methods, while offering a potentially more resource-efficient pipeline, particularly for signals with parameters not fully captured by existing template banks. Could deep learning-assisted searches provide a sustainable pathway for gravitational wave data analysis in the expanding era of multi-messenger astronomy?

Listening to the Universe: A New Window on Cosmic Events

The universe has historically been observed through electromagnetic radiation – light, radio waves, X-rays – but the recent, direct detection of gravitational waves offers a completely novel means of gathering cosmic information.

The detection of gravitational waves, subtle distortions in the fabric of spacetime, demands extraordinarily sensitive instruments and intricate analytical methods. Predicted by Einstein’s theory of general relativity, these ripples propagate across vast cosmic distances, arriving at Earth as incredibly faint signals. Detecting them requires detectors like LIGO and Virgo – massive interferometers capable of measuring changes in length smaller than a proton. However, even with such precision, isolating genuine gravitational wave signals from terrestrial and astrophysical noise is a monumental task. Sophisticated data analysis techniques, including matched filtering and advanced statistical modeling, are essential to tease out the weak signals, identify their sources – such as merging black holes and neutron stars – and confirm their origin from beyond our solar system. This complex interplay between advanced instrumentation and computational power is continuously refined, pushing the boundaries of observational astrophysics and offering unprecedented insights into the universe.

Detecting gravitational waves presents a considerable technical hurdle, as the signals are extraordinarily faint and easily obscured by a multitude of interfering factors. Current detectors, like LIGO and Virgo, are sensitive enough to register distortions smaller than a proton’s width, but this very sensitivity makes them susceptible to terrestrial and astrophysical noise. Instrumental noise arises from vibrations, thermal fluctuations, and even quantum effects within the detectors themselves, requiring constant calibration and sophisticated noise reduction techniques. Furthermore, recovering genuine gravitational wave signals from complex sources, such as merging black holes or neutron stars with asymmetric mass ratios, demands intricate data analysis algorithms and robust statistical methods. Identifying a true signal means separating it from the ‘noise floor’ created by these overlapping influences, a process akin to isolating a whisper in a hurricane, and it remains a primary focus of ongoing research and detector upgrades.

Unveiling Hidden Signals: The Power of Matched Filtering

Matched filtering is a signal processing technique central to gravitational wave detection. The process involves cross-correlating the data stream from detectors, which contains both gravitational wave signals and noise, with a library of predicted waveforms. This correlation maximizes the signal-to-noise ratio (SNR) when the incoming signal matches a template in the library. Essentially, the technique identifies waveforms buried in noise by leveraging prior knowledge of the expected signal shape. The output of the matched filter is an SNR time series indicating how strongly the data resemble the template at each point in time, with peaks marking potential detections. Peaks above a chosen SNR threshold act as triggers for further analysis and verification of events.
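To make the idea concrete, here is a minimal time-domain sketch of a matched filter. It rests on simplifying assumptions that are not taken from the study: the noise is white and Gaussian (so no whitening by the detector's noise spectrum is needed), and `toy_chirp` is a hypothetical stand-in for a real waveform model.

```python
import math
import random


def normalize(template):
    """Scale a template to unit norm so the filter output is in SNR units."""
    norm = math.sqrt(sum(x * x for x in template))
    return [x / norm for x in template]


def matched_filter_snr(data, template, sigma=1.0):
    """Slide a unit-norm template across the data and return the SNR time series.

    Assumes white Gaussian noise with standard deviation `sigma`; real
    pipelines first whiten the data using the detector's noise spectrum.
    """
    h = normalize(template)
    n = len(h)
    return [
        sum(data[t + k] * h[k] for k in range(n)) / sigma
        for t in range(len(data) - n + 1)
    ]


def toy_chirp(n_samples, f0=0.02, chirp_rate=2e-4):
    """Toy chirp with linearly rising frequency (not a physical waveform)."""
    return [
        math.sin(2 * math.pi * (f0 * i + 0.5 * chirp_rate * i * i))
        for i in range(n_samples)
    ]


random.seed(0)
template = toy_chirp(256)
injection_time = 500

# Unit-variance white noise with a copy of the template added at SNR 10.
data = [random.gauss(0.0, 1.0) for _ in range(2048)]
for k, value in enumerate(normalize(template)):
    data[injection_time + k] += 10.0 * value

snr = matched_filter_snr(data, template)
peak_time = max(range(len(snr)), key=lambda t: abs(snr[t]))
print(f"loudest trigger at sample {peak_time}, SNR {snr[peak_time]:.1f}")
```

The peak of the SNR series should land at or very near the injection time. Production searches perform the same correlation in the frequency domain, weighted by the measured noise spectrum.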

Template banks are comprehensive libraries of simulated gravitational waveform signals, computationally generated by solving the Einstein field equations for a wide range of binary system parameters – including masses, spins, and distances. These waveforms represent the expected signal that a gravitational wave detector would observe as a binary system inspirals, merges, and rings down. The creation of these templates involves significant computational resources, as each waveform requires numerical relativity or post-Newtonian approximations to model the spacetime distortion. A template bank isn’t a single list; rather, it’s a multi-dimensional space of waveforms, densely populated to ensure a close match exists for any potentially detectable signal within the detector’s frequency range and sensitivity. The density of templates is critical; insufficient templates can lead to missed detections, while excessive redundancy increases computational cost.
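The trade-off behind template density can be illustrated with a toy calculation. The sketch below computes the normalized overlap between a reference waveform and neighbours whose parameters are slightly offset. The linear `toy_chirp` and the chosen offsets are assumptions for illustration only. A real bank uses a noise-weighted match maximized over arrival time and phase, which this sketch omits.

```python
import math


def toy_chirp(n_samples, f0, chirp_rate):
    """Toy chirp with linearly rising frequency (not a physical waveform)."""
    return [
        math.sin(2 * math.pi * (f0 * i + 0.5 * chirp_rate * i * i))
        for i in range(n_samples)
    ]


def overlap(a, b):
    """Normalized inner product of two equal-length waveforms (white noise)."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / math.sqrt(sum(x * x for x in a) * sum(y * y for y in b))


reference = toy_chirp(512, f0=0.02, chirp_rate=2.0e-4)

# Overlap with neighbours at increasing offsets in the chirp-rate parameter.
overlaps = {}
for delta in (0.0, 2e-6, 1e-5):
    neighbour = toy_chirp(512, f0=0.02, chirp_rate=2.0e-4 + delta)
    overlaps[delta] = overlap(reference, neighbour)
    print(f"chirp-rate offset {delta:.0e}: overlap = {overlaps[delta]:.3f}")
```

The overlap approximates the fraction of SNR recovered when a signal falls between templates, so a coarser grid loses more signal, while a finer grid costs more filters per second of data.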

The efficacy of gravitational wave detection is fundamentally limited by the precision and breadth of the waveform templates used in matched filtering. Incomplete or inaccurate templates can lead to missed detections, as a true signal may not sufficiently correlate with any template in the bank. Conversely, inaccurate templates introduce false positives, where noise is misinterpreted as a gravitational wave event. Template completeness requires accounting for the parameter space of possible source systems – including masses, spins, distances, and sky locations – while accuracy depends on the fidelity of the waveform models themselves.

Sifting Through the Static: Identifying and Rejecting Glitches

Gravitational wave detectors are susceptible to non-astrophysical noise sources that can mimic or obscure true signals. A prevalent category of this noise consists of transient, short-duration glitches, with ‘Sine-Gaussian Glitches’ being particularly problematic. These glitches are characterized by a waveform resembling a sinusoid modulated by a Gaussian envelope, a short burst that rises and decays around a central frequency, and their amplitude and frequency can overlap with the expected signals from gravitational waves originating from sources like merging black holes or neutron stars. The presence of these glitches introduces false detections and reduces the overall sensitivity of the instrument, necessitating robust methods for their identification and removal during data analysis. Their brief duration and their resemblance to short astrophysical signals make distinguishing them from genuine gravitational wave signals a significant technical challenge.
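For reference, a sine-Gaussian is a sinusoid multiplied by a Gaussian envelope. The sketch below generates one. The parameter names (`f0`, `tau`, `phase`) and the default values are illustrative choices, not settings from the study.

```python
import math


def sine_gaussian(t, amplitude=1.0, t0=0.0, f0=100.0, tau=0.01, phase=0.0):
    """Sinusoid at frequency f0 (Hz) under a Gaussian envelope of width tau (s)."""
    envelope = math.exp(-((t - t0) ** 2) / (2.0 * tau ** 2))
    return amplitude * envelope * math.sin(2.0 * math.pi * f0 * (t - t0) + phase)


sample_rate = 4096  # Hz, an illustrative value
times = [i / sample_rate for i in range(-2048, 2048)]  # one second centred on t0
glitch = [sine_gaussian(t) for t in times]
```

A larger `tau` at fixed `f0` gives a longer, more narrow-band burst. A smaller `tau` gives a sharper, broader-band transient that can resemble the final cycles of a high-mass merger.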

The chi-squared test functions as a statistical consistency check on candidate events in gravitational wave detector output. In compact binary searches, it typically quantifies how well the power in a candidate is distributed across frequency bands compared with what the matching template predicts; candidates exhibiting a high chi-squared value, indicating a poor fit to the expected signal shape, are flagged as potential glitches. By establishing a threshold, candidates exceeding this value are rejected or down-weighted, effectively removing transient noise artifacts. This process reduces the probability of falsely identifying noise as a gravitational wave event and thereby increases the confidence associated with detected signals, improving the overall reliability of the search.
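One common form of the chi-squared test in compact binary searches splits the template into several bins and checks whether each bin contributes its expected share of the total SNR. The sketch below is a simplified time-domain analogue. It assumes white unit-variance noise, bins by time rather than frequency, and takes data already aligned with the template. It is not the study's implementation.

```python
import math


def chi_squared(data, template, n_bins=4):
    """Toy chi-squared consistency statistic for data aligned with a template.

    Each bin's SNR contribution is compared with the fraction of template
    power in that bin; a matching signal gives a value near n_bins - 1 in
    Gaussian noise, while a localized glitch gives a much larger value.
    """
    norm = math.sqrt(sum(x * x for x in template))
    h = [x / norm for x in template]
    size = len(h) // n_bins
    edges = [i * size for i in range(n_bins)] + [len(h)]

    rho_bins, q_bins = [], []
    for lo, hi in zip(edges[:-1], edges[1:]):
        rho_bins.append(sum(d * x for d, x in zip(data[lo:hi], h[lo:hi])))
        q_bins.append(sum(x * x for x in h[lo:hi]))  # share of template power

    rho = sum(rho_bins)  # total matched-filter SNR
    return sum((r - q * rho) ** 2 / q for r, q in zip(rho_bins, q_bins))


# Hypothetical toy template: a linear chirp, not a physical waveform.
template = [math.sin(2 * math.pi * (0.02 * i + 1e-4 * i * i)) for i in range(256)]

# Noise-free signal proportional to the template.
signal = [0.5 * x for x in template]

# Noise-free glitch: a single spike where the template is largest.
spike_index = max(range(len(template)), key=lambda i: abs(template[i]))
spike = [0.0] * len(template)
spike[spike_index] = 20.0

chi2_signal = chi_squared(signal, template)
chi2_glitch = chi_squared(spike, template)
```

The signal spreads its SNR across bins exactly as the template predicts, so its statistic is essentially zero, whereas the spike concentrates everything in one bin and scores high even though it still produces a nonzero matched-filter SNR.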

Effective glitch classification directly impacts the reliability of gravitational wave detection by reducing false positive rates and enhancing detector sensitivity. False positives, arising from misidentified noise as signals, can lead to erroneous conclusions about astrophysical events; minimizing these requires precise differentiation between genuine waveforms and transient noise artifacts. Simultaneously, accurate classification allows for the subtraction or mitigation of glitch contributions from the data stream, effectively lowering the noise floor and increasing the probability of detecting weak, but valid, gravitational wave signals. This process relies on characterizing glitch properties – duration, amplitude, frequency content – to develop algorithms that can reliably distinguish them from expected signal morphologies, thus improving overall detector performance and data quality.

A New Era of Discovery: Deep Learning for Gravitational Wave Astronomy

The analysis of gravitational wave signals increasingly relies on the power of convolutional neural networks, specifically architectures like EasyResNet, applied to representations of detector data called ‘Time-Template SNR Maps’. These maps are created through a process known as matched filtering, which essentially compares the detector’s output to predicted waveforms of potential gravitational wave events. By feeding these visual representations of the data into a neural network, researchers are moving beyond traditional signal processing techniques. This allows the network to learn complex patterns and relationships within the data, ultimately enabling a more sensitive and accurate detection of gravitational waves. The method effectively transforms the problem of gravitational wave detection into an image recognition task, leveraging advancements in computer vision to unlock new insights from the cosmos.
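A rough sketch of how such a map could be assembled is shown below, assuming that each row holds the matched-filter SNR time series for one template and each column corresponds to one time sample. That layout, the toy chirp bank, and the white-noise filter are illustrative assumptions; the study's actual map construction and the EasyResNet input format may differ.

```python
import math
import random


def toy_chirp(n_samples, f0, chirp_rate):
    """Toy chirp with linearly rising frequency (not a physical waveform)."""
    return [
        math.sin(2 * math.pi * (f0 * i + 0.5 * chirp_rate * i * i))
        for i in range(n_samples)
    ]


def normalize(waveform):
    norm = math.sqrt(sum(x * x for x in waveform))
    return [x / norm for x in waveform]


def snr_series(data, template):
    """White-noise matched filter: correlate data with a unit-norm template."""
    h = normalize(template)
    n = len(h)
    return [
        sum(data[t + k] * h[k] for k in range(n))
        for t in range(len(data) - n + 1)
    ]


def time_template_snr_map(data, bank):
    """Stack one SNR series per template: rows are templates, columns are time."""
    return [snr_series(data, template) for template in bank]


random.seed(1)
chirp_rates = [1e-4, 2e-4, 3e-4, 4e-4]
bank = [toy_chirp(256, 0.02, rate) for rate in chirp_rates]

# Inject the third template into unit-variance white noise at SNR 12.
injected_row, injected_time = 2, 300
data = [random.gauss(0.0, 1.0) for _ in range(1024)]
for k, value in enumerate(normalize(bank[injected_row])):
    data[injected_time + k] += 12.0 * value

snr_map = time_template_snr_map(data, bank)
best_row = max(range(len(snr_map)), key=lambda r: max(abs(v) for v in snr_map[r]))
```

A signal shows up as a localized bright feature whose shape across neighbouring rows depends on how the templates overlap, which is the kind of two-dimensional pattern an image classifier can learn to separate from noise.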

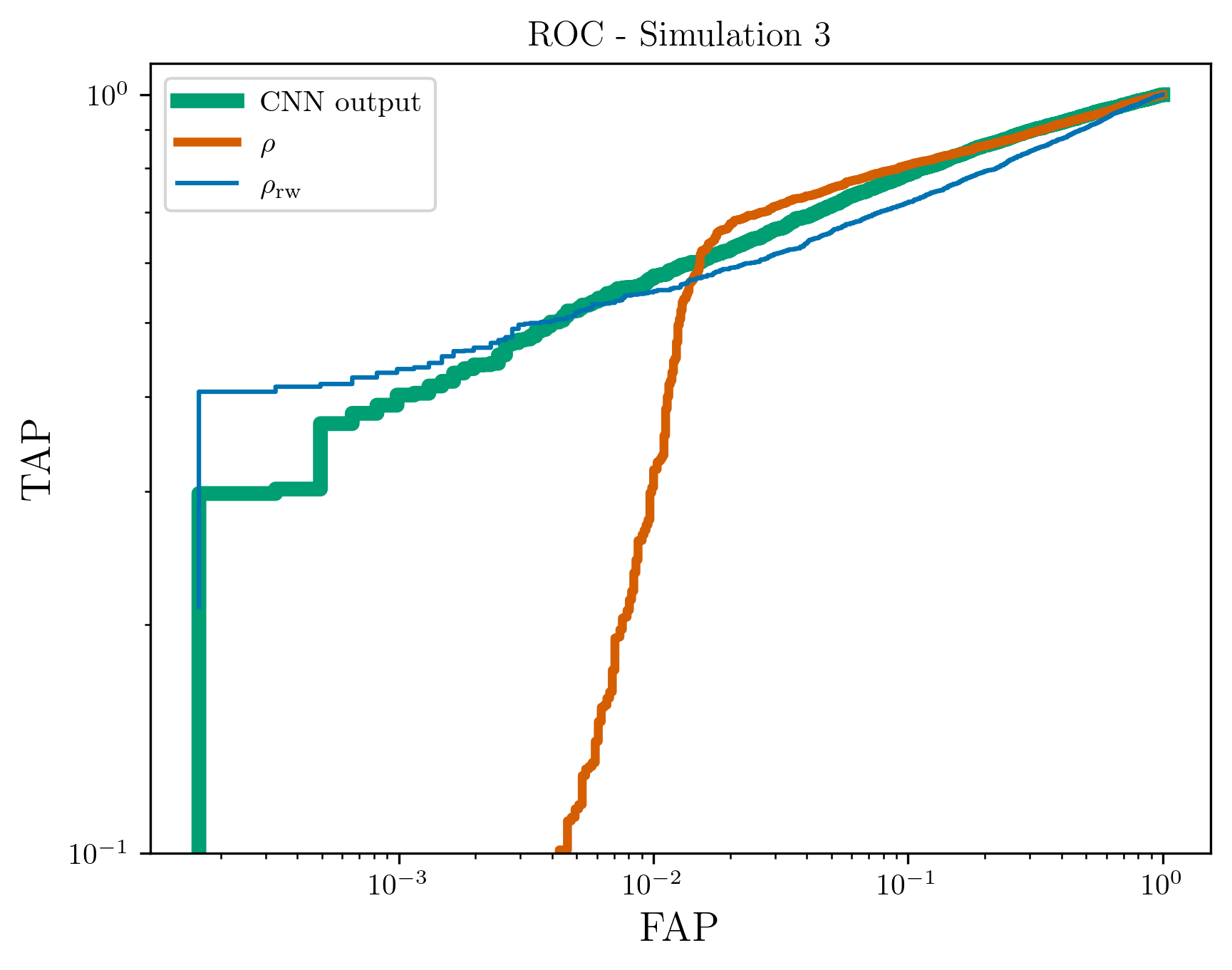

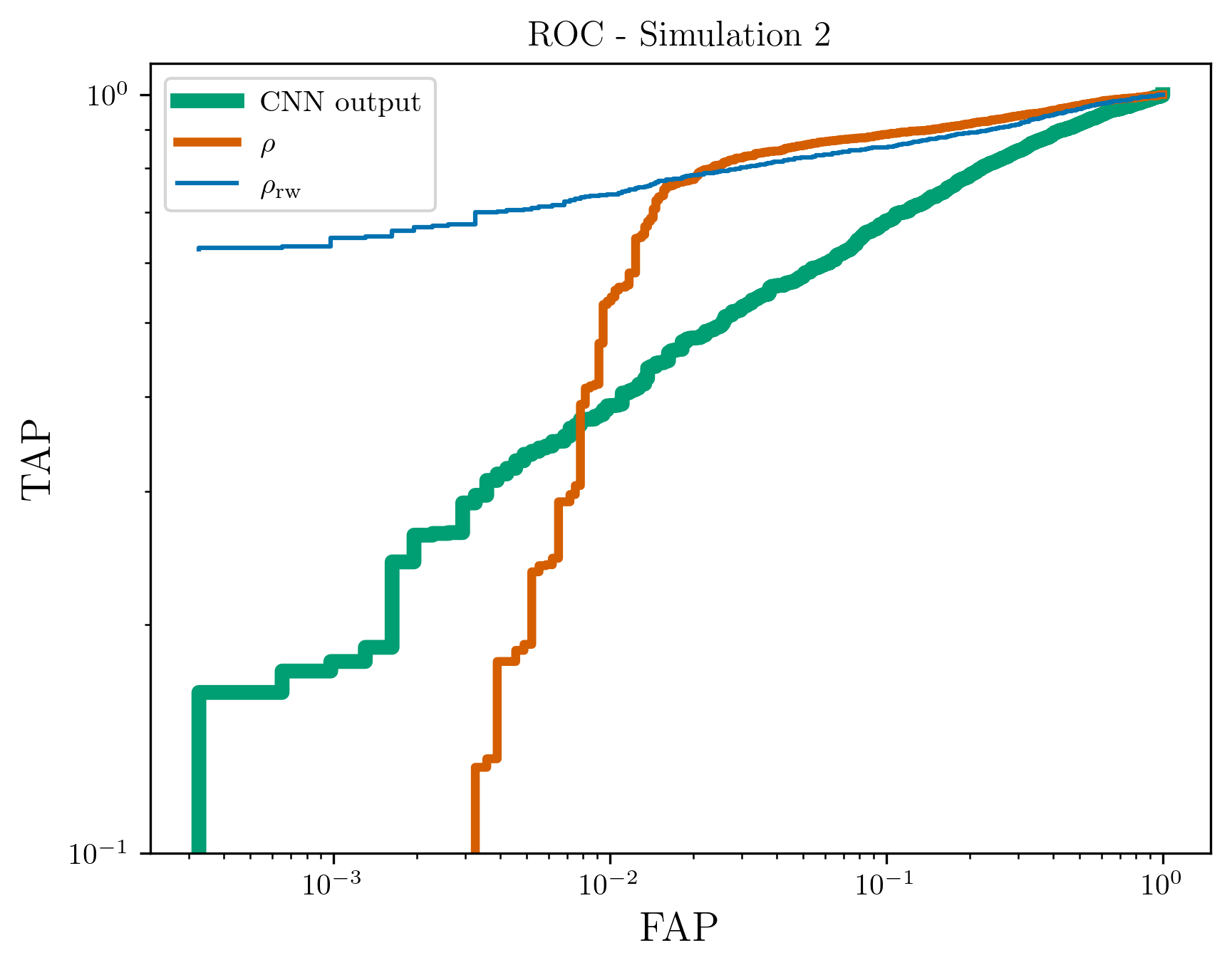

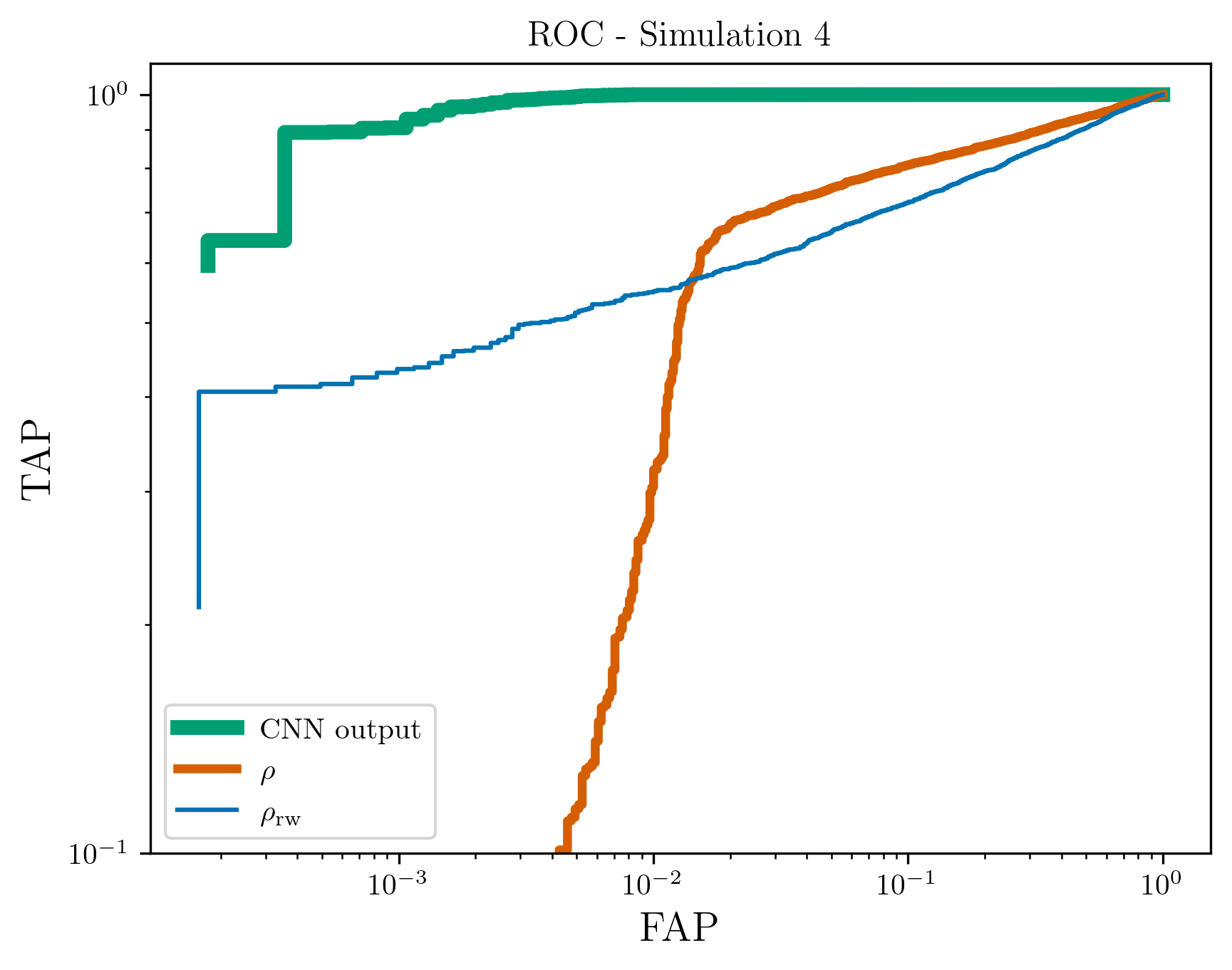

The implementation of convolutional neural networks for gravitational wave detection yields a demonstrably improved ability to distinguish true signals from background noise, as evidenced by consistently higher Receiver Operating Characteristic Area Under the Curve (ROC AUC) scores when compared to traditional detection statistics.
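For readers unfamiliar with the metric, ROC AUC equals the probability that a randomly chosen signal sample receives a higher score than a randomly chosen noise sample, with ties counted as one half. The sketch below computes it directly from that definition. The scores are made-up numbers for illustration, not results from the study.

```python
def roc_auc(signal_scores, noise_scores):
    """Probability that a signal outranks a noise sample (ties count as 0.5)."""
    wins = 0.0
    for s in signal_scores:
        for n in noise_scores:
            if s > n:
                wins += 1.0
            elif s == n:
                wins += 0.5
    return wins / (len(signal_scores) * len(noise_scores))


# Made-up classifier outputs, for illustration only.
signal_scores = [0.9, 0.8, 0.4]
noise_scores = [0.5, 0.3, 0.1]
auc = roc_auc(signal_scores, noise_scores)
```

A value of 1.0 means perfect separation and 0.5 means the ranking is no better than chance, so the same function can compare any two detection statistics on a common footing.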

The efficiency of this deep learning approach, which achieves an inference time of roughly 3 milliseconds per image on standard CPU hardware, presents a compelling pathway for future gravitational wave detection. This speed is particularly crucial as third-generation detectors, with their increased sensitivity, are expected to generate substantially larger and more complex datasets. Consequently, traditional methods of signal analysis may become computationally prohibitive. This machine learning technique not only offers a viable alternative in terms of processing speed, but also holds the potential to dramatically reduce the size of template banks (the pre-calculated waveforms used to search for signals) without sacrificing detection capabilities. A smaller template bank translates to lower computational costs and increased sensitivity, ultimately enabling astronomers to probe a larger volume of the universe for gravitational wave events.

The application of machine learning techniques to gravitational wave astronomy promises a substantial broadening of detectable signals and, consequently, a deeper understanding of the universe. Traditional methods often rely on pre-defined signal templates, limiting detection to events closely matching those expectations; however, machine learning algorithms, particularly those adept at image analysis, can identify subtle patterns indicative of gravitational waves even when those waves deviate from modeled forms. This capability extends detection possibilities to more complex or unexpected astrophysical events, such as those involving exotic compact objects or non-standard cosmological models. Furthermore, by efficiently sifting through vast amounts of detector data, these algorithms facilitate the observation of weaker signals previously obscured by noise, effectively increasing the observable volume of space and allowing researchers to probe further into cosmic history.

Unlocking the Secrets of Spacetime: Source Properties and Beyond

The ripples in spacetime known as gravitational waves carry a wealth of information about their origins, allowing scientists to precisely characterize the properties of the cataclysmic events that create them. By meticulously analyzing these signals, researchers can determine the ‘chirp mass’ – a combined measure of the merging objects – and also discern the individual spins of the black holes or neutron stars, specifically focusing on how well these spins are aligned with the orbital axis. Furthermore, the waveforms reveal details about the orbit itself, including precession – the wobbling of the orbital plane – and eccentricity, which describes how elliptical the orbit is before the final merger. These parameters aren’t merely descriptive; they act as crucial clues, helping to unravel the formation history of these compact binary systems and providing stringent tests of Einstein’s theory of general relativity in the most extreme gravitational environments.
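Two of these quantities have simple closed forms. The chirp mass is (m1·m2)^(3/5) / (m1 + m2)^(1/5), and a commonly used summary of the aligned spins is the mass-weighted effective spin. The sketch below evaluates both; the function names and example values are illustrative.

```python
def chirp_mass(m1, m2):
    """Chirp mass in the same units as the component masses."""
    return (m1 * m2) ** 0.6 / (m1 + m2) ** 0.2


def effective_spin(m1, m2, chi1_z, chi2_z):
    """Mass-weighted average of the spin components along the orbital axis."""
    return (m1 * chi1_z + m2 * chi2_z) / (m1 + m2)


# Illustrative binary: 30 and 10 solar masses with partially aligned spins.
mc = chirp_mass(30.0, 10.0)
chi_eff = effective_spin(30.0, 10.0, 0.5, -0.5)
print(f"chirp mass = {mc:.2f} solar masses, effective spin = {chi_eff:.2f}")
```

The chirp mass governs how quickly the signal sweeps upward in frequency during the inspiral, which is why it is usually the best-measured parameter of a compact binary.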

Gravitational wave signals aren’t simple tones; they carry complex harmonics, much like sound. While the dominant quadrupole mode reveals the basic ‘loudness’ and frequency of a merging black hole or neutron star pair, higher-order modes (subtle overtones in the gravitational wave spectrum) offer a far richer description of the event. These modes are exquisitely sensitive to the source’s geometry, including asymmetries in the masses or spins of the merging objects, and the precise shape of their orbits. Analyzing these higher-order modes allows scientists to reconstruct a more complete picture of the collision, effectively ‘seeing’ the shapes and orientations of the colliding bodies.

Detailed analyses of gravitational waves are revolutionizing the understanding of compact binary systems – neutron stars and black holes locked in a fatal embrace. By precisely measuring parameters like spin and orbital eccentricity, scientists are gaining unprecedented insights into how these systems form and evolve, testing the limits of Einstein’s theory of general relativity in extreme gravitational fields. These observations aren’t merely confirming existing models; they are revealing previously unknown details about stellar death, the environments where black holes are born, and the very fabric of spacetime itself. The data allows for stringent tests of general relativity, potentially revealing deviations that could point towards new physics beyond our current understanding, and offers a unique window into the universe’s most energetic and mysterious phenomena.

The pursuit of gravitational wave detection, as detailed in this research, echoes a fundamental principle of responsible innovation. The application of deep learning to signal processing, specifically through Convolutional Neural Networks and Time-Frequency analysis, isn’t merely a computational advancement, but a re-evaluation of how knowledge is encoded and retrieved. As Epicurus observed, “It is not the pursuit of pleasure itself that is wrong, but the struggle to attain it.” Similarly, the efficiency gained through these algorithms isn’t an end in itself; it’s the capacity to more effectively discern meaningful signals from the noise, ensuring that the acceleration of discovery is guided by a direction, the expansion of understanding, and not simply by speed. This approach exemplifies the need for ethics to accompany progress, especially when dealing with complex data analysis and the interpretation of subtle phenomena.

What Lies Ahead?

The pursuit of gravitational wave astronomy increasingly relies on algorithms to discern whispers from the cosmos. This work, while demonstrating the promise of deep learning for signal detection, implicitly highlights a critical juncture. The efficacy of these networks is fundamentally tied to the data upon which they are trained – a constructed reality of anticipated signals. The system excels at finding what it knows to look for, yet the universe, predictably, resists complete categorization. The true challenge isn’t simply increasing detection efficiency, but developing systems capable of recognizing the genuinely novel, the signals that fall outside pre-defined parameter spaces.

Future efforts will likely focus on hybrid approaches, integrating the strengths of traditional matched filtering with the adaptive capabilities of deep learning. However, the ethical dimension of automated discovery demands attention. Each algorithm encodes a particular worldview, a set of assumptions about what constitutes a ‘signal’ versus ‘noise’. Transparency is a minimal moral requirement, not an optional extra, as these systems begin to shape the very contours of astronomical knowledge.

Ultimately, the question isn’t whether machines can find gravitational waves, but what kind of universe these algorithms will allow humanity to perceive. Automated discovery shapes our picture of the world through algorithms, often without anyone noticing, and the responsibility for the resulting picture rests squarely with those who construct them.

Original article: https://arxiv.org/pdf/2603.09386.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/


2026-03-11 20:28