Author: Denis Avetisyan

A new benchmark reveals the current limitations of graph neural networks when tackling complex constraint satisfaction problems, demonstrating they lag behind traditional algorithms at scale.

This study presents a comprehensive performance evaluation of graph neural networks on constraint satisfaction problems, analyzing their behavior relative to established solvers and algorithmic thresholds.

Claims of superior performance for graph neural networks (GNNs) on hard optimization problems often lack rigorous validation. The paper ‘Benchmarking Graph Neural Networks in Solving Hard Constraint Satisfaction Problems’ addresses this by introducing new, statistically informed benchmarks derived from random constraint satisfaction problems (CSPs). Its comparative analysis reveals that, despite recent advances, classical algorithms currently outperform GNNs on these challenging instances, particularly as problem scale increases. Will future architectural innovations or training strategies enable GNNs to overcome these limitations and unlock their potential for solving complex CSPs?

The Cracks in Established Logic

Despite decades of refinement, established algorithms for Constraint Satisfaction Problems (CSPs), such as WalkSAT and Survey Propagation, begin to falter as problem complexity escalates. These methods, while frequently successful on moderately sized instances, struggle with the rapidly growing search space of larger, more intricate problems. The core limitation stems from their reliance on local search or message passing, techniques prone to becoming trapped in suboptimal configurations or overwhelmed by computational demands. As the number of variables and constraints grows, the ‘energy landscapes’ of these problems become increasingly ‘glassy’: riddled with local minima that keep the algorithms from efficiently exploring the solution space and finding valid assignments.
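To make the local-search idea concrete, here is a minimal sketch of a WalkSAT-style solver for Boolean satisfiability, one common type of CSP. It is a simplified variant rather than the implementation evaluated in the paper: the greedy step recounts all unsatisfied clauses instead of tracking break counts, and the names `walksat`, `unsatisfied`, `noise`, and `max_flips` are illustrative assumptions.

```python
import random

def unsatisfied(clauses, assign):
    """Return the clauses that no literal satisfies under `assign`."""
    return [c for c in clauses
            if not any(assign[abs(l)] == (l > 0) for l in c)]

def walksat(clauses, n_vars, max_flips=10_000, noise=0.5, seed=0):
    """Simplified WalkSAT sketch (assumed parameters, not the paper's setup).

    Literals are nonzero ints: +v means variable v is True,
    -v means variable v is False.
    """
    rng = random.Random(seed)
    assign = {v: rng.random() < 0.5 for v in range(1, n_vars + 1)}
    for _ in range(max_flips):
        unsat = unsatisfied(clauses, assign)
        if not unsat:
            return assign  # every clause is satisfied
        clause = rng.choice(unsat)
        if rng.random() < noise:
            # Random-walk move: flip any variable of the violated clause.
            var = abs(rng.choice(clause))
        else:
            # Greedy move: flip the variable that leaves the fewest
            # unsatisfied clauses overall.
            def cost_after_flip(v):
                assign[v] = not assign[v]
                cost = len(unsatisfied(clauses, assign))
                assign[v] = not assign[v]
                return cost
            var = min((abs(l) for l in clause), key=cost_after_flip)
        assign[var] = not assign[var]
    return None  # flip budget exhausted without finding a solution

# Usage: (x1 or x2) and (not x1 or x3) and (not x2 or not x3)
formula = [(1, 2), (-1, 3), (-2, -3)]
solution = walksat(formula, n_vars=3)
```

The `noise` parameter is what lets the search escape shallow traps: with some probability it accepts a move that may make things worse. On glassy instances, that escape mechanism is exactly what becomes insufficient.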

Classical algorithms tackling Constraint Satisfaction Problems (CSPs) frequently encounter difficulties not because of inherent flaws in the algorithms themselves, but because of the problem structure. Hard CSP instances often exhibit what is known as a ‘glassy’ energy landscape: a highly irregular surface with numerous local minima. As the algorithm searches for the optimal solution (the lowest point in this landscape), it can become trapped in one of these local minima and mistake it for the true solution. From such a point, every available local move increases the overall cost, so a purely greedy search stalls. This phenomenon, combined with the exponential growth of computational demands as problem size increases, renders these methods computationally intractable for many real-world applications, despite their continued success at smaller scales.
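The notion of a local minimum can be illustrated with a small sketch. It assumes the usual convention that the ‘energy’ of an assignment is the number of violated clauses; the function names and the toy formula (which repeats clauses on purpose to create a strict trap) are illustrative and not taken from the paper.

```python
def energy(clauses, assign):
    """Number of violated clauses; 0 means the assignment is a solution."""
    return sum(
        not any(assign[abs(l)] == (l > 0) for l in c) for c in clauses
    )

def is_local_minimum(clauses, assign):
    """True if no single-variable flip strictly lowers the energy."""
    base = energy(clauses, assign)
    for v in assign:
        flipped = dict(assign)
        flipped[v] = not flipped[v]
        if energy(clauses, flipped) < base:
            return False
    return True

# Toy formula: literals are signed ints (+v is True, -v is False).
toy = [(1, 2), (-1, 2), (-1, 2), (1, -2), (1, -2)]
trapped = {1: False, 2: False}
solved = {1: True, 2: True}

print(energy(toy, trapped))            # 1
print(is_local_minimum(toy, trapped))  # True
print(energy(toy, solved))             # 0
```

Here the assignment with both variables set to False violates one clause, and flipping either variable alone violates two. The solution is only two flips away, yet a strictly greedy search never gets there.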

Progress in applying machine learning to Constraint Satisfaction Problems is hampered by a critical lack of universally accepted benchmarks. While novel algorithms demonstrate promise in limited scenarios, a direct comparison to established methods like WalkSAT and Survey Propagation remains difficult due to inconsistent testing frameworks and datasets. Classical algorithms, meanwhile, maintain surprisingly high success rates even as problem scale increases, which raises the bar for new approaches, while inconsistent evaluation obscures genuine advancements. The absence of standardized evaluation metrics makes it challenging to objectively assess whether machine learning techniques truly outperform, or even equal, the efficiency and robustness of these well-tuned, traditional solvers, thereby slowing the adoption of potentially transformative technologies.

Mapping the Chaos: A New Benchmark for Robustness

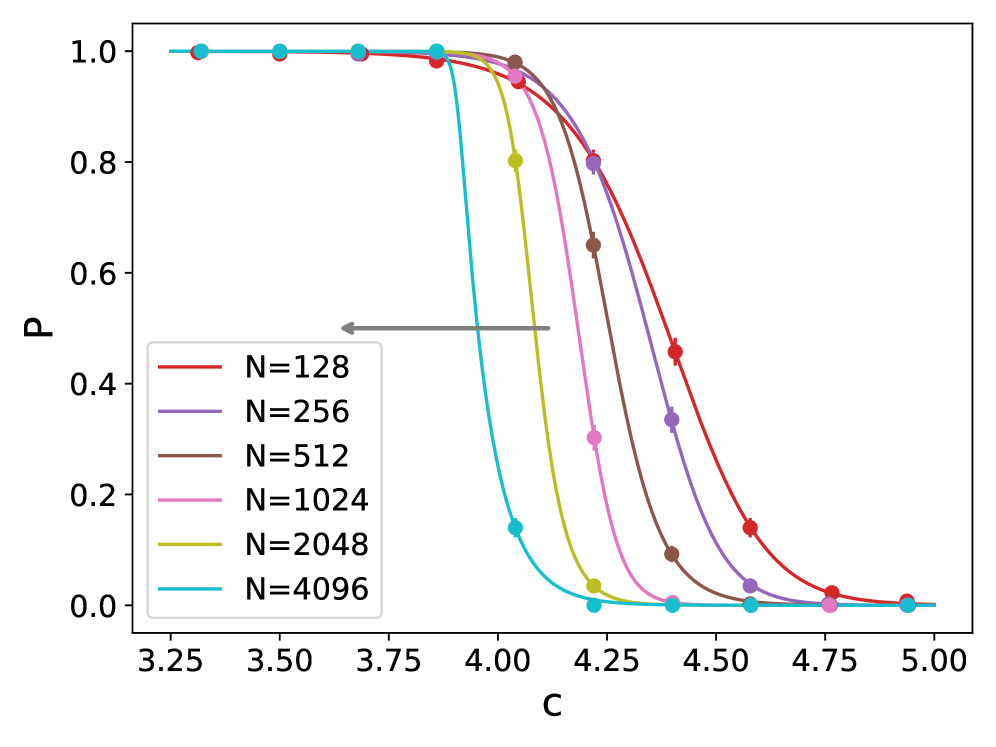

The newly introduced benchmark utilizes principles from statistical physics to construct problem instances. Specifically, it draws upon the concepts of phase transitions and energy landscapes to model problem hardness. Phase transitions, analogous to those observed in physical systems, represent points where solver performance undergoes a significant and often abrupt change. The benchmark’s construction allows for the systematic variation of parameters influencing the ‘energy landscape’ of the constraint satisfaction problem (CSP), effectively controlling the density of solutions and the ruggedness of the search space. This approach enables researchers to investigate solver behavior not simply based on problem size, but also in relation to these key characteristics of the problem’s structure, offering a more nuanced evaluation than traditional benchmarks.

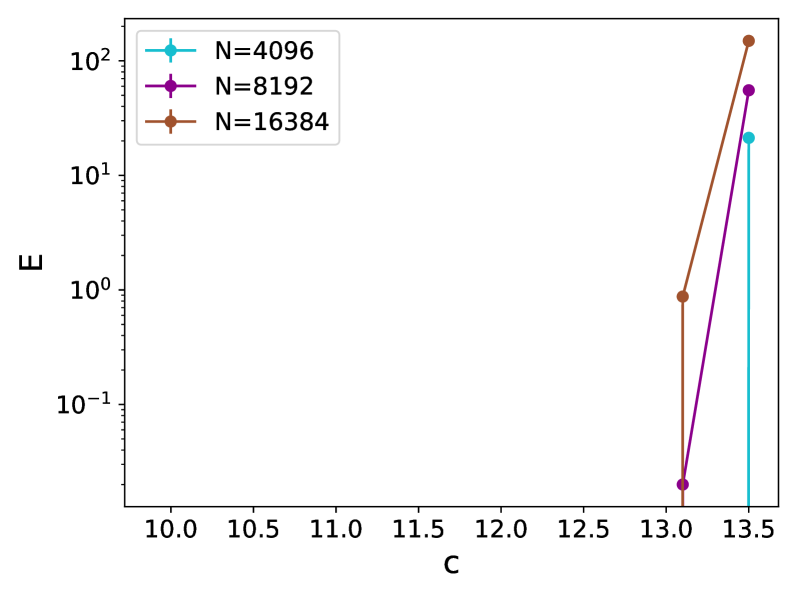

The benchmark utilizes a multi-dimensional difficulty structure, moving beyond simple scaling of problem size. Difficulty is modulated through adjustable parameters that directly influence the constraints and relationships within each problem instance, thereby controlling the inherent hardness independent of the number of variables or clauses. This allows for the creation of problem sets at specific difficulty levels, enabling a fine-grained analysis of solver performance across a spectrum of challenges. By systematically varying these parameters, researchers can isolate the impact of specific problem characteristics on solver efficiency and identify performance bottlenecks, rather than solely observing scaling behavior with increasing size.
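As an illustration of a hardness parameter that is independent of size, the sketch below samples random k-SAT formulas at a chosen clause-to-variable ratio `alpha`. This is an assumption about what such a parameter could look like, since the article does not spell out the paper's exact ensembles; the function name and arguments are hypothetical. For random 3-SAT, the satisfiability threshold is commonly estimated at a ratio of roughly 4.27, and instances tend to become harder for solvers as the ratio approaches it.

```python
import random

def random_ksat(n_vars, alpha, k=3, seed=None):
    """Sample a random k-SAT formula with round(alpha * n_vars) clauses.

    Each clause draws k distinct variables uniformly at random and negates
    each one with probability 1/2. Literals are signed ints (+v / -v).
    """
    rng = random.Random(seed)
    n_clauses = round(alpha * n_vars)
    formula = []
    for _ in range(n_clauses):
        variables = rng.sample(range(1, n_vars + 1), k)
        clause = tuple(v if rng.random() < 0.5 else -v for v in variables)
        formula.append(clause)
    return formula

# Same size, different difficulty: only the ratio changes.
easy = random_ksat(n_vars=200, alpha=3.0, seed=1)
hard = random_ksat(n_vars=200, alpha=4.2, seed=1)
```

Holding `n_vars` fixed while sweeping `alpha`, or holding `alpha` fixed while growing `n_vars`, separates the two axes of difficulty that the benchmark is described as probing.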

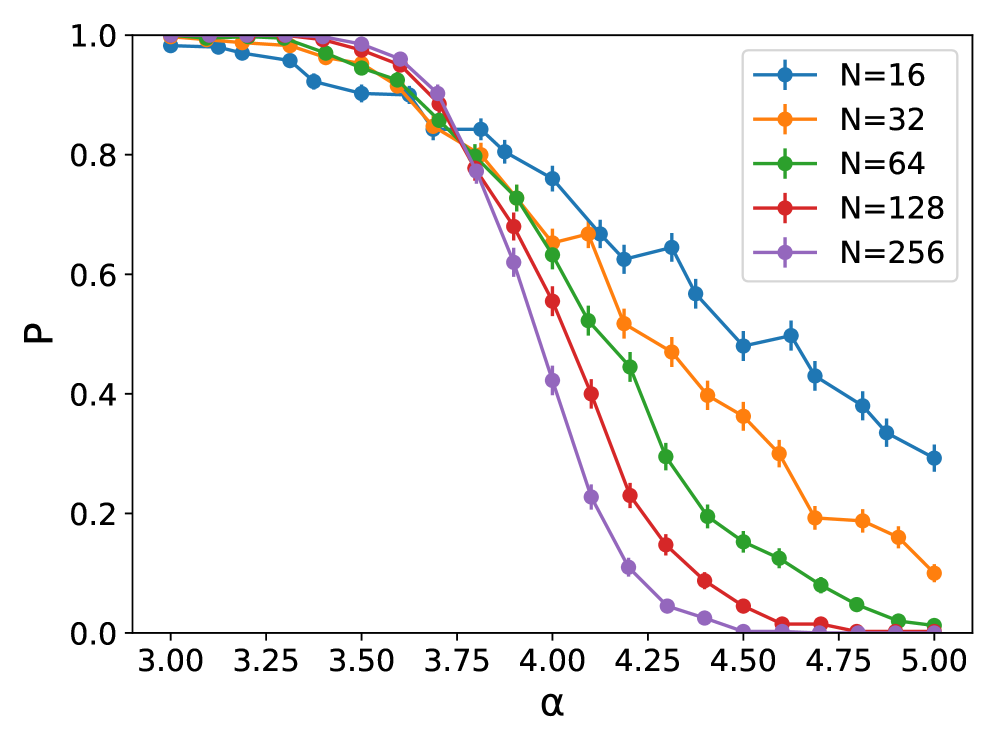

Current Constraint Satisfaction Problem (CSP) benchmarks often lack the granularity needed to effectively evaluate solver performance across the full spectrum of difficulty. Existing benchmarks typically scale difficulty solely by increasing problem size, failing to isolate the impact of inherent problem structure on solver efficiency. This new benchmark addresses this limitation by introducing parameters that directly control problem hardness, independent of size. This allows for detailed analysis of solver behavior as problems approach algorithmic thresholds – points where performance degrades significantly – which is a critical area for understanding solver limitations and guiding future development. Assessing performance near these thresholds provides a more representative evaluation than simply measuring success on easily solvable instances.

Whispers of Intelligence: Graph Neural Networks as Solvers

Recent advancements in machine learning, particularly within the field of Graph Neural Networks (GNNs), are presenting novel approaches to solving Constraint Satisfaction Problems (CSPs). Traditionally addressed with algorithms like backtracking search, constraint propagation, and local search, CSPs are now being tackled with learned heuristics. GNNs are well-suited to this task due to their ability to directly process graph-structured data, representing the constraints and variables inherent in CSP instances. This allows the network to learn relationships between variables and predict variable assignments or constraint violations. Current research focuses on architectures that can generalize across different CSP instances and problem sizes, potentially offering improved performance and scalability compared to hand-crafted algorithms, though significant challenges remain in achieving state-of-the-art results on complex, large-scale problems.

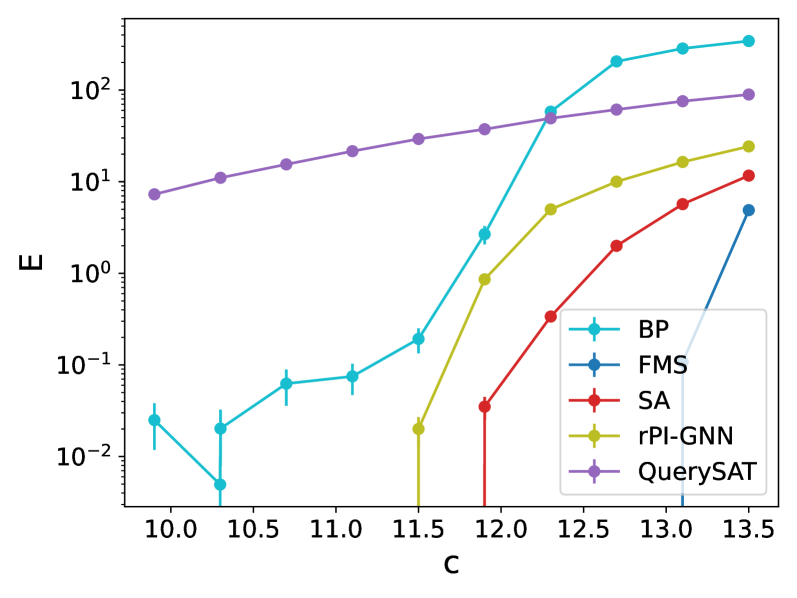

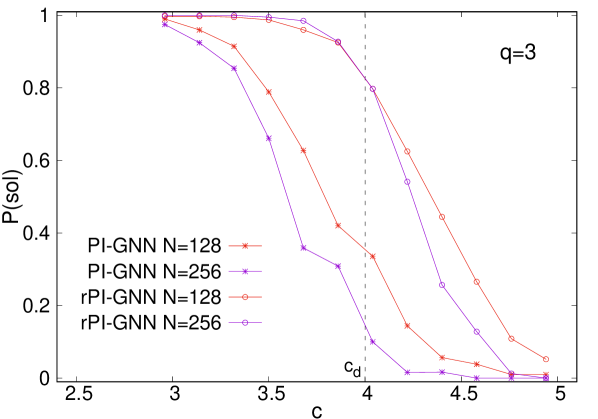

Graph Neural Networks (GNNs) such as NeuroSAT, QuerySAT, and rPI-GNN represent a novel approach to Constraint Satisfaction Problem (CSP) solving by leveraging learned heuristics. These models operate on the problem’s graph representation, iteratively refining variable assignments based on node features and message passing between neighboring nodes. NeuroSAT, for example, predicts variable assignments based on clause satisfaction, while QuerySAT utilizes a query-based approach to guide search. rPI-GNN incorporates a probabilistic inference mechanism to improve solution quality. Through training on diverse CSP instances, these GNNs aim to acquire strategies for effectively pruning the search space and identifying promising solution candidates, potentially exceeding the performance of hand-crafted heuristics in specific problem domains.
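The graph representation and a single round of message passing can be sketched in plain Python. This is a toy with fixed, hand-written update rules, not the architecture of NeuroSAT, QuerySAT, or rPI-GNN: a real GNN would replace the `max` and `sum` rules below with learned functions and vector-valued node states. All names are illustrative assumptions.

```python
def build_factor_graph(clauses):
    """Return edges (clause_index, variable, sign) with sign = +1 or -1."""
    return [(i, abs(l), 1 if l > 0 else -1)
            for i, c in enumerate(clauses) for l in c]

def message_passing_round(edges, var_state, n_clauses):
    """One untrained round: variables -> clauses -> variables.

    var_state maps each variable to a soft truth value in [-1, 1],
    where +1 leans True and -1 leans False.
    """
    # Clause message: how well its best literal agrees with current states.
    clause_msg = [-1.0] * n_clauses
    for i, v, s in edges:
        clause_msg[i] = max(clause_msg[i], s * var_state[v])
    # Variable update: clauses that look unsatisfied pull each variable
    # toward the sign that would satisfy them.
    new_state = {}
    for v, x in var_state.items():
        pull = sum(s * (1.0 - clause_msg[i]) / 2.0
                   for i, u, s in edges if u == v)
        new_state[v] = max(-1.0, min(1.0, x + 0.5 * pull))
    return new_state

# (x1 or x2) and (not x1 or x2): both clauses favor x2 = True.
formula = [(1, 2), (-1, 2)]
edges = build_factor_graph(formula)
state = {1: 0.0, 2: 0.0}
state = message_passing_round(edges, state, n_clauses=len(formula))
```

After one round the state of variable 2 moves toward True while variable 1, pulled equally in both directions, stays undecided. Repeating such rounds is what "iteratively refining variable assignments" amounts to; the learned versions differ in how the messages are computed.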

Training and evaluating Graph Neural Network (GNN) based Constraint Satisfaction Problem (CSP) solvers presents challenges related to computational scaling. Inference time for GNNs increases with problem size, requiring optimization for practical application. Current empirical results show that GNN approaches, including NeuroSAT, QuerySAT, and rPI-GNN, underperform established classical CSP algorithms on difficult problem instances, especially as the scale of the problem increases. Classical algorithms exhibit more consistent performance across varying problem sizes, while GNN performance degrades as instances grow. Consequently, research focuses on unsupervised learning techniques that improve GNN training efficiency and scalability.

Beyond the Horizon: Implications and Future Directions

The advancement of Constraint Satisfaction Problem (CSP) solving is inextricably linked to the quality of its benchmark suites; a thoughtfully designed evaluation framework is not merely a tool for measuring progress, but a catalyst for it. Current benchmarks often fail to capture the complexities of real-world problems, leading to algorithms optimized for contrived scenarios rather than genuine robustness. This work underscores that progress stagnates when evaluations lack nuance, as solvers can achieve high scores by exploiting benchmark weaknesses instead of developing genuinely improved capabilities. Therefore, prioritizing the creation of challenging, diverse, and representative benchmarks is crucial for directing future research and accelerating the development of algorithms capable of tackling increasingly complex constraints found in areas like scheduling, resource allocation, and artificial intelligence.

The advancement of constraint satisfaction problem (CSP) solving hinges not only on algorithmic innovation, but crucially on the quality of benchmarks used to assess progress. Existing evaluation frameworks often fail to capture the complexities of real-world problems, leading to algorithms that perform well on contrived examples but struggle with practical challenges. A more nuanced evaluation, incorporating diverse constraints, larger problem instances, and distributions mirroring real-world scenarios, is essential for driving genuine improvement. Such a framework facilitates the development of algorithms that are not merely faster on existing benchmarks, but demonstrably more robust, generalizable, and efficient across a wider spectrum of applications, ultimately accelerating the field’s overall progress and enabling solutions to previously intractable problems.

Investigations are now shifting toward rigorously testing the boundaries of Graph Neural Network (GNN) based constraint satisfaction problem (CSP) solvers, with a particular emphasis on enhancing their ability to handle increasingly complex and large-scale problems. Current efforts center on developing novel techniques to improve both scalability – allowing the solvers to address problems with more variables and constraints – and generalization capabilities, enabling effective performance across diverse problem instances. The recurrent mechanism integrated within the rPI-GNN architecture has already yielded substantial performance gains, suggesting a particularly fruitful avenue for future exploration; this approach allows the model to iteratively refine its understanding of the problem structure, leading to more informed and efficient constraint propagation.

The pursuit of algorithmic efficiency, as detailed in the benchmarking of graph neural networks against classical solvers, feels less like engineering and more like divination. The study reveals a humbling truth: even with the elegance of GNNs, scaling to genuinely difficult constraint satisfaction problems remains elusive. It echoes an ancient wisdom: “Study the past if you would define the future.” Confucius observed that understanding limitations (the ‘noise’ in the system) is the first step toward refinement. The paper’s findings aren’t a failure, but a mapping of the current threshold, a whisper from the chaos indicating where the real work of persuasion, of coaxing order from complexity, must begin. The copper is piling up, but the search for gold continues.

The Road Ahead

The results suggest a familiar pattern: a new tool arrives, promising to tame chaos, and finds itself… negotiating with it. These graph neural networks demonstrate a certain aptitude for constraint satisfaction, but currently offer little advantage over methods forged in the fires of decades-old algorithmic refinement. The observed performance isn’t a failure, precisely, but a reminder that data isn’t truth, it’s a truce between a bug and Excel. The algorithmic threshold, that point where problems become intractable, remains stubbornly resistant to neural intervention.

Future work will undoubtedly focus on architectural innovations, and perhaps on more sophisticated training regimes. However, a more fruitful avenue might lie in acknowledging the fundamental limitations. Perhaps the very structure of these networks, their inherent bias toward certain patterns, prevents them from truly grasping the nuances of hard CSP instances. Everything unnormalized is still alive, and a little chaos is to be expected.

The true test won’t be achieving marginal gains on benchmark datasets, but demonstrating a capacity to generalize, that is, to solve problems not explicitly seen during training. Until then, these networks remain elegant approximations, whispering promises of intelligence, but ultimately bound by the same intractable realities as the algorithms they seek to displace.

Original article: https://arxiv.org/pdf/2602.18419.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-23 22:14