Thinking Through Actions: Language Models Tackle Complex Decision-Making

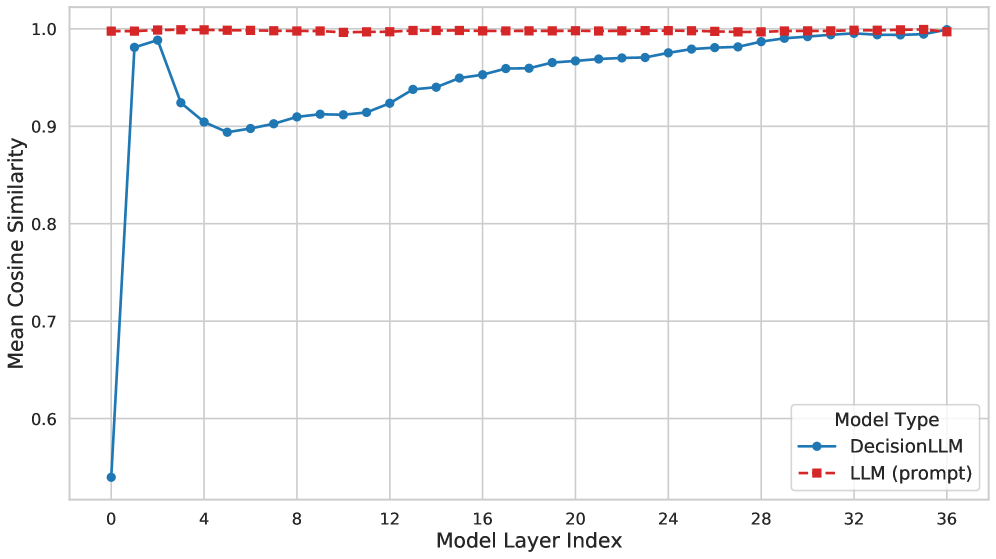

Researchers are exploring how large language models can move beyond text generation to effectively plan and execute sequences of actions in challenging, long-horizon tasks.

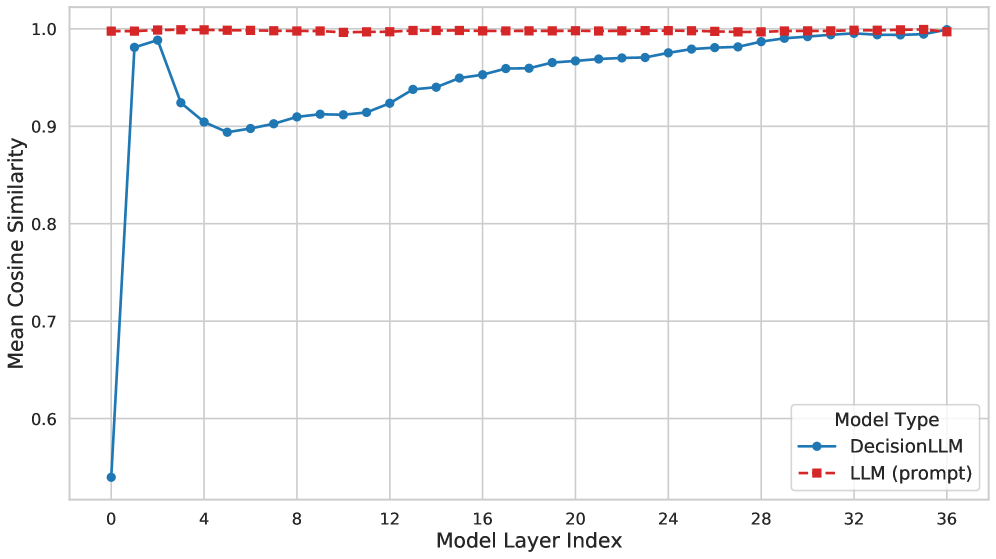

New research moves past simply detecting errors in large language models to diagnose why they occur and automatically generate training data to improve factual accuracy.

The current machine learning ecosystem is built on an unsustainable foundation of inequitable data access, demanding a new approach to value sharing.

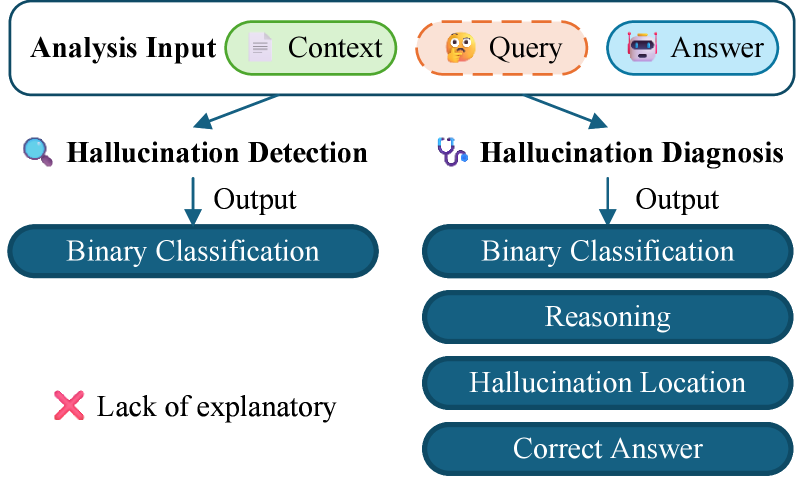

Researchers have developed a novel graph foundation model that excels at identifying anomalous groups within complex network data, even with limited examples.

![The system trains a planner and a task model iteratively, using the task model’s validation loss to guide the process (sequential steps 1-3), while updating parameters (marked by a fire icon) and leveraging a copied parameter set [latex]f_{\theta'}[/latex] derived from the original [latex]f_{\theta}[/latex].](https://arxiv.org/html/2601.10143v1/x9.png)

A new system dynamically adjusts data augmentation techniques based on real-time performance, improving the resilience and accuracy of financial time-series predictions.

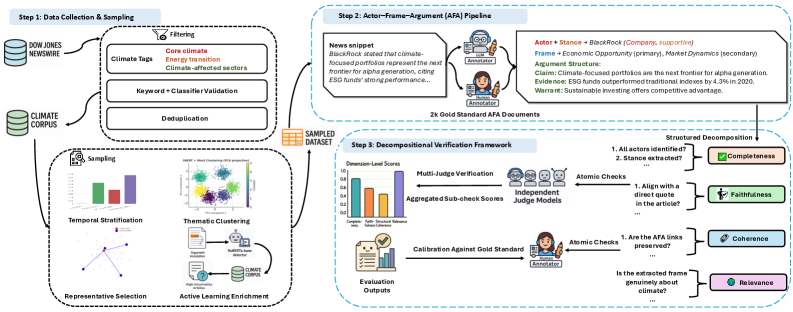

A new computational analysis of two decades of financial reporting reveals a strategic evolution in how climate change is framed, driven by key actors and their arguments.

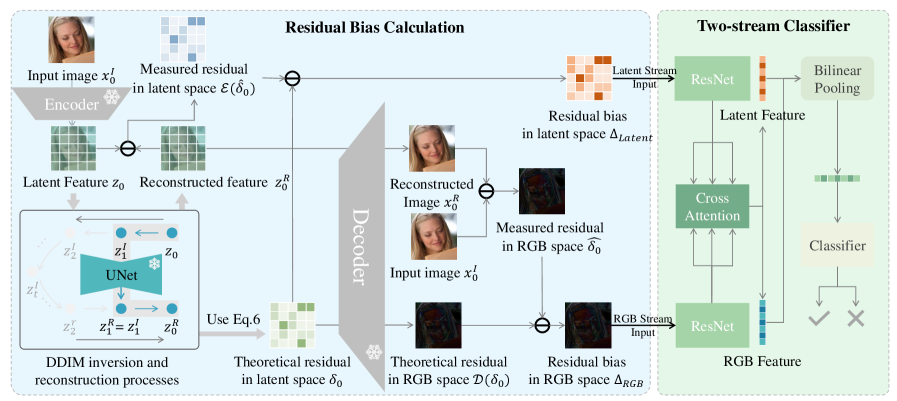

Researchers have developed a novel reconstruction-based method to reliably detect deepfakes and other manipulated images, even when facing unseen generation techniques.

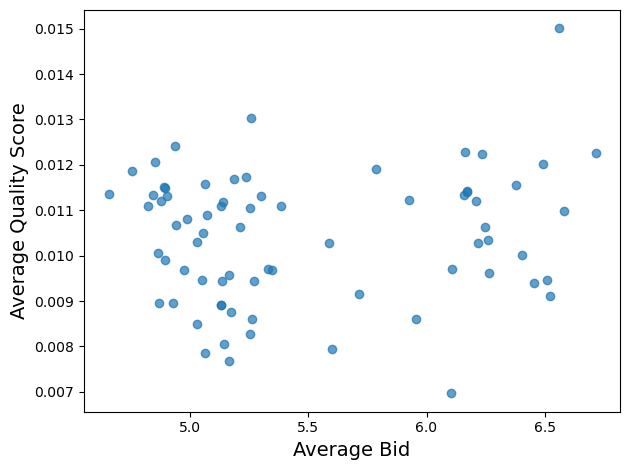

New research details an auction mechanism that leverages user data to significantly increase revenue for digital publishers.

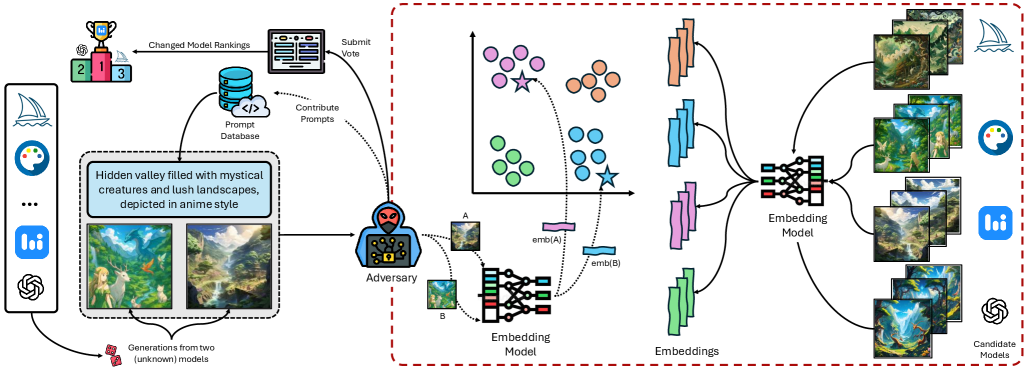

A new study reveals that text-to-image models leave subtle fingerprints in their creations, allowing researchers to reliably identify them even when prompts are unknown.

![The function [latex]g_{\alpha}(x,r) = \ln_{1/\alpha} r(x)[/latex] defines a relationship between a variable r and x, scaled by a parameter α, establishing a logarithmic connection crucial for characterizing the system’s behavior.](https://arxiv.org/html/2601.09406v1/x1.png)

A new framework clarifies the meaning of alpha-mutual information by connecting it to the fundamental concept of information leakage.