Planning’s Peril: Why Model-Based RL Struggles to Find the Right Path

Despite the promise of efficient learning, model-based reinforcement learning often falters due to unexpected challenges in its planning process.

![The study demonstrates a clear disparity in predictive power: while frequency parameters [latex] (f_{1}, f_{2}, a_{1}, a_{2}) [/latex] are reliably forecast with high accuracy ([latex] R^{2} > 0.92 [/latex]), amplitude prediction remains considerably weaker ([latex] R^{2} \approx 0.14 [/latex]). This validates the chosen decoder architecture, which separates frequency prediction via a filter bank from dedicated amplitude network learning, a necessary decoupling given the model’s difficulty in simultaneously extracting all parameters from its weights.](https://arxiv.org/html/2601.21731v1/x10.png)

Researchers have developed a new method to extract meaningful spectral representations from neural networks, moving beyond ‘black box’ predictions to reveal the underlying mechanisms.

New research reveals that even traditional machine learning methods are susceptible to adversarial attacks originating from deep neural networks, debunking the notion that simpler models are inherently secure.

Researchers are harnessing the power of theoretical physics and advanced flow models to build more robust and geometrically informed data generation systems.

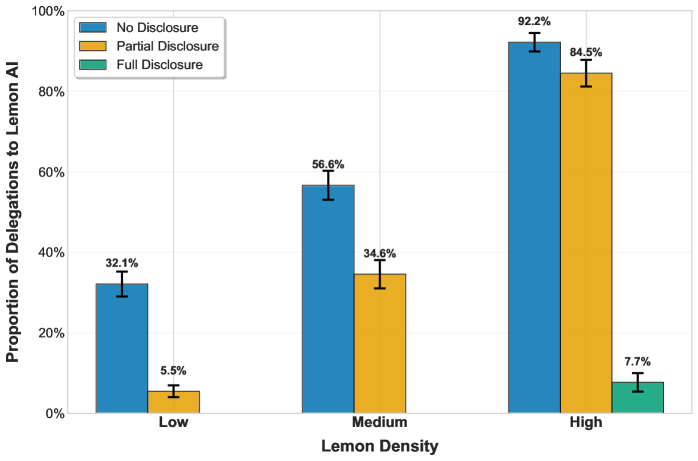

New research reveals that simply knowing what an AI can actually do isn’t enough to guarantee its successful adoption, and can even create new challenges for users.

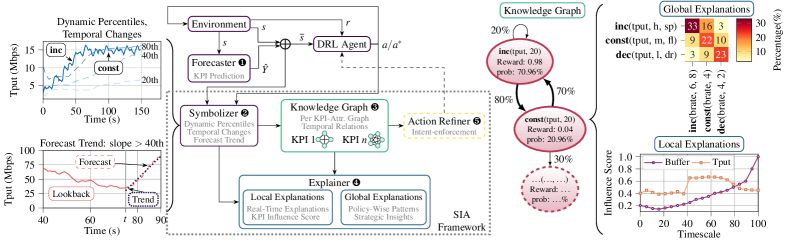

A new framework combines symbolic reasoning with deep reinforcement learning to provide real-time, understandable insights into how AI agents manage complex networks.

As artificial intelligence becomes increasingly adept at generating content, we’re facing a new era of scaled misinformation that threatens the foundations of trust online.

![The AC2L-GAD pipeline establishes a framework for anomaly detection through active node selection, constructing both anomaly-preserving counterfactual positives and normalized negatives, and then encoding these original and augmented views with a shared Graph Convolutional Network (GCN); a subsequent contrastive objective, enhanced with uniformity regularization, shapes the resulting embedding space to facilitate the derivation of robust anomaly scores.](https://arxiv.org/html/2601.21171v1/x1.png)

Researchers have developed a novel framework that leverages active learning and counterfactual reasoning to dramatically improve the identification of anomalous nodes and edges within complex graph structures.

New research suggests a surprisingly simple way to regulate algorithmic pricing and prevent collusion: by ensuring learning algorithms minimize ‘swap regret’.

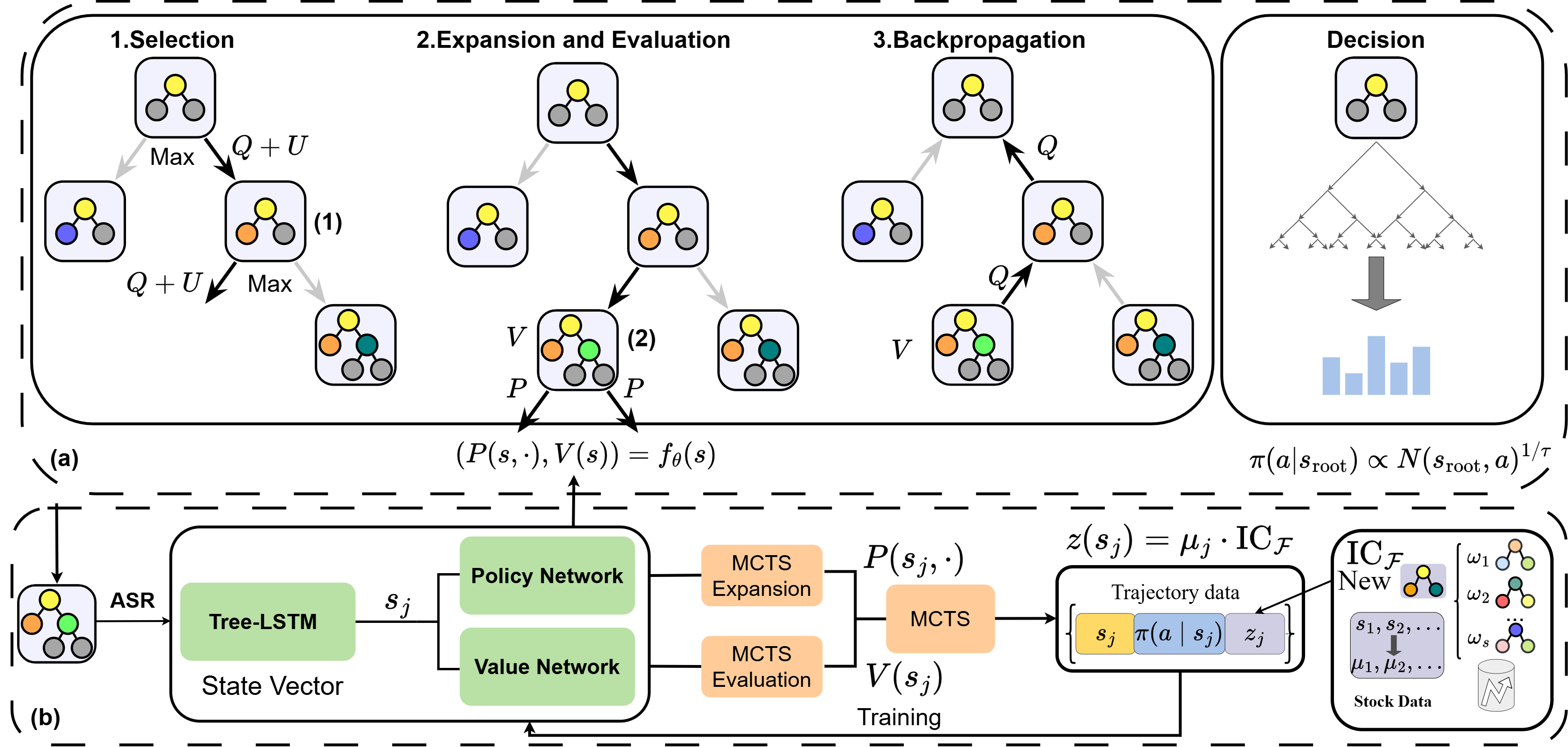

A new framework combines the power of artificial intelligence and linguistic structure to automatically discover and refine investment strategies.