Bridging the AI Security Gap: What Industry Knows That Academia Doesn’t

![Machine learning frameworks exhibit vulnerabilities stemming from diverse sources, including adversarial attacks targeting model robustness - often formulated as finding perturbations δ such that [latex] f(x + \delta) \neq f(x) [/latex] - and data poisoning which compromises training data integrity, alongside issues related to model privacy and security concerning sensitive information leakage or manipulation.](https://arxiv.org/html/2602.04753v1/img/study1codebook1.png)

New research reveals a disconnect between how adversarial machine learning threats are understood and addressed in industry versus academic settings.

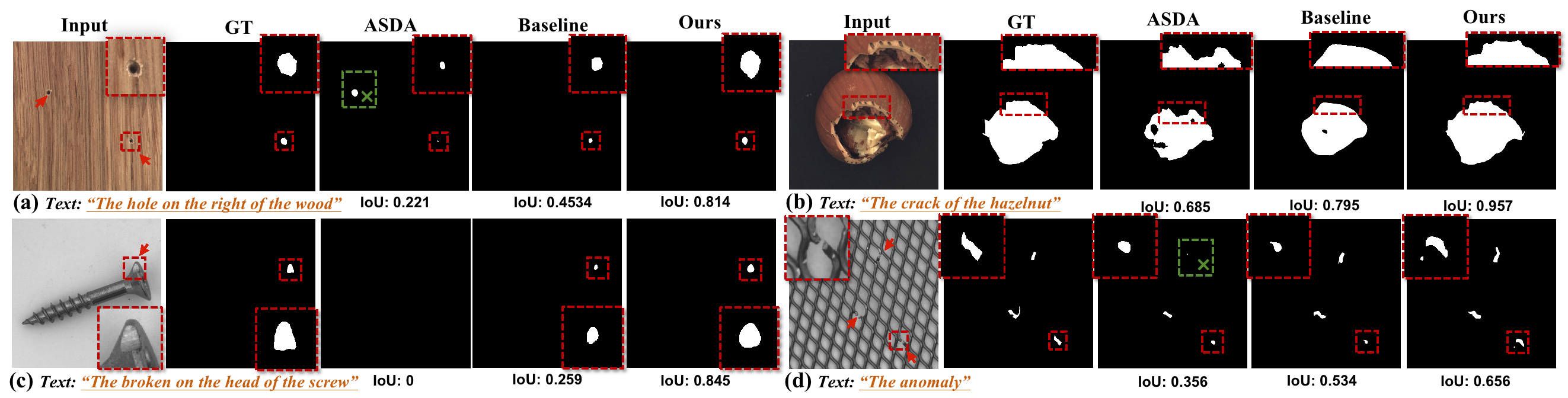

A new approach uses natural language descriptions to help artificial intelligence pinpoint and understand anomalies in industrial images, improving defect detection and localization.

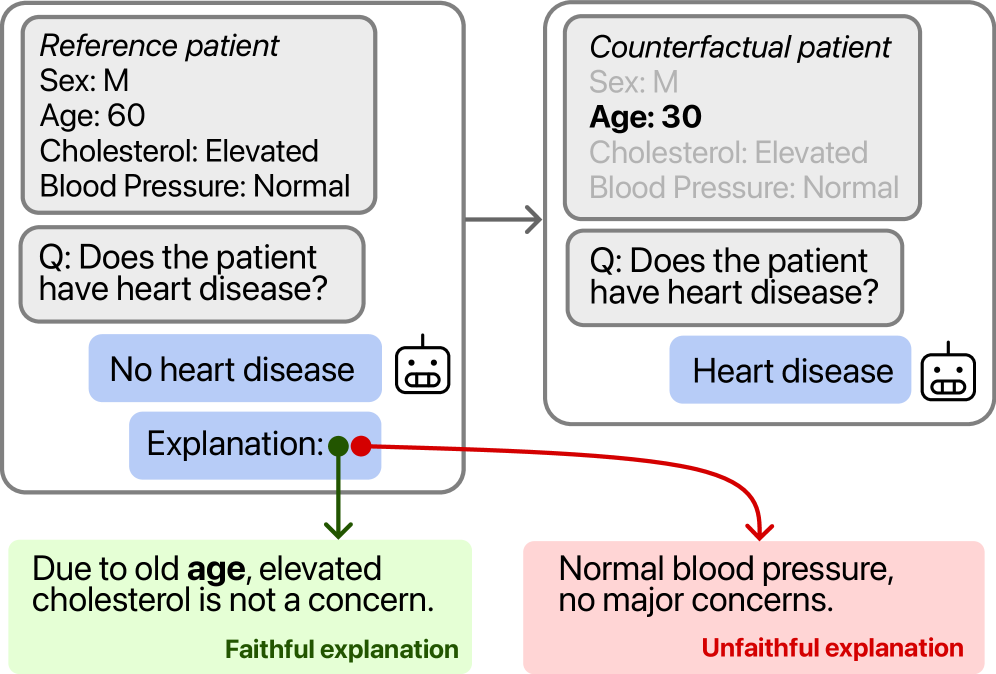

New research suggests that large language models are surprisingly capable of articulating the reasoning behind their outputs, offering a path towards more trustworthy artificial intelligence.

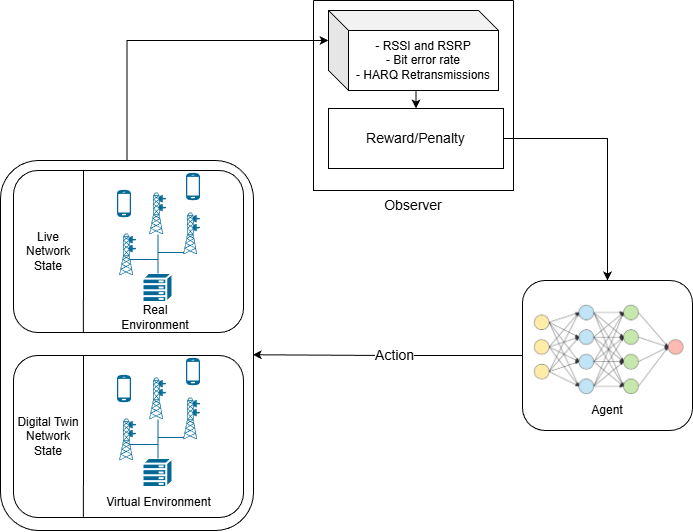

Artificial intelligence is rapidly transforming cellular infrastructure, promising more resilient, efficient, and accessible networks for all.

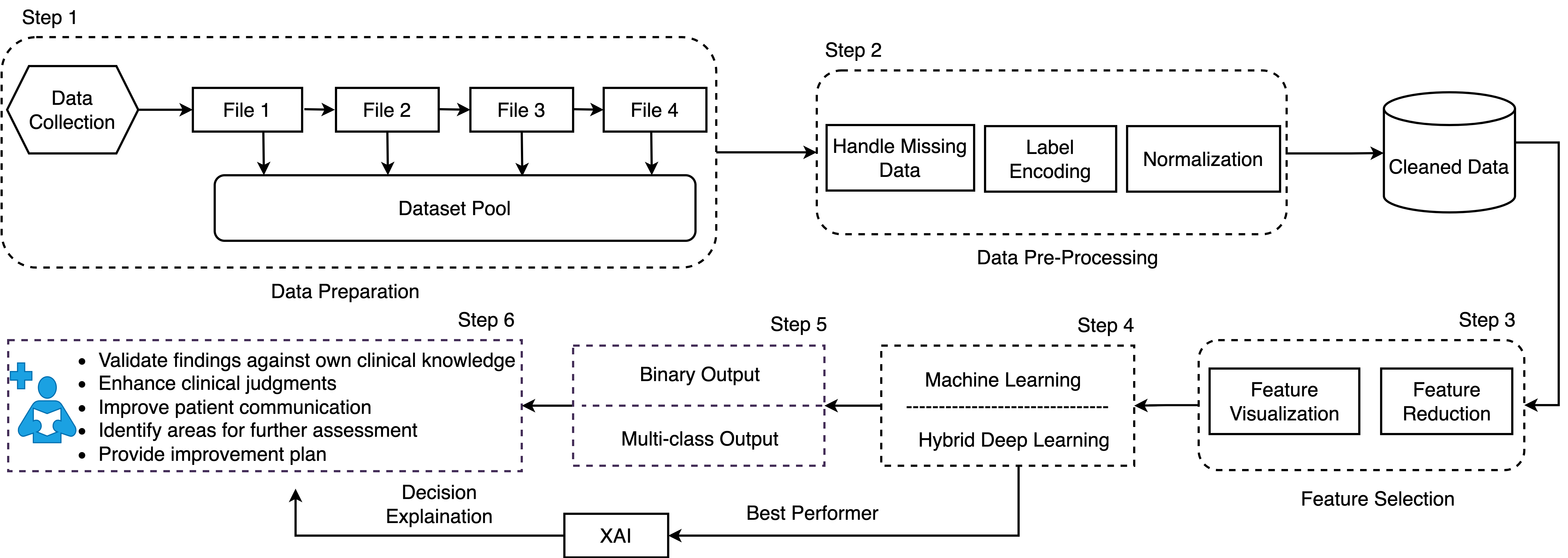

Researchers are using artificial intelligence not only to improve the accuracy of ADHD detection, but also to give clinicians deeper insight into the factors driving those diagnoses.

Researchers have developed a framework that combines the strengths of deep learning with the formal rigor of temporal logic to enhance performance in tasks requiring sequential decision-making.

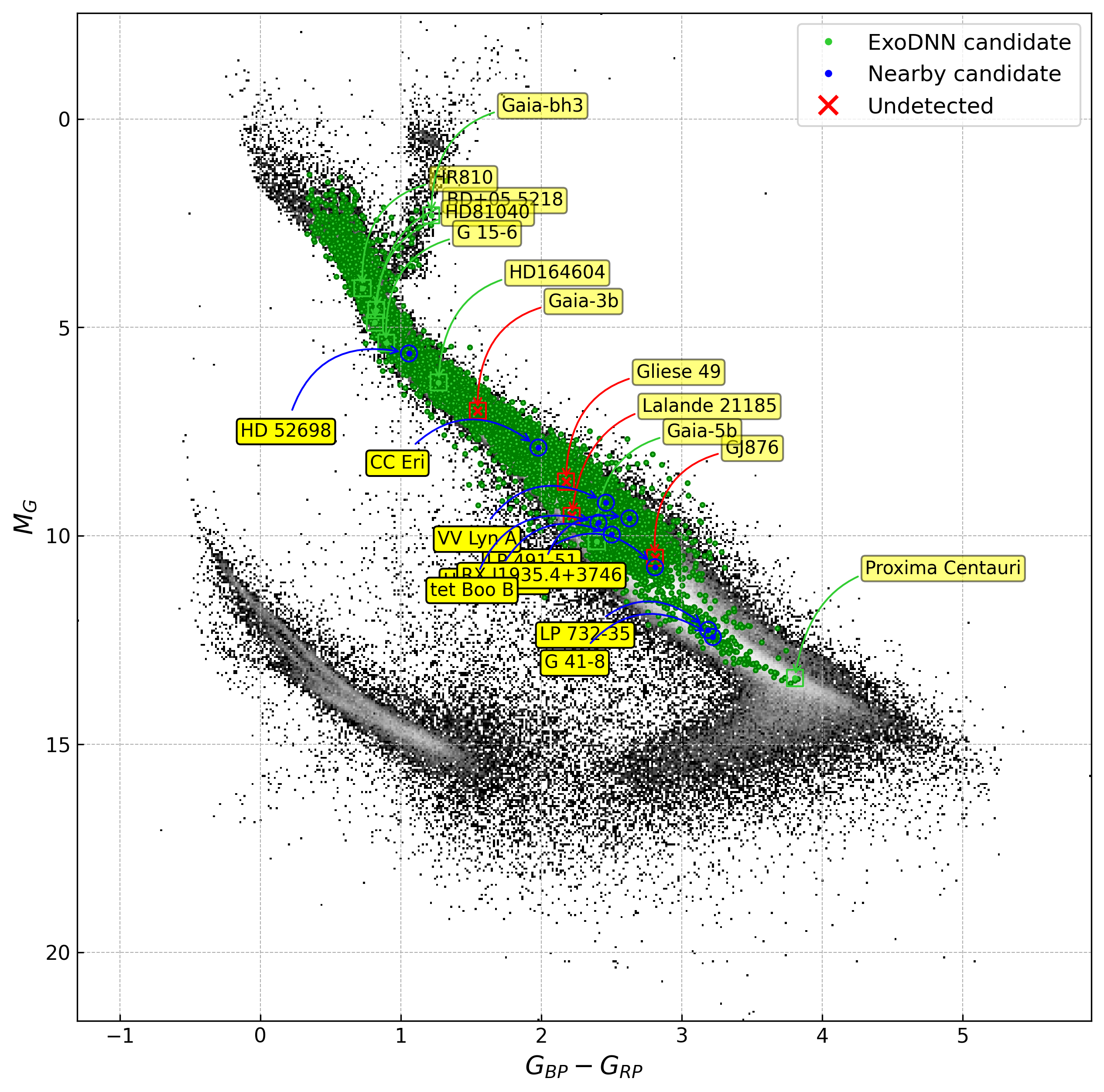

A novel deep learning model is enhancing the search for exoplanets and stellar companions within the vast dataset of the Gaia mission.

![Community-centric contrastive pre-training on multi-dimensional topic data reveals that discussions coalesce not primarily by temporal proximity, but rather by shared political alignment and enduring thematic concerns, suggesting that online discourse exhibits patterns of decay and reformation driven by ideological resonance rather than chronological order, a phenomenon discernible through the separation of clusters in the embedding space [latex] \mathbb{R}^n [/latex].](https://arxiv.org/html/2602.02525v1/x1.png)

A new approach to pre-training discussion models focuses on understanding community norms to improve performance and overcome data limitations in social media analysis.

![The study demonstrates that mechanistic forecasting (using [latex]\Psi_{g}^{latent}[/latex]) and probability-based preference distributions (using [latex]\Psi_{g}^{prob}[/latex]) yield varying win rates across countries when benchmarked against survey data represented by [latex]\Psi_{g}^{survey}[/latex], suggesting that the performance of preference modeling is geographically sensitive.](https://arxiv.org/html/2602.02882v1/00_Figures/ICML/global_win_rate_key_by_model_country_wins_only.png)

New research shows that analyzing the internal workings of large language models can offer a more nuanced understanding of population-level preferences than simply looking at their outputs.

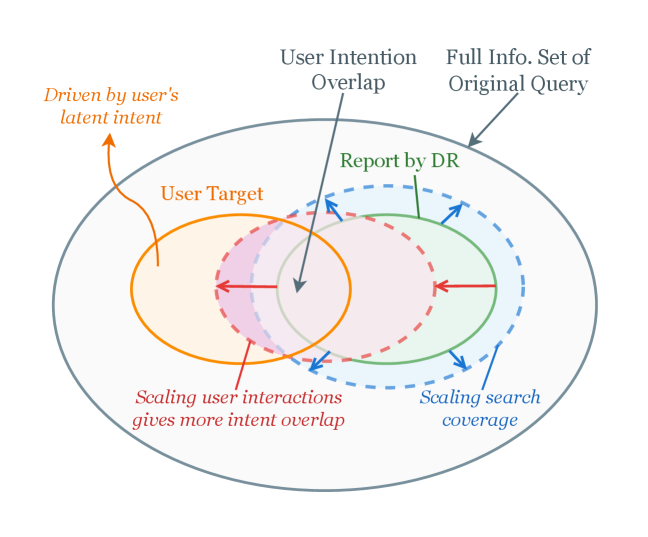

A new framework trains agents to ask clarifying questions before conducting deep research, significantly improving the quality and relevance of generated reports.