Author: Denis Avetisyan

A new approach, Aletheia, delivers a faster, more scalable solution for a key challenge in tensor decomposition by autonomously tackling the FirstProof problem.

This work introduces an efficient iterative preconditioned conjugate gradient solver for RKHS-constrained CP decomposition, achieving complexity independent of dataset size through innovative algebraic manipulation and tensor decomposition techniques.

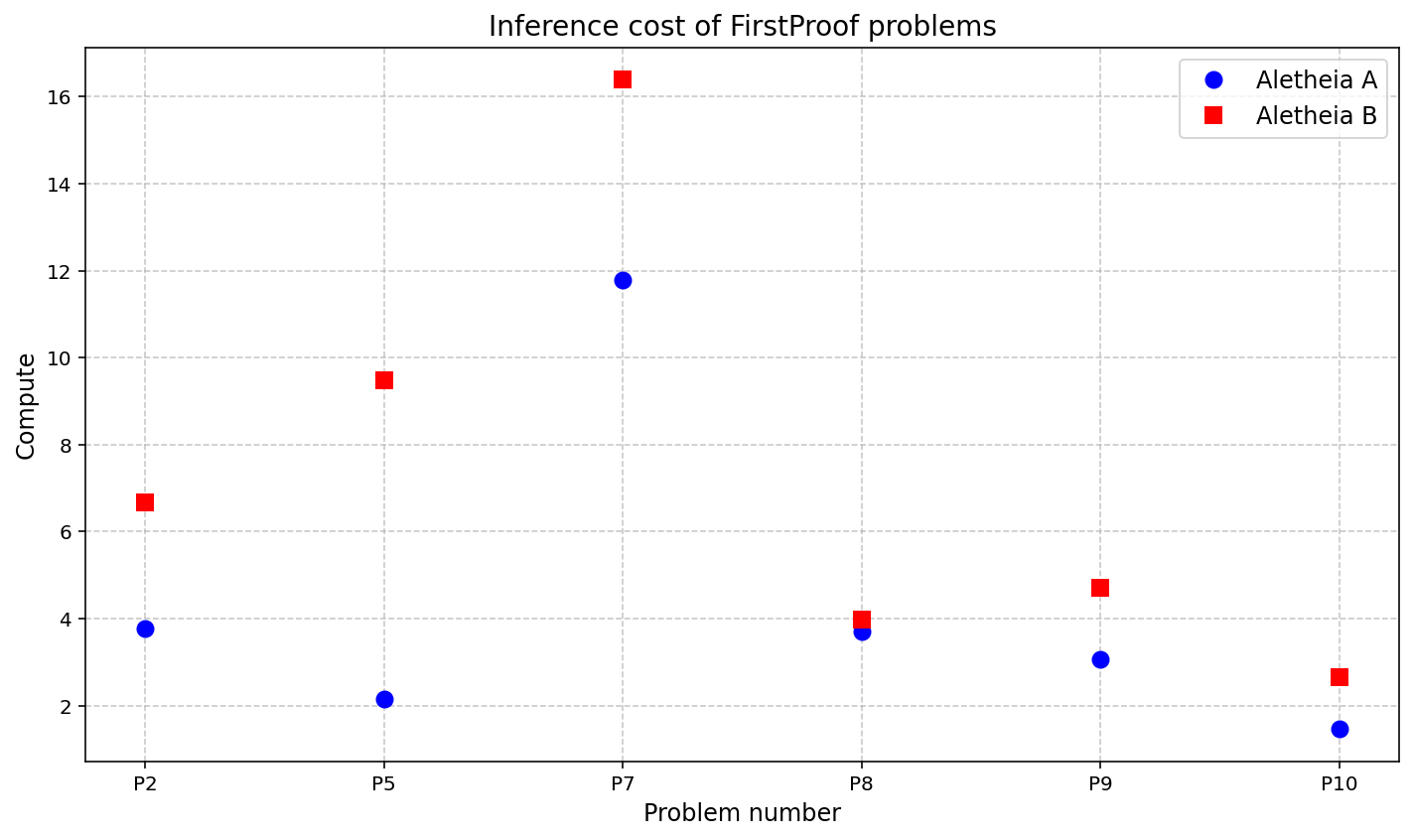

Automated reasoning in mathematics remains a significant challenge, often requiring substantial human intervention. This is addressed in ‘Aletheia tackles FirstProof autonomously’, which details the performance of a mathematics research agent, powered by Gemini 3 Deep Think, on the inaugural FirstProof challenge. Aletheia autonomously solved six of the ten presented problems, demonstrating a capacity for independent mathematical problem-solving and proof generation. Given these initial results, how can such agents be refined to reach greater mathematical proficiency and tackle increasingly complex problems?

Deconstructing Complexity: A Tensor’s Journey Through RKHS Constraints

Many data analysis challenges involve complex, multi-dimensional datasets, often represented as tensors. A powerful technique for simplifying these tensors is Canonical Polyadic (CP) decomposition, which expresses a tensor as a sum of rank-one tensors, revealing underlying structures and relationships. This process isn’t always straightforward, however, and typically requires solving a series of interconnected subproblems, one for each ‘mode’ or dimension of the original tensor. Specifically, a mode-k subproblem optimizes the factors of one mode while holding the others fixed, which replaces a joint optimization over all factors with a smaller problem in a single factor and makes the decomposition tractable. Solving these subproblems efficiently is crucial for scaling CP decomposition to large, real-world datasets, enabling insights in areas as diverse as recommender systems and medical imaging.
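To make the mode-k subproblem concrete, here is a minimal NumPy sketch for a three-way tensor. It shows the unconstrained least-squares update of one factor with the other two held fixed; the function names (`cp_reconstruct`, `khatri_rao`, `update_mode0`) and the tensor sizes are illustrative assumptions, and the RKHS constraint discussed in this article is deliberately left out.

```python
import numpy as np

def cp_reconstruct(A, B, C):
    """Sum of rank-one tensors: T[i, j, k] = sum_r A[i, r] * B[j, r] * C[k, r]."""
    return np.einsum("ir,jr,kr->ijk", A, B, C)

def khatri_rao(X, Y):
    """Column-wise Kronecker product; row (j * K + k) equals X[j] * Y[k]."""
    r = X.shape[1]
    return (X[:, None, :] * Y[None, :, :]).reshape(-1, r)

def update_mode0(T, B, C):
    """Least-squares update of the mode-0 factor with B and C held fixed."""
    Z = khatri_rao(B, C)                  # shape (J * K, r)
    T0 = T.reshape(T.shape[0], -1)        # mode-0 unfolding, shape (I, J * K)
    gram = (B.T @ B) * (C.T @ C)          # equals Z.T @ Z, only r x r
    # Normal equations: A = T0 Z (Z^T Z)^{-1}; gram is symmetric.
    return np.linalg.solve(gram, (T0 @ Z).T).T

# Illustrative usage on a synthetic rank-3 tensor.
rng = np.random.default_rng(0)
A_true = rng.standard_normal((5, 3))
B_fixed = rng.standard_normal((6, 3))
C_fixed = rng.standard_normal((7, 3))
T = cp_reconstruct(A_true, B_fixed, C_fixed)
A_hat = update_mode0(T, B_fixed, C_fixed)
```

Because the other factors are fixed, the update only ever solves an r x r system, which is why alternating over modes is a common way to organize CP decomposition.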

CP decomposition frequently requires constraints to guarantee a meaningful and valid solution. These constraints are often implemented using Reproducing Kernel Hilbert Spaces (RKHS), function spaces defined by a kernel function. By enforcing solutions to lie within an RKHS, the decomposition isn’t simply finding any set of factors, but rather those that exhibit desired smoothness or regularity properties. This is particularly crucial in applications like data analysis where the decomposed components represent underlying patterns or features; without RKHS constraints, these features might be noisy, unrealistic, or fail to generalize effectively. The use of RKHS, therefore, shifts the decomposition from a purely algebraic problem to one rooted in functional analysis, so that the extracted components are not only mathematically sound but also interpretable and robust.
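One common way to impose an RKHS constraint, sketched below under stated assumptions, is to write each factor column as a combination of kernel functions, so that the factor is `K @ W` for a kernel matrix `K` and a coefficient matrix `W`. The Gaussian (RBF) kernel, the lengthscale, the grid `x`, and the names `rbf_kernel`, `W`, and `penalty` are all illustrative choices, not details taken from the source.

```python
import numpy as np

def rbf_kernel(x, y, lengthscale=1.0):
    """Gaussian kernel k(x, y) = exp(-(x - y)^2 / (2 * lengthscale^2))."""
    d2 = (x[:, None] - y[None, :]) ** 2
    return np.exp(-d2 / (2.0 * lengthscale ** 2))

x = np.linspace(0.0, 1.0, 50)             # hypothetical sample locations along one mode
K = rbf_kernel(x, x, lengthscale=0.2)     # 50 x 50 kernel (Gram) matrix

rng = np.random.default_rng(0)
W = rng.standard_normal((50, 3))          # coefficients for 3 components

A = K @ W                                 # factor columns lie in the span of kernel functions
penalty = np.trace(W.T @ K @ W)           # sum of squared RKHS norms of the columns
```

With this parameterization the unknown becomes `W` rather than `A`, and a term such as `penalty` can be added to the objective to favor smooth components.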

Existing techniques for solving the mode-k subproblem in Canonical Polyadic (CP) decomposition often falter when Reproducing Kernel Hilbert Space (RKHS) constraints are applied. These constraints, while crucial for ensuring meaningful and valid solutions, introduce a significant computational burden due to the need for kernel evaluations and optimization over function spaces. The complexity scales rapidly with the size of the dataset and the dimensionality of the RKHS, rendering traditional iterative methods, such as gradient descent, prohibitively slow for large-scale applications. Consequently, researchers face challenges in applying CP decomposition with RKHS constraints to complex datasets, limiting its practical utility in fields like recommender systems, image processing, and psychometrics where scalability is paramount.

Accelerating the Inevitable: The Art of Preconditioning

The linear systems resulting from the discretization of the governing equations are solved iteratively with the preconditioned conjugate gradient method. For large-scale problems, this method offers computational advantages over direct solvers because it needs only matrix-vector products and never factorizes the system matrix. The conjugate gradient method refines an initial guess for the solution vector along a sequence of mutually conjugate search directions, minimizing the error in the energy norm at each step, and preconditioning is employed to improve the spectral properties of the system, leading to faster convergence. The efficiency of this iterative process depends on the condition number of the preconditioned matrix; lower condition numbers generally result in fewer iterations and faster solution times.
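The iteration described above can be sketched in a few lines of NumPy. This is the textbook preconditioned conjugate gradient loop for a symmetric positive definite system, not the paper's specific solver; the names `pcg`, `matvec`, and `apply_precond`, the tolerance, and the test matrix are assumptions made for illustration.

```python
import numpy as np

def pcg(matvec, b, apply_precond, tol=1e-10, max_iter=500):
    """Solve A x = b for SPD A, given v -> A v and r -> M^{-1} r as callables."""
    x = np.zeros_like(b)
    r = b - matvec(x)                     # initial residual
    z = apply_precond(r)                  # preconditioned residual
    p = z.copy()                          # first search direction
    rz = r @ z
    for it in range(max_iter):
        Ap = matvec(p)
        alpha = rz / (p @ Ap)             # step length along p
        x += alpha * p
        r -= alpha * Ap
        if np.linalg.norm(r) <= tol * np.linalg.norm(b):
            return x, it + 1
        z = apply_precond(r)
        rz_new = r @ z
        p = z + (rz_new / rz) * p         # next direction, conjugate to the previous ones
        rz = rz_new
    return x, max_iter

# Illustrative usage with a diagonal (Jacobi) preconditioner.
rng = np.random.default_rng(1)
G = rng.standard_normal((30, 30))
A_demo = G @ G.T + 30.0 * np.eye(30)      # symmetric positive definite
b_demo = rng.standard_normal(30)
x_demo, n_iter = pcg(lambda v: A_demo @ v, b_demo, lambda r: r / np.diag(A_demo))
```

The solver touches the matrix only through `matvec`, so any structure that makes that product cheap carries over directly to the cost per iteration.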

Preconditioning techniques enhance the convergence rate of iterative solvers by transforming the original linear system A x = b into an equivalent system that is easier to solve. This is achieved by applying the inverse of a preconditioning matrix, M, to both sides, resulting in the preconditioned system M^{-1}A x = M^{-1}b. The effectiveness of preconditioning is directly related to the condition number of the preconditioned matrix M^{-1}A; a lower condition number indicates a better-conditioned system and, consequently, faster convergence of the iterative solver. A perfect preconditioner (M = A) would yield a condition number of 1, but applying it would cost as much as solving the original system; in practice, the goal is to significantly reduce the condition number compared to the original matrix A while keeping M cheap to apply. The selection of an appropriate preconditioning technique depends heavily on the properties of the matrix A and the computational cost associated with applying the preconditioner.
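The effect on the condition number can be checked numerically. The sketch below builds a badly scaled symmetric positive definite matrix and applies a diagonal (Jacobi) preconditioner, M = diag(A); the test matrix and all variable names are illustrative assumptions. The symmetric form M^{-1/2} A M^{-1/2} is used because it has the same eigenvalues as M^{-1}A.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 40
G = rng.standard_normal((n, n))
B = np.eye(n) + 0.1 * (G @ G.T) / n           # well-conditioned SPD core
scales = np.logspace(0, 3, n)                 # row/column scales spanning three decades
A = scales[:, None] * B * scales[None, :]     # A = D B D, still SPD but badly scaled

# Jacobi preconditioner M = diag(A), applied symmetrically.
d_inv_sqrt = 1.0 / np.sqrt(np.diag(A))
A_pre = d_inv_sqrt[:, None] * A * d_inv_sqrt[None, :]

kappa_raw = np.linalg.cond(A)
kappa_pre = np.linalg.cond(A_pre)
print(f"cond(A) = {kappa_raw:.2e}, cond(preconditioned) = {kappa_pre:.2f}")
```

Here the preconditioner removes the bad scaling entirely, which is the best case for a diagonal choice; for matrices whose ill-conditioning is not caused by scaling, a richer M is needed.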

The computational cost of matrix-vector products significantly impacts the efficiency of iterative solvers and preconditioning routines. Each iteration of a preconditioned conjugate gradient method requires at least one application of the system matrix to a vector, and many preconditioning techniques add further products of their own. Consequently, the time required for these matrix-vector products often dominates the overall solution time. Optimizations such as sparse matrix storage formats, efficient data access patterns, and the use of optimized linear algebra libraries are critical for minimizing this cost and achieving acceptable performance, particularly for large-scale problems. The cost of a sparse matrix-vector multiplication is O(nnz), where nnz is the number of non-zero elements in the matrix, making it a primary target for performance improvement.
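As an illustration of why storage format matters, the following sketch implements a matrix-vector product in compressed sparse row (CSR) form with plain NumPy. The helper names `dense_to_csr` and `csr_matvec` are made up for this example; in practice an optimized library routine would be used instead of a Python loop.

```python
import numpy as np

def dense_to_csr(A):
    """Store only the non-zeros of A: values, column indices, and row pointers."""
    data, indices, indptr = [], [], [0]
    for row in A:
        nz = np.nonzero(row)[0]
        data.extend(row[nz])
        indices.extend(nz)
        indptr.append(len(data))          # where the next row starts
    return (np.array(data, dtype=float),
            np.array(indices, dtype=int),
            np.array(indptr, dtype=int))

def csr_matvec(data, indices, indptr, x):
    """Compute y = A x, touching each stored non-zero exactly once."""
    y = np.zeros(len(indptr) - 1)
    for i in range(len(y)):
        lo, hi = indptr[i], indptr[i + 1]
        y[i] = data[lo:hi] @ x[indices[lo:hi]]
    return y

# Illustrative usage on a small matrix with an empty row.
A_small = np.array([[2.0, 0.0, 1.0],
                    [0.0, 0.0, 0.0],
                    [0.0, 3.0, 0.0]])
x_small = np.array([1.0, 2.0, 3.0])
y_small = csr_matvec(*dense_to_csr(A_small), x_small)
```

The work is proportional to the number of stored entries rather than to the full matrix size, which is the O(nnz) behavior referred to above.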

Beyond the Block Jacobi: A Novel Kernel Preconditioner

Prior to the development of the inverse-free kernel preconditioner, established iterative refinement techniques were investigated and adapted for this problem. The block-Jacobi method, a common choice for preconditioning linear systems, was initially explored as a baseline. Modifications were necessary to accommodate the specific structure of the arising kernel matrices and to optimize performance within the RKHS framework. This involved careful consideration of block sizes and ordering to minimize fill-in during decomposition and to maximize parallelization. While the extended block-Jacobi method provided a functional preconditioner, its performance was ultimately limited by the computational cost of factorization and the condition number of the preconditioned system, motivating the development of the more efficient inverse-free approach.
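For orientation, a generic block-Jacobi preconditioner can be sketched as follows: the diagonal blocks of the system matrix are factorized once, and each application solves with those blocks independently. This is the standard textbook construction, not the extended variant described above; `make_block_jacobi`, the block size, and the test matrix are illustrative assumptions.

```python
import numpy as np

def make_block_jacobi(A, block_size):
    """Return r -> M^{-1} r, where M keeps only the diagonal blocks of SPD matrix A."""
    n = A.shape[0]
    factors = []
    for s in range(0, n, block_size):
        block = A[s:s + block_size, s:s + block_size]
        factors.append((s, np.linalg.cholesky(block)))   # factorize each block once
    def apply(r):
        z = np.empty_like(r)
        for s, L in factors:
            e = s + L.shape[0]
            y = np.linalg.solve(L, r[s:e])               # solve L y = r_block
            z[s:e] = np.linalg.solve(L.T, y)             # solve L^T z_block = y
        return z
    return apply

# Illustrative usage: an SPD matrix with weak coupling between blocks.
rng = np.random.default_rng(2)
G = rng.standard_normal((6, 6))
A_bj = G @ G.T + 6.0 * np.eye(6)
apply_bj = make_block_jacobi(A_bj, block_size=3)
z_bj = apply_bj(np.ones(6))
```

The blocks are independent, so the solves parallelize well, but the factorization cost grows cubically with the block size, which matches the limitation noted above.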

The introduced kernel preconditioner avoids the computational bottleneck of explicitly forming and inverting the kernel matrix, a process with O(n^3) complexity for an n \times n matrix. Instead, this method employs iterative techniques to implicitly apply the inverse of the kernel operator without ever materializing the full kernel matrix. This approach reduces the computational cost from cubic to near-linear, scaling as O(n) or O(n \log n) depending on the specific iterative solver and kernel properties. The resulting reduction in computational demand and memory requirements enables the effective solution of significantly larger problem instances compared to methods reliant on direct kernel matrix inversion.
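The idea of applying an operator without materializing it can be illustrated with a small sketch. It assumes, purely for illustration, that the system operator has the Kronecker form (K ⊗ G) + λI, with an n x n kernel matrix `K`, a small r x r matrix `G`, and a ridge parameter `lam`; this structure and the name `apply_operator` are hypothetical and are not claimed to be the paper's operator.

```python
import numpy as np

def apply_operator(K, G, lam, x):
    """Compute ((K kron G) + lam * I) @ x without forming the (n*r) x (n*r) matrix."""
    n, r = K.shape[0], G.shape[0]
    X = x.reshape(n, r)                       # row-major reshape of the unknowns
    return (K @ X @ G.T).reshape(-1) + lam * x

# Illustrative check against the explicitly formed matrix on a tiny instance.
rng = np.random.default_rng(3)
n, r, lam = 8, 3, 0.5
K = rng.standard_normal((n, n)); K = K @ K.T  # symmetric positive semidefinite
G = rng.standard_normal((r, r)); G = G @ G.T
x = rng.standard_normal(n * r)

y_fast = apply_operator(K, G, lam, x)
y_dense = (np.kron(K, G) + lam * np.eye(n * r)) @ x
```

The structured product costs O(n^2 r + n r^2) operations instead of O(n^2 r^2), and a callable like this is all an iterative solver such as conjugate gradient needs.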

The developed kernel preconditioner exploits the constraints inherent in the Reproducing Kernel Hilbert Space (RKHS) formulation to achieve performance gains over conventional iterative solvers. By directly incorporating RKHS properties into the preconditioning process, the method avoids the computational bottlenecks associated with explicitly forming and inverting the kernel matrix – a significant limitation of standard kernel-based methods when dealing with large datasets. This approach results in substantially faster convergence rates for large-scale problems, as the preconditioning effectively reduces the condition number of the system without the quadratic complexity of full kernel matrix operations. The preconditioner’s efficacy stems from its ability to approximate the inverse of the relevant operator using only kernel evaluations and constraints, thereby scaling more favorably with problem size.

The Long View: Scalability and Computational Complexity

A central component of this research involves a detailed examination of computational complexity, meticulously considering the influence of both dataset size and the dimensionality of the tensors being processed. This analysis isn’t simply about measuring processing time; it’s a fundamental investigation into how the method’s demands on computational resources – memory and processing cycles – change as problem scale increases. Understanding these relationships is crucial for predicting performance on real-world datasets and identifying potential bottlenecks. The work specifically assesses how the algorithm’s efficiency is affected by variations in these parameters, paving the way for optimized implementations and scalable solutions capable of handling increasingly complex challenges in areas like machine learning and data analysis. This detailed analysis informs strategies for resource allocation and algorithm refinement, ensuring practical applicability and long-term viability.

The proposed method exhibits favorable scalability for large-scale problems, achieving a computational complexity of O(n^3r^3). This indicates that the computational cost grows proportionally to the cube of both the problem size, denoted by ‘n’, and a related parameter ‘r’. Crucially, this scaling behavior allows for sustained performance gains as the problem dimensions increase, avoiding the performance bottlenecks often encountered with methods that exhibit higher-order polynomial complexities. The efficiency stems from a design prioritizing operations that minimize computational demands, enabling effective processing of substantial datasets and intricate models without prohibitive resource requirements. This favorable scaling characteristic positions the method as a viable solution for increasingly complex computational challenges.

The method’s efficiency stems from a computational complexity that remains independent of dataset size, N. Instead, the iterative cost is governed by the condition number of the preconditioned matrix – a parameter actively minimized through deliberate preconditioner selection. This strategic approach circumvents computationally expensive kernel inversions, instead prioritizing optimized matrix-vector products to dramatically lessen the overall processing load. Consequently, the presented technique demonstrates a substantial reduction in computational burden when contrasted with conventional methods, offering a pathway to more scalable and efficient solutions for large-scale problems.
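The dependence of the iteration count on the condition number can be made explicit with the standard conjugate gradient error bound. This is a textbook result rather than a statement from the source; the symbols are introduced here for illustration, with \kappa the condition number of the preconditioned matrix, x_k the k-th iterate, x_* the exact solution, and \varepsilon a target relative accuracy.

```latex
\| x_k - x_* \|_A \;\le\; 2 \left( \frac{\sqrt{\kappa} - 1}{\sqrt{\kappa} + 1} \right)^{k} \| x_0 - x_* \|_A
\quad\Longrightarrow\quad
k = O\!\left( \sqrt{\kappa}\, \log \frac{1}{\varepsilon} \right)
```

The dataset size N does not appear in the bound, so it can influence the number of iterations only through \kappa, which is the quantity the preconditioner is chosen to control.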

The pursuit of efficiency in tensor decomposition, as detailed in this work, echoes a fundamental truth about all systems: they evolve, and optimization is a constant negotiation with time. The method presented, which uses iterative preconditioning to bypass dataset size limitations, isn’t merely a technical achievement; it’s an attempt to sculpt a more graceful decay. As David Hilbert declared, “We must know. We will know.” This ambition, to overcome limitations and push boundaries, is precisely what drives the development of techniques like RKHS-constrained CP decomposition, a striving for a complete, unburdened solution within the constraints of computational reality. The elegance lies in acknowledging the inevitable march of complexity and building systems resilient enough to withstand it.

The Horizon Recedes

The elegance of Aletheia’s approach, achieving dataset-independent complexity in a notoriously difficult problem, is not, however, a claim to finality. Every efficient solution merely shifts the locus of difficulty. Here, the iterative preconditioning, while potent, relies on a specific algebraic structure within the kernel matrix. The inevitable question becomes: how gracefully does this decomposition degrade when confronted with kernels possessing limited, or intentionally obfuscated, geometric properties? Future work must address the robustness, or lack thereof, in scenarios where the underlying assumptions are subtly, or aggressively, violated.

Furthermore, the current framework centers on the mode-k subproblem. Tensor decomposition, in its essence, is a holistic pursuit. A truly resilient architecture will demand a unified treatment of all modes, acknowledging that improvements in one dimension may introduce unforeseen vulnerabilities in others. Transfer learning, while promising, remains a partial solution (a borrowed elegance, perhaps) until the core decomposition itself is demonstrably invariant to subtle shifts in data provenance.

It is a curious truth that every optimization is, at its heart, a negotiation with decay. Aletheia buys time and reduces computational burden, but does not abolish the fundamental challenge of extracting signal from noise. The longevity of this approach will not be measured in speed, but in its ability to adapt, to age gracefully, as the landscape of kernel methods continues to evolve.

Original article: https://arxiv.org/pdf/2602.21201.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/


2026-02-26 05:56