Author: Denis Avetisyan

New research reveals that computational velocity, not just information access, now dictates price discovery, creating a growing disconnect between market efficiency and investor confidence.

This review demonstrates that increasing computational asymmetry necessitates automated regulatory frameworks to address welfare economics concerns arising from agentic velocity and semantic friction.

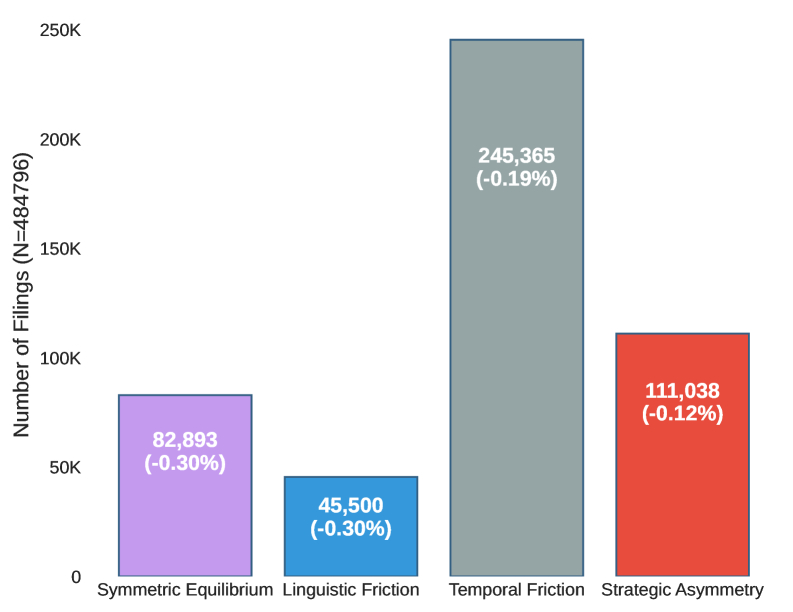

Despite assumptions of efficient markets, information asymmetry persists because increasingly sophisticated strategies exploit computational limits in price discovery. This research, ‘The Strategic Gap: How AI-Driven Timing and Complexity Shape Investor Trust in the Age of Digital Agents’, introduces an Autonomous Disclosure Regulator (ADR), an AI framework showing that companies can strategically manipulate disclosure timing and complexity to delay the absorption of truthful information by as much as 60%. This ‘Strategic Gap’ points to a fundamental computational asymmetry: investor vulnerability stems not from a lack of data but from the velocity of automated processing. As the cost of integrating information rises, can a transition toward agentic regulatory states, in which regulators act as active auditing nodes capable of real-time synthesis, preserve market integrity and restore investor trust?

The Persistence of Informational Asymmetry: A Systemic Deficiency

Even with heightened regulatory oversight in financial markets, a significant ‘Strategic Gap’ continues to impede accurate price discovery. This gap arises not from a lack of data, but from a fundamental asymmetry in how that information is accessed and interpreted by different market participants. While regulations aim to increase transparency by requiring disclosures, they often fail to account for the varying capacities of firms to analyze, disseminate, and react to this information. Consequently, certain entities possess a distinct advantage, enabling them to exploit informational nuances before they are fully reflected in asset prices. This persistent imbalance creates opportunities for strategic behavior, where informed parties can profit at the expense of those with less access or analytical capability, ultimately distorting the efficiency of price signals and undermining the intended benefits of increased disclosure.

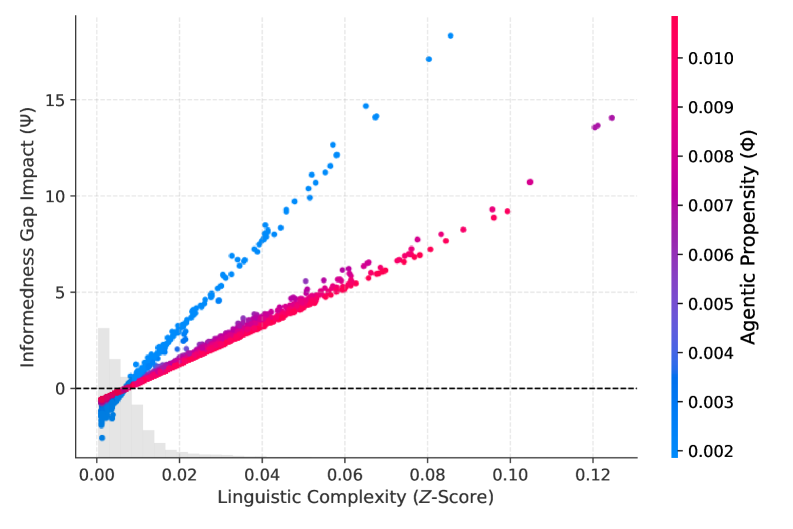

Conventional financial disclosure systems operate on the premise of perfectly rational actors capable of seamlessly interpreting complex information, yet mounting evidence demonstrates this is a flawed foundation. Human cognition is inherently limited; individuals employ heuristics and are susceptible to biases when processing data, particularly within the dense and often intentionally obfuscated language of financial filings. Semantic complexity – the use of jargon, lengthy sentences, and convoluted phrasing – further exacerbates these cognitive constraints, creating a significant barrier to effective comprehension. This mismatch between the idealized assumptions of disclosure frameworks and the realities of human information processing contributes directly to market inefficiencies, as crucial data fails to translate into informed investment decisions and accurate price discovery – effectively undermining the intended benefits of transparency.

The First Theorem of Welfare Economics holds that competitive markets with perfect information reach Pareto-efficient outcomes, but contemporary financial disclosures demonstrably impede this ideal. The study argues that the volume and complexity of reported data, combined with cognitive biases in processing it, create a bottleneck in price discovery. It quantifies this inefficiency as a ‘Price Discovery Velocity’ (V) of 1.23 inside what it terms the ‘Strategic Gap’, a 60% reduction from the velocity expected under efficient market conditions. In short, information does not flow quickly enough for prices to reflect underlying value. Disclosure frameworks meant to enhance transparency therefore end up hindering the efficiency they were designed to deliver, creating a systemic disconnect between reported data and market valuations.
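
The summary reports V = 1.23 and a 60% reduction but does not state the efficient-market benchmark. The sketch below assumes the percentage is a relative shortfall, V = (1 − 0.60) · V_eff, and recovers the implied benchmark. Both the reading of the percentage and the function name `implied_benchmark` are assumptions, not the paper's definitions.

```python
def implied_benchmark(observed_v: float, reduction: float) -> float:
    """Benchmark velocity implied by observed_v = (1 - reduction) * benchmark.

    Assumes the reported percentage is a relative reduction from the
    efficient-market benchmark; the paper may define it differently.
    """
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must lie in [0, 1)")
    return observed_v / (1.0 - reduction)


v_gap = 1.23        # Price Discovery Velocity reported inside the Strategic Gap
reduction = 0.60    # reported 60% shortfall relative to efficient conditions
v_efficient = implied_benchmark(v_gap, reduction)
print(f"implied efficient-market velocity: {v_efficient:.3f}")
```

Under this reading, the efficient benchmark would be about 3.08. A different definition, such as a 60% longer absorption delay, would imply a different benchmark.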

Quantifying Semantic Friction: The Root of Information Asymmetry

The ‘Strategic Gap’, the disparity between what corporate managers know and what external stakeholders can readily extract, is widened by ‘Semantic Friction’. Semantic friction is the difficulty of accurately interpreting the complex language of corporate disclosures and turning it into actionable intelligence. Dense prose, specialized terminology, and intricate sentence structures all slow information processing. The result is a delayed or inaccurate understanding of a company’s financial performance and risks. That widens information asymmetry, contributes to mispricing, and hinders effective capital allocation.
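
The summary does not say how the paper measures semantic friction. One common, easily computed proxy for linguistic complexity in financial filings is the Gunning Fog readability index: 0.4 × (average sentence length + percentage of words with three or more syllables). The sketch below is a swapped-in illustration, not the paper's metric. Its syllable counter is a rough vowel-group heuristic.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable count: number of vowel groups, minus a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and count > 1 and not word.endswith("le"):
        count -= 1
    return max(count, 1)


def gunning_fog(text: str) -> float:
    """Gunning Fog index: 0.4 * (avg sentence length + % words with 3+ syllables)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z]+", text)
    if not sentences or not words:
        return 0.0
    complex_words = [w for w in words if count_syllables(w) >= 3]
    avg_sentence_len = len(words) / len(sentences)
    pct_complex = 100.0 * len(complex_words) / len(words)
    return 0.4 * (avg_sentence_len + pct_complex)


plain = "Sales fell. We lost money."
dense = ("Notwithstanding macroeconomic headwinds, consolidated operational "
         "profitability experienced a temporary moderation.")
print(gunning_fog(plain), gunning_fog(dense))
```

Both sentences report the same bad news, but the second scores far higher. This is the kind of gap the Strategic Obfuscation Hypothesis is concerned with. Naive sentence splitting on periods will miscount abbreviations such as "Inc.", so treat the output as a rough signal.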

Traditional methods of financial text analysis, such as lexical analysis and the utilization of lexical dictionaries, prove inadequate due to the inherent complexities of natural language. These approaches rely on identifying keywords and counting their frequency, failing to account for contextual meaning, synonyms, polysemy, and the grammatical structure that significantly alters interpretation. Specifically, they cannot reliably differentiate between positive and negative sentiment expressed through nuanced phrasing, identify implicit information, or resolve ambiguities common in financial disclosures. This limitation results in a superficial understanding of the text and hinders the extraction of actionable insights, particularly concerning material events or managerial intent.
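
The weakness of keyword counting is easy to show. The sketch below scores tone as (positive hits − negative hits) / total words, using tiny hypothetical word lists. Real studies use curated finance dictionaries with thousands of entries, but the context blindness is the same.

```python
import re

# Toy word lists for illustration only.
POSITIVE = {"improve", "improved", "growth", "profitable", "strong"}
NEGATIVE = {"loss", "decline", "declined", "impairment", "weak", "adverse"}


def dictionary_tone(text: str) -> float:
    """Net tone = (positive hits - negative hits) / total words; ignores context."""
    words = re.findall(r"[a-z]+", text.lower())
    if not words:
        return 0.0
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    return (pos - neg) / len(words)


candid = "Margins declined and the segment reported a loss."
hedged = "Margins did not improve and the segment was not profitable."
print(dictionary_tone(candid), dictionary_tone(hedged))
```

Both sentences describe deterioration. The candid one scores negative, while the negated one scores positive because "not" is invisible to the word lists. A disclosure writer can exploit exactly this gap.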

The Strategic Obfuscation Hypothesis holds that corporate managers may deliberately use complex or ambiguous language in financial disclosures to conceal negative or materially significant information from investors. Such intentional complexity adds to semantic friction and hinders accurate interpretation of financial performance. The paper reports a ‘Welfare Recovery Potential’ (Γ) of 360,050%. This is its estimate of the aggregate benefit to investors if these linguistic asymmetries were mitigated, allowing more efficient market pricing and resource allocation. The figure comes from calculations of how far information asymmetry would fall and how much investment decisions would improve as a result.

The Autonomous Disclosure Regulator: A Paradigm Shift in Auditing

The Autonomous Disclosure Regulator (ADR) is designed to model the gap between what a filing entity knew when it prepared a disclosure and what regulators can see when they review it. It does this by reconstructing the disclosure timeline and assessing where information may have been withheld or obscured. Rather than simply reviewing filings, the ADR rebuilds the informational environment around them to locate gaps and potential non-compliance. Traditional auditing, by contrast, happens after submission and relies on retrospective analysis of the data provided. Because the ADR audits the process of disclosure and not just the disclosed content, it can detect informedness gaps earlier and make regulatory responses faster.
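
The timeline reconstruction can be pictured as measuring, for each material event, how long it stayed known internally but undisclosed. The sketch below is a minimal illustration under assumed inputs. The `MaterialEvent` record, the `informedness_gaps` function, and the four-day threshold are all hypothetical and are not taken from the paper or from any regulation.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class MaterialEvent:
    """Hypothetical record: when an event became known internally and when it was filed."""
    name: str
    known_on: date
    disclosed_on: Optional[date]  # None if not yet disclosed


def informedness_gaps(events, as_of: date, max_lag_days: int = 4):
    """Return (name, lag_days, overdue) for each event.

    lag_days runs from internal knowledge to disclosure, or to `as_of` if the
    event is still undisclosed. `max_lag_days` is an illustrative threshold.
    """
    report = []
    for ev in events:
        end = ev.disclosed_on or as_of
        lag = (end - ev.known_on).days
        report.append((ev.name, lag, lag > max_lag_days))
    return report


events = [
    MaterialEvent("covenant breach", date(2025, 3, 3), date(2025, 3, 5)),
    MaterialEvent("major customer loss", date(2025, 3, 10), None),
]
for row in informedness_gaps(events, as_of=date(2025, 3, 31)):
    print(row)
```

In practice the hard part is establishing `known_on` at all. That requires the reconstruction of the informational environment described above, which this sketch takes as given.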

The Autonomous Disclosure Regulator (ADR) uses Transformer Embeddings and the FinText natural language processing library to go beyond keyword searches and assess the semantic content of financial disclosures. This yields a more nuanced reading of reported information than traditional methods, which rely on predefined criteria and can be manipulated through linguistic variation. The paper reports a 20.88% reduction in the computational search space needed for effective regulatory oversight, a measure it calls ‘Audit Precision’, and attributes this directly to the semantic extraction capability. By identifying relevant information more accurately and efficiently, the system lowers the burden on human reviewers and strengthens compliance monitoring.
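
The idea of shrinking the search space through semantic similarity can be sketched with cosine similarity. Each passage's embedding is compared with a query embedding, and only close matches go to a human reviewer. The sketch does not use FinText or any transformer model. The 3-dimensional vectors are toy stand-ins for real embeddings, and the threshold of 0.8 and the `triage` function are assumptions. The 50% reduction it produces says nothing about the paper's 20.88% figure.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def triage(passages, query_vec, threshold=0.8):
    """Keep passages semantically close to the query; report the share pruned."""
    if not passages:
        return [], 0.0
    kept = [pid for pid, vec in passages.items() if cosine(vec, query_vec) >= threshold]
    reduction = 1.0 - len(kept) / len(passages)
    return kept, reduction


# Toy vectors standing in for transformer embeddings of filing passages.
passages = {
    "risk_factors_p3": [0.9, 0.1, 0.0],
    "mdna_p7":         [0.8, 0.3, 0.1],
    "exhibit_index":   [0.0, 0.1, 0.9],
    "board_bios":      [0.1, 0.9, 0.1],
}
query = [1.0, 0.2, 0.0]  # hypothetical embedding of "deteriorating liquidity"
kept, reduction = triage(passages, query)
print(kept, f"search space reduced by {reduction:.0%}")
```

Unlike a keyword filter, this ranking would also surface a passage that discusses liquidity stress without using the query's exact words, provided the embedding model places it nearby.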

The Autonomous Disclosure Regulator utilizes ‘Chronos-Small’ for detailed temporal analysis of disclosures, allowing for the identification of patterns and anomalies over time. Complementing this, the ‘Blackwell Experiment’ framework implements stochastic modeling to simulate disclosure dynamics under various conditions. Combined, these components enable a robust assessment of filing behavior and potential regulatory risks. Performance testing demonstrates a ‘System Resilience (Rsys)’ of 99.98% under sustained high-volume data ingestion, indicating consistent operation and data integrity even during peak loads. This resilience is critical for maintaining continuous oversight and ensuring timely regulatory response.
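
Chronos-Small is a learned time-series forecaster. The general pattern of forecast-based anomaly detection is to predict the next value, then flag observations whose residual is unusually large. The sketch below swaps in a naive trailing-mean forecast for Chronos and applies it to a hypothetical series of filing lags. The window of 4, the threshold k = 3, and the data are illustrative assumptions.

```python
import statistics


def flag_timing_anomalies(series, window=4, k=3.0):
    """Flag points deviating from a trailing-window forecast by more than k std devs.

    The trailing mean is a naive stand-in for a learned forecaster such as
    Chronos-Small; only the residual-thresholding idea is illustrated.
    Requires window >= 2 so that a standard deviation is defined.
    """
    flags = []
    for t in range(window, len(series)):
        history = series[t - window:t]
        forecast = statistics.mean(history)
        spread = statistics.stdev(history) or 1e-9  # guard against a flat history
        z = (series[t] - forecast) / spread
        if abs(z) > k:
            flags.append((t, round(z, 2)))
    return flags


# Days between quarter end and filing, quarter by quarter (illustrative data).
filing_lag_days = [38, 40, 39, 41, 40, 39, 58, 40]
flags = flag_timing_anomalies(filing_lag_days)
print(flags)
```

Only the seventh quarter (index 6), filed 58 days after quarter end, is flagged. Replacing the trailing mean with a probabilistic forecaster mainly changes how the expected value and its spread are estimated.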

From Detection to Intervention: A Proactive Regulatory Stance

The Autonomous Disclosure Regulator (ADR) marks a fundamental shift in regulatory strategy, from reactive oversight to proactive intervention. Agencies have traditionally responded to information after it was disclosed. The ADR instead analyzes incoming data continuously and flags anomalies and potential issues as they emerge. This ‘Agentic Pivot’ lets regulators anticipate and address risks in real time rather than react to completed events. Beyond flagging discrepancies, the system prioritizes concerns, suggests investigative pathways, and speeds up the work of ensuring transparency and accountability. The intended result is a regulatory process that can keep pace with the complexity of modern financial and corporate disclosure.
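
Prioritizing concerns can be sketched as a max-priority queue over scored alerts, so that reviewers always see the most severe open issue first. The `TriageQueue` class, its severity scale, and the sample alerts are hypothetical. The summary does not describe how the ADR scores or orders its findings.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Alert:
    # heapq is a min-heap, so the severity is stored negated to pop the worst first.
    sort_key: float
    filer: str = field(compare=False)
    issue: str = field(compare=False)


class TriageQueue:
    """Hypothetical queue that surfaces the most severe open alert first."""

    def __init__(self):
        self._heap = []

    def push(self, filer: str, issue: str, severity: float) -> None:
        heapq.heappush(self._heap, Alert(-severity, filer, issue))

    def pop(self):
        alert = heapq.heappop(self._heap)
        return alert.filer, alert.issue, -alert.sort_key

    def __len__(self):
        return len(self._heap)


q = TriageQueue()
q.push("FirmA", "late current report", 0.4)
q.push("FirmB", "readability spike in MD&A", 0.9)
q.push("FirmC", "restated segment data", 0.7)
order = [q.pop()[0] for _ in range(len(q))]
print(order)
```

A heap keeps each push and pop at O(log n), which matters if alerts arrive continuously rather than in batches.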

The system’s data integrity rests on ‘Stateful Audit Trails’ built with LangGraph, a framework for stateful, multi-step agent workflows, which the ADR uses to track every disclosure-related activity continuously. The trails record not only what changed but also the reasoning behind each modification, the agents involved, and the order of events. This granularity makes anomalies in disclosure patterns visible and provides an immutable record for verification and accountability. Because the state of the data is captured at each stage, the trails support both retrospective analysis, such as pinpointing where a discrepancy originated, and proactive alerts when activity deviates from established protocols.
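
Immutability in an audit trail is usually achieved by making tampering detectable. The sketch below does not use LangGraph's API. It illustrates a generic hash-chained, append-only log in which each entry records the agent, action, reasoning, and state snapshot, and also commits to the hash of the previous entry. The `AuditTrail` class and its field names are assumptions made for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditTrail:
    """Append-only log; each entry commits to the hash of the previous one.

    Editing any earlier entry changes its hash and breaks the chain,
    so verify() detects the tampering.
    """

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, agent: str, action: str, reason: str, state: dict) -> str:
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "reason": reason,
            "state": state,
            "prev": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        digest = _digest(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.append("extractor", "parse_filing", "new quarterly report received", {"filer": "FirmA", "pages": 84})
trail.append("supervisor", "escalate", "readability jump", {"filer": "FirmA", "priority": "high"})
ok_before = trail.verify()
trail.entries[0]["state"]["pages"] = 12  # simulate a silent edit
ok_after = trail.verify()
print(ok_before, ok_after)
```

The chain only makes edits detectable. Preventing them still depends on where the log is stored and who can write to it.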

The system detects obscured material deterioration through a process of ‘Recursive Synthesis’ guided by a ‘Deep Research Supervisor’. Rather than doing simple pattern matching, the system builds and evaluates complex relationships within disclosed data to find subtle structural breaks that may signal concealed issues. The supervisor recursively combines information from multiple sources and directs investigative effort toward the most critical anomalies. Applied this way, the system detected 39 high-priority instances of obscured material deterioration. The study presents this as a significant advance in proactive risk identification over traditional, static audit methods, which do not look beyond surface-level disclosure patterns.
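
The summary does not specify how Recursive Synthesis locates structural breaks. A textbook building block is a least-squares search for a single mean shift: try every split point and keep the one that minimizes the combined squared error of the two segments. The sketch below shows only that basic technique, applied to a hypothetical quarterly receivables-to-sales ratio. It is not the paper's method.

```python
def best_single_break(series, min_seg=2):
    """Find the split that best divides a series into two constant-mean segments.

    Returns (index, sse): index is the first point of the second segment,
    sse the total squared error of the two-segment fit. Returns None if the
    series is shorter than 2 * min_seg.
    """
    def sse(seg):
        m = sum(seg) / len(seg)
        return sum((x - m) ** 2 for x in seg)

    best = None
    for k in range(min_seg, len(series) - min_seg + 1):
        cost = sse(series[:k]) + sse(series[k:])
        if best is None or cost < best[1]:
            best = (k, cost)
    return best


# Hypothetical quarterly receivables-to-sales ratio for one filer.
ratios = [0.21, 0.22, 0.20, 0.21, 0.30, 0.31, 0.29, 0.30]
print(best_single_break(ratios))
```

The split lands at index 4, where the ratio jumps from about 0.21 to about 0.30. A shift like this can indicate deterioration that no single filing states outright. Multiple breaks, trends, and significance testing would need more elaborate methods.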

Closing the Gap: Implications for Policy and Oversight

The Autonomous Disclosure Regulator (ADR) framework points to a substantial ‘Welfare Gain Potential’ from stronger oversight and reduced information asymmetry, quantified as a cumulative welfare recovery of 360,050%. The figure is not a simple financial return. It represents the aggregate benefit of correcting discrepancies and inefficiencies in current disclosure practices. By measuring the gap between reported data and actual outcomes, the ADR lets regulatory bodies identify and correct systemic issues proactively. The authors argue that the size of this potential recovery shows how much economic value improved data clarity and accountability could deliver. In their view, this makes a compelling case for widespread adoption of the framework to maximize societal benefit and limit losses from inadequate transparency.

The newly developed framework significantly bolsters the impact of the Agent-First Disclosure Mandate (ADS) by moving beyond simple publication of information to demanding its presentation in a format readily understood by machines. This isn’t merely about making data available, but ensuring it is genuinely accessible for automated analysis and oversight. By enforcing machine-readability and semantic clarity – meaning data is tagged and structured with consistent meaning – the framework enables regulators and oversight bodies to efficiently verify claims, detect inconsistencies, and ultimately, improve the effectiveness of compliance efforts. This shift from ‘nominal transparency’ – where information exists but is difficult to process – to true data accessibility unlocks the potential for proactive monitoring and a substantial reduction in information asymmetry, paving the way for more robust and reliable agent accountability.
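
Machine-readability with semantic clarity means that every disclosed value carries an explicit concept tag, unit, and period that software can check. Inline XBRL applies this idea to real filings. The sketch below is far simpler and entirely hypothetical: the `TaggedFact` record, the three-concept vocabulary, and the validation rules are illustrative assumptions, not the Agent-First Disclosure Mandate's format and not XBRL.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass(frozen=True)
class TaggedFact:
    """One disclosed value with an explicit concept tag, unit and period."""
    concept: str      # hypothetical taxonomy tag
    value: float
    unit: str         # three-letter currency code, e.g. "USD"
    period_end: str   # ISO 8601 date, e.g. "2025-06-30"


ALLOWED_CONCEPTS = {"Revenue", "NetIncomeLoss", "CashAndEquivalents"}  # toy vocabulary


def validate(fact: TaggedFact) -> list[str]:
    """Return a list of problems; an empty list means the fact is machine-usable."""
    problems = []
    if fact.concept not in ALLOWED_CONCEPTS:
        problems.append(f"unknown concept {fact.concept!r}")
    if len(fact.unit) != 3 or not fact.unit.isupper():
        problems.append(f"unit {fact.unit!r} is not a 3-letter code")
    try:
        date.fromisoformat(fact.period_end)
    except ValueError:
        problems.append(f"period_end {fact.period_end!r} is not an ISO date")
    return problems


good = TaggedFact("Revenue", 1.25e9, "USD", "2025-06-30")
vague = TaggedFact("Sales-ish", 1.25e9, "dollars", "Q2")
print(json.dumps(asdict(good)))
print(validate(vague))
```

The second record may be perfectly readable to a human, yet it fails every automated check. That is the difference between nominal transparency and data that an oversight agent can actually use.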

Implementing enhanced disclosure frameworks successfully requires going beyond formal compliance to tackle non-informative compliance and nominal transparency. Entities often satisfy the letter of disclosure requirements without conveying genuinely useful information. This creates a façade of openness that obscures critical details, hinders oversight, and prevents stakeholders from accurately assessing risk or potential welfare gains. Overcoming these problems calls for attention to data quality, standardization, and machine-readability, so that disclosures are not only present but also accessible, understandable, and able to drive real improvements in accountability and welfare recovery. Verifiable semantic clarity is therefore essential to unlocking the full potential of these disclosure initiatives.

The research illuminates a critical divergence between information access and actionable insight, a phenomenon best understood through the lens of computational asymmetry. This asymmetry introduces a new form of semantic friction, which hinders rational price discovery not through a lack of data but through the velocity at which agents process it. In this respect the work echoes Marcus Aurelius, who wrote, “The impediment to action advances action. What stands in the way becomes the way.” Increasing computational complexity is not an insurmountable obstacle to market efficiency. Instead, it calls for a proactive shift toward automated regulatory oversight, a response that grows directly out of recognizing and addressing the impediment itself. The study’s focus on agentic velocity suggests that regulatory interventions must match this pace to restore equilibrium.

The Road Ahead

The analysis, while illuminating the emerging dynamics of computational asymmetry, only scratches the surface of a far deeper problem. The notion of ‘rational inattention’ itself needs rigorous re-evaluation. It presumes bounded cognitive capacity operating on a relatively static information landscape, but that landscape is no longer static. The limiting factor is no longer processing information; it is filtering the deluge generated by agentic velocity. Future work must therefore shift from modeling investor behavior to modeling the evolution of information itself, specifically how automated systems create, propagate, and ultimately distort price signals.

A crucial, and largely unaddressed, limitation lies in the difficulty of establishing causality. Does increased agentic velocity cause diminished market efficiency, or does it simply reveal pre-existing inefficiencies exacerbated by the speed of execution? Disentangling these effects will demand novel econometric techniques, perhaps drawing inspiration from the study of complex systems and network theory. Furthermore, the proposed automated disclosure regulations, while conceptually sound, risk becoming a Sisyphean task unless grounded in a formal understanding of semantic friction – the cost of translating automated signals into human-interpretable information.

Ultimately, the pursuit of ‘fair’ or ‘efficient’ markets may prove to be a category error. The true challenge lies not in optimizing existing structures, but in acknowledging the inherent instability introduced by self-improving algorithms. Optimization without analysis is self-deception, and the pursuit of perfectly rational agents ignores the fundamental truth: systems evolve, and control is, at best, a temporary illusion.

Original article: https://arxiv.org/pdf/2602.17895.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-23 08:48