Author: Denis Avetisyan

Researchers have developed a new framework for verifying the successful removal of malicious triggers from deep learning models.

This work introduces a method for transparent backdoor unlearning in CNNs using explainable AI techniques like Grad-CAM and a novel Trigger Attention Ratio (TAR) metric.

Despite the increasing robustness of deep neural networks, they remain vulnerable to subtle backdoor attacks that compromise security and reliability. This challenge motivates the research presented in ‘Illuminating the Black Box: Real-Time Monitoring of Backdoor Unlearning in CNNs via Explainable AI’, which introduces a novel framework for transparent and verifiable removal of these malicious triggers. By integrating real-time monitoring with Gradient-weighted Class Activation Mapping (Grad-CAM) and a quantitative Trigger Attention Ratio (TAR), the authors demonstrate significant reductions in attack success rates while preserving clean accuracy. Could this approach pave the way for more trustworthy and auditable deep learning systems deployed in critical applications?

Unmasking the Ghost in the Machine: Backdoor Vulnerabilities

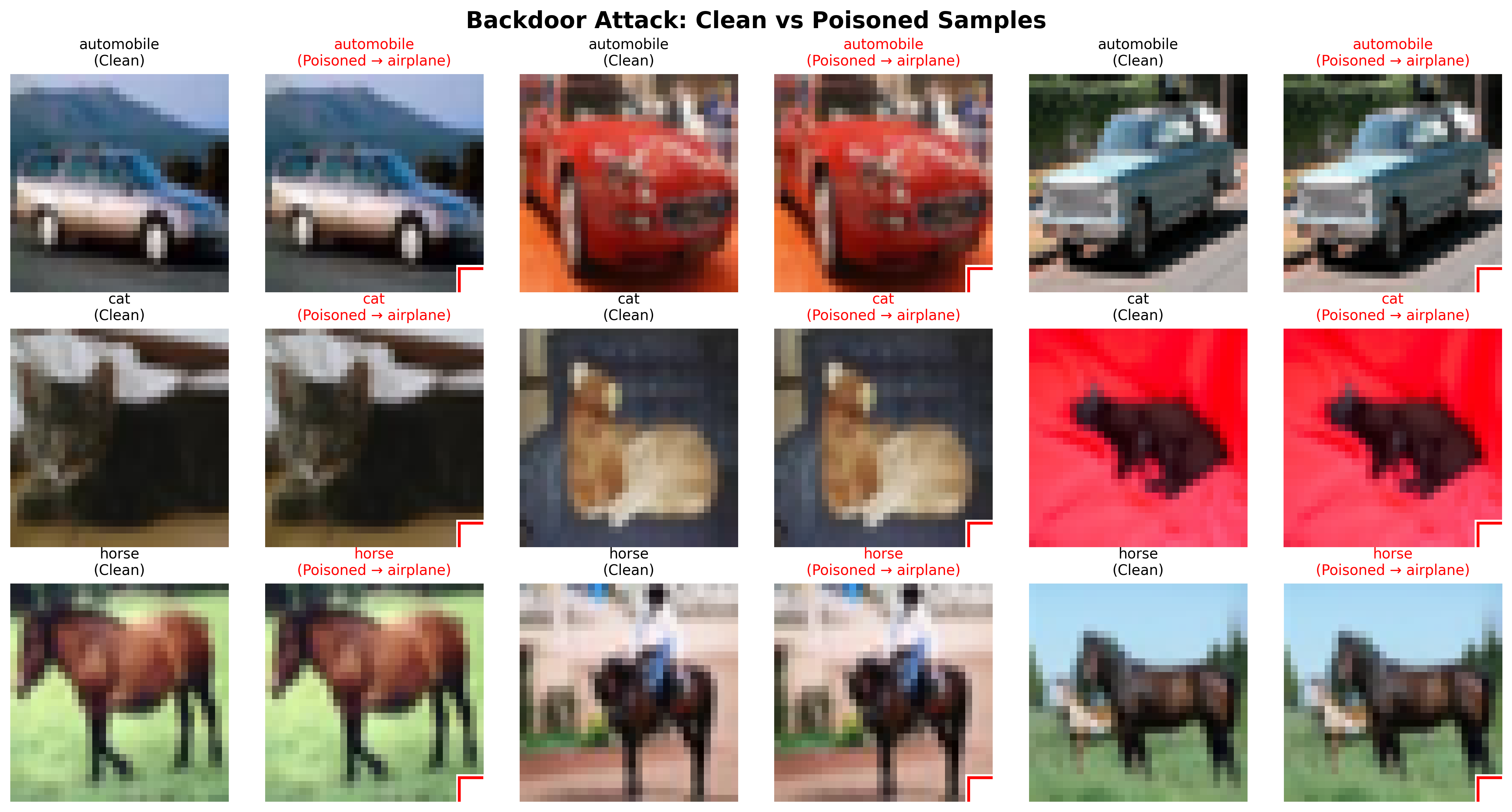

Despite their remarkable capabilities, deep neural networks are becoming increasingly susceptible to a particularly insidious form of attack known as “backdoors.” These aren’t brute-force intrusions, but rather carefully crafted manipulations during the training process. An attacker introduces subtle, often imperceptible, changes to the training data, associating specific, rarely occurring patterns – the “trigger” – with incorrect classifications. The network then learns to correctly classify most inputs, maintaining a facade of normalcy, but will consistently misclassify any input containing the hidden trigger. This means a self-driving car, for example, could be tricked into ignoring a stop sign if a tiny, strategically placed sticker – the trigger – is present. The danger lies in the stealth of these backdoors; they remain dormant until activated, making detection exceptionally challenging and posing a significant threat to the reliability of these increasingly ubiquitous systems.

Backdoor attacks represent a significant threat to deep learning systems by subtly manipulating a model’s decision-making process. These attacks don’t alter a model’s general accuracy; instead, they embed hidden triggers – specific patterns or perturbations within input data – that cause misclassification only when present. For example, an image recognition system might correctly identify images of school buses under normal conditions, but consistently misclassify those containing a tiny, almost imperceptible sticker as something else entirely. This compromised integrity stems from the attacker poisoning the training data with these triggered examples, effectively creating a ‘secret key’ for malicious control. The danger lies in the covert nature of these attacks; the model appears functional during standard evaluation, masking the vulnerability until the trigger is activated, potentially leading to severe consequences in security-critical applications.

Current defenses against deep learning backdoors frequently prove inadequate when confronted with adaptive adversaries and real-world complexities. Many existing methods rely on detecting anomalies during training, but sophisticated attacks can bypass these checks by subtly manipulating the training data, making the malicious trigger nearly indistinguishable from legitimate patterns. Furthermore, these defenses often suffer from high false positive rates, flagging benign inputs as malicious, or introduce significant performance degradation. This necessitates a shift towards more robust security measures, particularly those focused on enhancing model interpretability – allowing researchers to understand why a model makes a certain prediction, thereby revealing hidden triggers and malicious behaviors. A deeper understanding of the model’s internal logic is crucial for developing defenses that are not easily circumvented and maintain high accuracy, paving the way for trustworthy and secure artificial intelligence systems.

A Balanced Strategy for Exorcising Malicious Patterns

The Balanced Unlearning Strategy is a composite technique designed to mitigate the effects of data poisoning attacks. It integrates three core components: gradient ascent, supervised learning, and Elastic Weight Consolidation (EWC) regularization. Gradient ascent is applied specifically to samples identified as containing backdoors, increasing the model’s loss on these inputs and effectively weakening the malicious patterns. Simultaneously, standard supervised learning is employed on clean, unpoisoned data to maintain and reinforce correct classification performance. To prevent the unlearning process from degrading the model’s overall knowledge, EWC regularization is utilized; this penalizes significant changes to important model weights, thereby addressing the potential for ‘catastrophic forgetting’ and preserving performance on previously learned tasks.

The unlearning strategy utilizes a dual optimization process: gradient ascent on specifically crafted backdoor samples and supervised learning on clean data. Gradient ascent is applied to maximize the loss function with respect to the backdoor triggers, effectively disrupting the model’s reliance on these malicious patterns. Simultaneously, supervised learning, employing standard cross-entropy loss, reinforces the correct classification of legitimate, non-backdoored data. This concurrent approach ensures the model actively degrades performance on poisoned inputs while maintaining, and potentially improving, performance on genuine inputs, creating a targeted and efficient unlearning mechanism.

Elastic Weight Consolidation (EWC) mitigates catastrophic forgetting during the unlearning of backdoor attacks by identifying and protecting important weights within the neural network. This is achieved through the calculation of a Fisher Information Matrix, which estimates the importance of each weight based on its impact on the loss function for clean data. During unlearning, EWC applies a regularization penalty proportional to the Fisher Information, discouraging significant changes to these critical weights. This process ensures that while the model unlearns the malicious backdoor trigger, it retains its previously learned knowledge and maintains performance on benign inputs, preventing a substantial drop in accuracy on the original task.

Evaluations demonstrate that the proposed Balanced Unlearning Strategy significantly reduces the Attack Success Rate (ASR). Specifically, the method achieves a 94.28% reduction in ASR, decreasing from an initial rate of 96.51% to 5.52%. This performance metric indicates a substantial improvement over existing unlearning techniques, as measured by the ability to mitigate successful adversarial attacks following the removal of backdoor triggers from the model.

Revealing the Shadow: Visualizing Unlearning Success

Gradient-weighted Class Activation Mapping (Grad-CAM) is employed as an Explainable AI (XAI) technique to provide visual insights into the model’s decision-making process during unlearning. Grad-CAM generates heatmaps highlighting the image regions that most influence the model’s output for a given class. By applying Grad-CAM, we can specifically identify areas corresponding to the backdoored trigger, enabling visualization of the model’s attention. This allows for qualitative assessment of whether the unlearning process successfully reduces the model’s focus on the trigger region and shifts attention towards relevant object features. The resulting heatmaps provide a direct visual confirmation of the model’s evolving attention patterns throughout the unlearning procedure.

Real-time monitoring of model attention during unlearning is achieved using the Gradient-weighted Class Activation Mapping (Grad-CAM) technique. This allows for the visualization of regions within input images that most influence the model’s decision-making process. During unlearning, Grad-CAM highlights the attention focused on the trigger region; a successful unlearning strategy results in a demonstrable reduction of attention in this area. By observing these attention maps dynamically throughout the unlearning process, it is possible to visually confirm the diminishing influence of the trigger and validate the effectiveness of the unlearning technique, providing insights beyond quantitative metrics.

The Trigger Attention Ratio (TAR) is a quantitative metric developed to assess the efficacy of unlearning techniques by measuring the proportion of a model’s attention directed towards the trigger region versus the object region within an image. TAR is calculated as the ratio of average attention weights within the identified trigger region to the average attention weights across the entire object region. A lower TAR value indicates a successful reduction in the model’s reliance on the trigger for classification, signifying that the unlearning process has effectively diminished the trigger’s influence on the model’s decision-making process. This provides a numerical assessment complementary to visual inspection of attention maps generated through techniques like Grad-CAM.

Evaluations conducted using the BadNets attack on the CIFAR-10 dataset demonstrate that the proposed unlearning strategy achieves a 67.8% reduction in Attack Success Rate (ASR). This reduction in ASR is quantitatively supported by the Trigger Attention Ratio (TAR) metric and confirmed through visual inspection of attention maps. Critically, the unlearning process maintains a high level of clean accuracy at 82.06%, which represents 99.48% of the original pre-attack accuracy, indicating minimal performance degradation on non-attacked samples.

Beyond Remediation: Towards Truly Robust Intelligence

A newly developed balanced unlearning strategy offers a promising advancement in the pursuit of robust and trustworthy artificial intelligence. This approach directly addresses the vulnerability of machine learning models to adversarial attacks – carefully crafted inputs designed to mislead the system. Rather than simply removing the influence of compromised data, the strategy seeks a nuanced equilibrium, minimizing performance degradation on benign data while effectively neutralizing the impact of malicious inputs. By strategically adjusting model parameters, the system aims to ‘forget’ harmful information without sacrificing its overall knowledge, leading to more resilient AI capable of maintaining reliable performance even under duress. This proactive defense mechanism represents a significant step towards deploying AI systems with greater confidence in real-world applications where security and dependability are paramount.

Evaluating the success of unlearning – the process of removing specific data from a trained model – requires a multifaceted approach, and researchers are increasingly turning to a combination of quantitative metrics and visual inspection. While metrics like Targeted Attack Rate (TAR) provide a numerical assessment of how effectively a model forgets sensitive information – measuring the success rate of adversarial attacks designed to recover that data – these figures alone can be misleading. Visual inspection techniques, such as analyzing changes in model weights or activation patterns, offer crucial qualitative insights, revealing subtle but significant shifts in the model’s behavior that quantitative metrics might miss. This synergistic combination allows for a more comprehensive and trustworthy evaluation of unlearning effectiveness, ensuring that the model not only appears to have forgotten, but has genuinely neutralized the influence of the removed data and maintains its overall performance.

Researchers anticipate broadening the scope of this unlearning strategy to address increasingly sophisticated adversarial attacks, moving beyond current methods to encompass scenarios involving adaptive attackers and stealthy data manipulations. Simultaneously, efforts are underway to assess the technique’s transferability across a wider range of machine learning architectures, including those utilized in computer vision, natural language processing, and reinforcement learning. This expansion aims to determine the robustness of the approach beyond its initial testing environment and to identify potential limitations or necessary adaptations for different data types and model complexities. Ultimately, the goal is to establish a versatile and broadly applicable unlearning framework capable of bolstering the security and trustworthiness of AI systems across diverse applications.

The increasing deployment of artificial intelligence in high-stakes applications, from healthcare diagnostics to autonomous vehicles, necessitates a shift from reactive security measures to proactive strategies for ensuring system safety and reliability. Traditional approaches often focus on defending against attacks after they occur, leaving systems vulnerable during the initial breach or facing evolving threat landscapes. Proactive unlearning, however, addresses this by enabling AI systems to selectively “forget” potentially harmful or compromised data, preventing its influence on future decisions. This capability isn’t simply about data privacy; it’s about building resilience into the core functionality of AI, ensuring consistent and trustworthy performance even when faced with adversarial inputs or data corruption. By preemptively mitigating the risks associated with tainted data, proactive unlearning becomes a fundamental component in establishing confidence in AI systems operating in critical and sensitive environments, fostering broader adoption and realizing the full potential of this transformative technology.

The pursuit of verifiable machine unlearning, as detailed in this work, inherently demands a willingness to dismantle established systems to expose their vulnerabilities. This mirrors the spirit of intellectual exploration-a controlled demolition of assumptions to reveal underlying mechanics. As Henri Poincaré observed, “Mathematics is the art of giving reasons.” This ‘art’ extends to adversarial machine learning; the framework presented here doesn’t simply claim to remove backdoors, it provides a means to demonstrate it via real-time monitoring and the trigger attention ratio, essentially offering a rigorous proof of its reasoning. The study offers a method for reverse-engineering the network’s decision-making process, validating the efficacy of unlearning and exposing the residual influence of malicious triggers.

What’s Next?

The framework detailed herein offers a compelling, if temporary, victory in the ongoing arms race against adversarial manipulation. Real-time monitoring of unlearning via techniques like Grad-CAM, coupled with a quantitative metric-the trigger attention ratio-provides a degree of transparency previously absent in backdoor removal. Yet, it merely shifts the problem. The system reveals where the network was compromised, but not necessarily how the attacker conceived the initial infiltration. Future work must address the generative side of these attacks – understanding the attacker’s search space, their likely priors, and the subtle features they exploit before even attempting insertion.

Furthermore, the reliance on explainable AI techniques, while valuable for debugging, introduces its own vulnerabilities. An adversary aware of the monitoring process could craft triggers designed to appear benign under the chosen XAI method, effectively camouflaging persistent backdoors. The best hack, ultimately, is understanding why it worked. Every patch is a philosophical confession of imperfection.

The logical endpoint isn’t simply “backdoor-free” networks, but systems that actively expect compromise. A network should be able to quantify its own uncertainty, identify anomalous behavior not attributable to known attacks, and dynamically re-weight its learned features. The goal isn’t to eliminate the bug, but to build a system that thrives in its presence – a kind of adversarial Darwinism for artificial intelligence.

Original article: https://arxiv.org/pdf/2511.21291.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

See also:

- Spotting the Loops in Autonomous Systems

- Seeing Through the Lies: A New Approach to Detecting Image Forgeries

- Staying Ahead of the Fakes: A New Approach to Detecting AI-Generated Images

- Julia Roberts, 58, Turns Heads With Sexy Plunging Dress at the Golden Globes

- Palantir and Tesla: A Tale of Two Stocks

- Gold Rate Forecast

- The Glitch in the Machine: Spotting AI-Generated Images Beyond the Obvious

- How to rank up with Tuvalkane – Soulframe

- 20 Best TV Shows Featuring All-White Casts You Should See

- TV Shows That Race-Bent Villains and Confused Everyone

2025-11-29 07:14